One-to-One Comparisons

•

DeepSeek R1 vs V3: Which Model Should You Use?

DeepSeek R1 vs DeepSeek V3: Compare reasoning power, coding performance, speed, and cost to see which DeepSeek model is best for AI applications.

Written By :

Bhavyadeep Sinh Rathod

If you’re comparing DeepSeek R1 vs V3, you’re already past the surface-level research. You’ve seen the benchmark scores. You know both models perform well on MMLU, MATH-500, and HumanEval. What’s still unclear is how that performance translates when you actually use them. One model might look stronger on paper but miss edge cases in debugging. Another might be faster but fall apart on multi-step reasoning. That gap between benchmark performance and real output is where the decision actually gets made. This guide is built to close that gap.

Here’s the bottom line. DeepSeek R1 is the reasoning model built for accuracy, multi-step logic, and complex problem solving. DeepSeek V3 is the general-purpose coding model optimized for speed, scale, and cost efficiency. To make that tradeoff concrete, I tested both models on real prompts across coding, debugging, system design, and data analysis. In this guide, you’ll see exactly how they perform, where each one wins, and which model you should choose based on your actual use case.

TL;DR

DeepSeek V3 vs R1 comes down to reasoning vs throughput. R1 is built for multi-step logic, debugging, and system design where correctness matters more than speed.

DeepSeek V3 is the better default for most developers. It handles code generation, APIs, and everyday tasks faster and at lower cost, making it easier to scale in production.

Use R1 selectively. It consistently catches deeper issues and edge cases that V3 can miss, especially in complex or failure-sensitive workflows.

Practical setup: Route 80–90% of tasks to V3, and switch to R1 only when the problem actually requires deeper reasoning.

What is DeepSeek V3 (Chat)?

DeepSeek V3 is a general-purpose model, accessed via the deepseek-chat endpoint. Released in December 2024, V3 is a 671B Mixture-of-Experts model that activates only 37B parameters per token. It was pre-trained on 14.8 trillion tokens and later distilled knowledge from R1 itself, giving it stronger analytical chops than a typical general-purpose LLM.

DeepSeek positions V3 as a direct competitor to GPT-4o and Claude 3.5 Sonnet, but at a fraction of the token cost. It doesn't generate reasoning traces. It responds directly, which means lower latency, fewer output tokens, and a significantly cheaper cost profile. If your workload is code generation, summarization, translation, tool calling, or any high-volume task where speed and cost efficiency matter as much as accuracy, V3 is your default AI coding assistant.

Related Read: DeepSeek vs ChatGPT: Which AI model wins?

What is DeepSeek R1 (Reasoner)?

DeepSeek R1 is DeepSeek's reasoning specialist, accessed via the deepseek-reasoner API endpoint. Released in January 2025, R1 was built on the V3 base and then trained through large-scale reinforcement learning to do something V3 cannot – to think before it answers. It generates explicit chain-of-thought traces, visible in <think> tags, where it breaks problems apart, checks its own logic, and backtracks before committing to a final response.

DeepSeek positions R1 as their answer to OpenAI's o1 series. The technical report backs that claim. R1 matches o1 on AIME 2024 and surpasses it on MATH-500, at roughly 96% lower cost per output token. If your pipeline involves complex debugging, mathematical proofs, or multi-step planning where a wrong answer is more expensive than a slow one, R1 is the model DeepSeek built for you.

DeepSeek R1 vs DeepSeek V3: What is the difference?

The difference between DeepSeek R1 and V3 is how each model approaches a problem. R1 thinks first, then answers. V3 answers directly. Every other difference basically originates from that.

Here, I have listed how they fundamentally differ on various factors.

Reasoning model vs general model

R1 is a reasoning model. It was trained to solve problems that require logical deduction, such as:

Math proofs

Multi-step debugging

System design with failure analysis

Its training rewarded the model for getting hard problems right, even if that meant spending more time and tokens.

On the other hand, V3 is a general-purpose model. It was trained to perform well across a broad range of tasks like:

Code generation

Summarization

Translation

Content writing

Tool calling

Its training optimized for breadth and efficiency, not depth on any single task type.

In practice, if you need to debug a race condition in async Python, R1 is the right tool.

If you need to generate a REST API scaffold, write documentation, and summarize a meeting transcript in the same pipeline, V3 handles all three without switching models.

Depth vs speed

R1 trades speed for depth. It generates internal reasoning tokens (often hundreds of them) before producing a final answer. On our Stripe webhook test, R1 identified a concurrency flaw in the idempotency design that V3 missed entirely. That depth costs time: R1 responses consistently take 2-3x longer than V3 on the same prompt.

V3 trades depth for speed. It produces output tokens directly, with no visible reasoning step. On our REST vs GraphQL test, V3 delivered an accurate, useful summary in roughly three seconds. R1 would have arrived at a similar answer, but slower and at higher token cost with no meaningful accuracy gain.

The benchmark gap makes this concrete. On MATH-500, R1 scores 97.3% versus V3's 90.0%. That 7-point gap represents problems where step-by-step reasoning is the difference between a correct and incorrect answer.

On MMLU (general knowledge), the gap narrows to 90.8% vs 88.5%, because broad knowledge recall doesn't benefit as much from chain-of-thought.

Step-by-step thinking vs direct output

R1 wraps its reasoning in <think> tags. You can read exactly how it broke the problem apart, where it reconsidered, and why it chose its final answer. On our WebSocket debugging test, R1's chain-of-thought traced through the async execution flow and identified that a NameError would fire in the finally block if path parsing failed before client_id was assigned.

V3 sidestepped the issue with an early return but never identified it as a distinct failure mode.

V3 produces clean, direct output with no reasoning trace. This makes its responses easier to parse programmatically and cheaper to run at scale. For API pipelines where you're processing thousands of requests, the absence of reasoning tokens means lower latency and lower cost per call.

The tradeoff is auditability. With R1, you can verify how the model reached its conclusion. With V3, you only see what it concluded. For regulated industries, safety-critical systems, or any workflow where you need to explain the model's reasoning to a human reviewer, R1's visible chain-of-thought has real value.

Accuracy vs efficiency

R1 optimizes for accuracy. Its self-verification loop catches errors that V3's direct output misses. On our CSV refactoring test, R1 flagged that the output file wouldn't be created on zero-match runs, a behavior that would break downstream processes. V3 didn't catch it. On our system design test, R1 identified five deadlock scenarios to V3's four, with named mitigations for each.

V3 optimizes for efficiency. At $0.27/$1.10 per million tokens versus R1's $0.55/$2.19, V3 costs roughly half as much per query. Factor in R1's additional reasoning tokens, and the effective cost gap widens to 3-5x on reasoning-heavy tasks. V3 also supports a 128K context window versus R1's 64K, which matters for long-document processing.

For most production workloads, V3's accuracy is sufficient. The gap only becomes critical on tasks where a wrong answer is more expensive than a slow answer. Tasks like payment processing logic, security audits, mathematical proofs, and complex system design.

Read More: DeepSeek alternatives: 6 AI tools worth switching to

DeepSeek V3 vs DeepSeek R1: Feature comparison

The sections above explained what R1 and V3 are built for. The table below puts them side by side across 23 specific criteria so you can compare exactly where each model leads, from context window size and hallucination control to API pricing and production scalability.

Criteria | DeepSeek R1 | DeepSeek V3 |

Model type | Reasoning (RL-trained) | General-purpose (MoE) |

Model purpose | Deep reasoning, math, logic | Broad task coverage, speed |

Ease of use | Requires parsing CoT output | Direct, clean responses |

Speed and efficiency | Slower (generates reasoning tokens) | Faster (direct output) |

Accuracy (reasoning tasks) | 97.3% MATH-500, 79.8% AIME | 90.0% MATH-500, 39.6% AIME |

Instruction following | Strong, but verbose | Concise and direct |

Output style | Step-by-step reasoning traces + final answer | Direct answer |

Context window | 64K tokens (API) | 128K tokens |

Context awareness depth | Strong on long-document analysis (FRAMES) | Strong on 128K NIAH tests |

Coding performance (HumanEval) | 90.2% | 82.6% (HumanEval-Mul) |

Debugging capability | Excels at tracing logical errors | Good for pattern-based fixes |

Code generation quality | High accuracy, verbose output | Clean, production-ready code |

Logical reasoning (GPQA Diamond) | 71.5% | 59.1% |

Multi-step problem solving | Core strength (RL-trained for this) | Adequate, not specialized |

Context retention across prompts | Strong within CoT | Strong across 128K window |

Hallucination control | Lower hallucination rate via self-verification | Good, improved in V3-0324 |

Adaptability to complex prompts | Excellent on multi-constraint tasks | Good on standard prompts |

Chain-of-thought reasoning depth | Deep, explicit, auditable | Implicit, no trace visible |

Token efficiency and cost | $0.55/$2.19 per 1M tokens (input/output) | $0.27/$1.10 per 1M tokens |

Performance on large inputs | Handles complex long-form analysis | Strong 128K context processing |

Scalability for production | Higher cost per query | Lower cost, easier to scale |

Latency under heavy workloads | Higher latency (reasoning overhead) | Lower latency |

Pricing (API) | $0.55 input / $2.19 output per 1M tokens | $0.27 input / $1.10 output per 1M tokens |

I tested DeepSeek R1 vs V3 on real prompts: Here's what I found

Benchmarks tell you how a model performs on standardized tests. Real prompts tell you how it performs on your actual work. I ran both models through seven tasks that mirror daily developer workflows, using DeepSeek's web app with the V3 (chat) and R1 (Deep Think) models at default settings. The results below are where the comparison stops being theoretical.

Coding task

I started with something simple. No starter code, no context, just a clean prompt asking both models to write the same function from scratch. I wanted to see how each model approaches a greenfield coding task: does it just produce working code, or does it think about edge cases, testing, and how the function fits into a larger project?

Prompt

DeepSeek V3 output

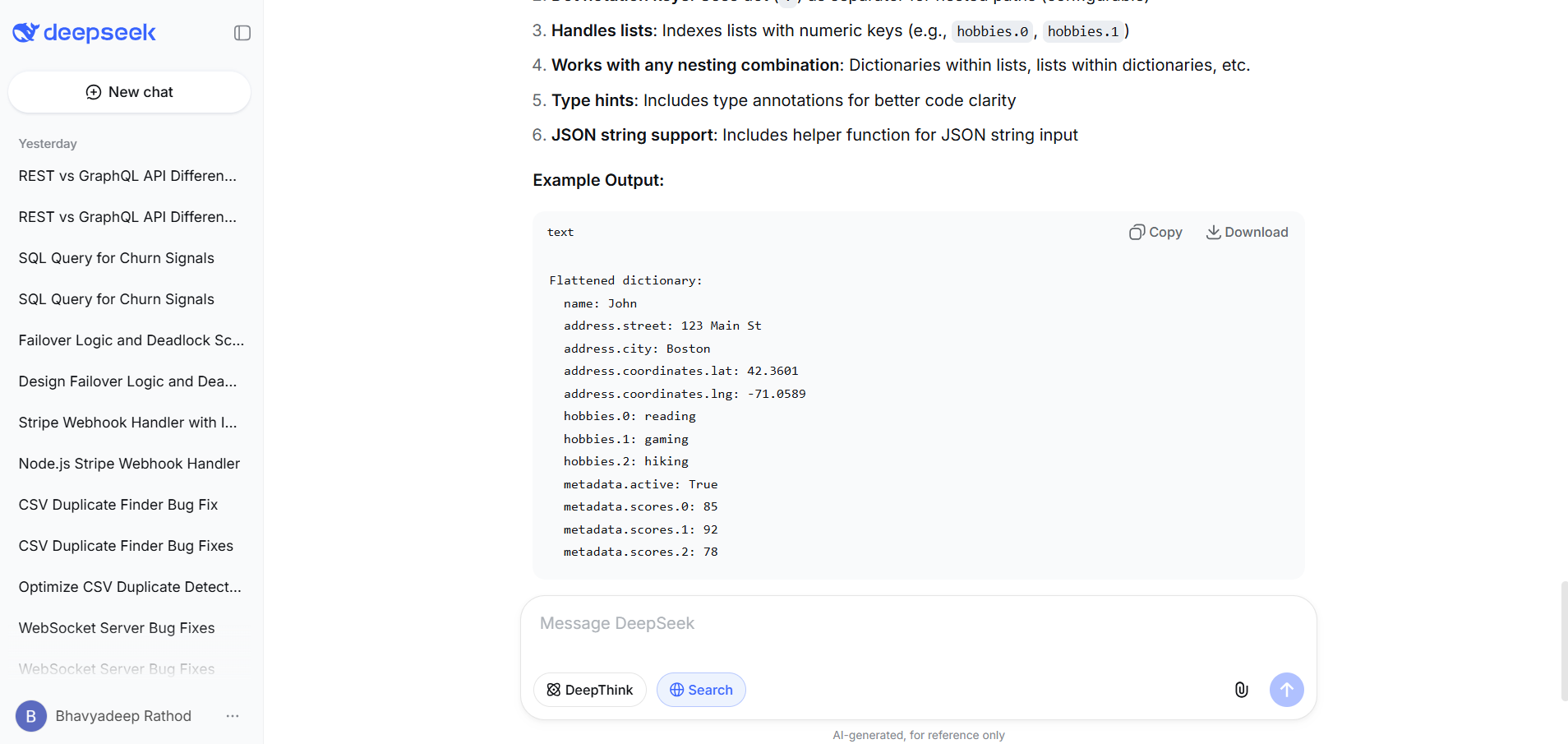

V3 delivered a comprehensive response with two functions:

flatten_json for dictionaries and a separate flatten_json_string helper for raw JSON string input.

The code included full type hints (Union[Dict, Any]), docstrings, and six test cases covering nested dicts, JSON strings, and root-level lists. It handled dicts, lists with numeric indices, and primitives correctly.

The tradeoff: ~100 lines of output including boilerplate, test cases, and a usage guide. If you wanted a production-ready utility file, V3 handed you one. If you wanted just the function, you'd need to extract it from the noise.

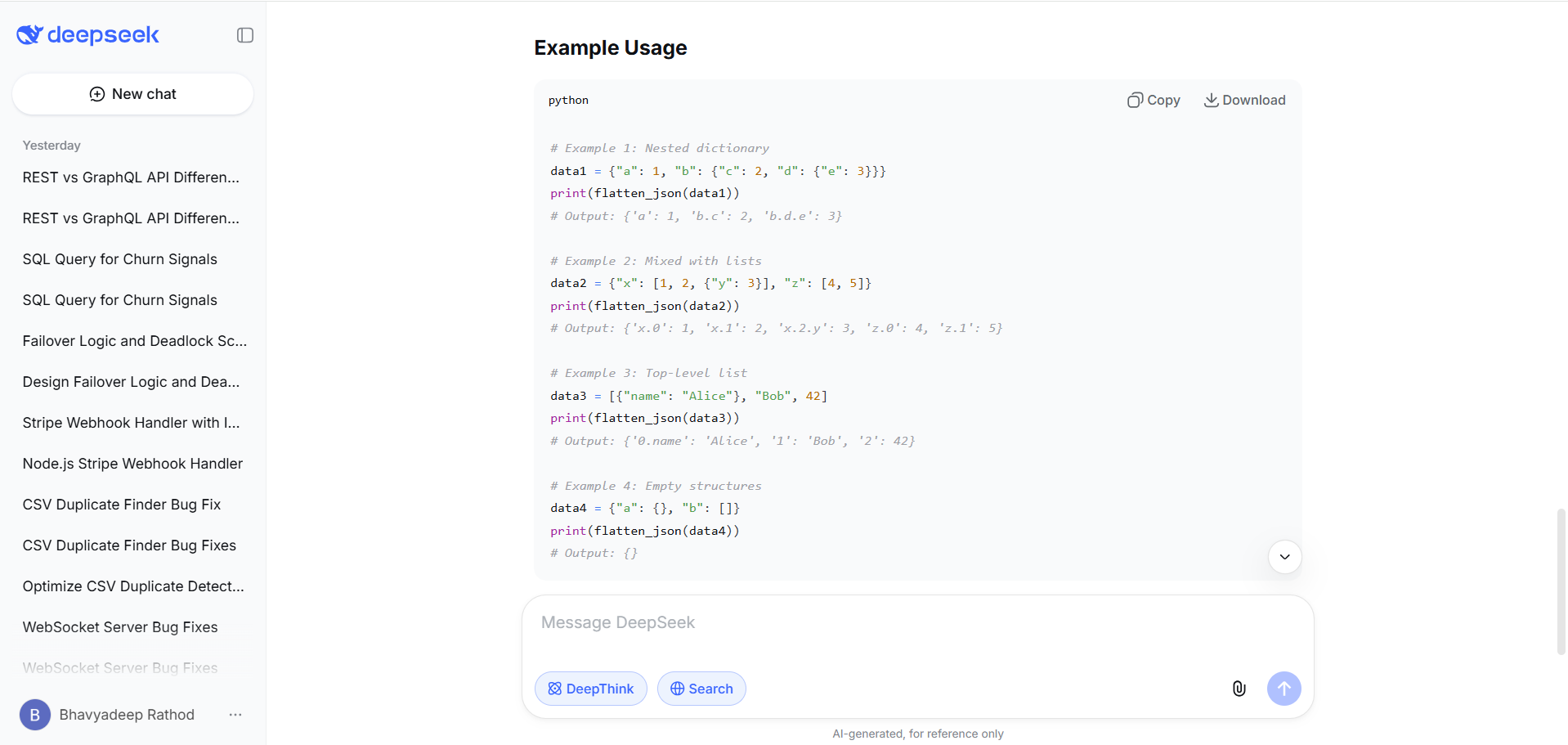

DeepSeek R1 output

R1 returned a single, tight function (~30 lines) that handled the same cases: nested dicts, lists with index-based keys, and primitives. The code was structurally cleaner, consolidating dict and list handling with isinstance(value, (dict, list)) instead of separate branches.

It included four concise test examples and flagged an edge case V3 didn't: empty structures ({"a": {}, "b": []}) return an empty dict {}, which is a deliberate design choice R1 called out explicitly. The explanation section was compact and focused on the recursive logic rather than usage tips.

The verdict

Both functions are correct and handle the same core cases. The difference is in engineering style. V3 over-delivered: type hints, a JSON string parser, extensive test scaffolding. Useful if you're building a utility module from scratch.

R1 was more precise: tighter code, cleaner abstraction, and it surfaced the empty-structure edge case that V3 silently handled without comment. For a senior developer who wants a clean function to drop into an existing codebase, R1's output requires less trimming. For someone bootstrapping a new project who wants tests and helpers included, V3 saves a step.

Debugging task

For the second test, I wanted to flip the task. Instead of writing new code, I handed both models a broken one. I took an async WebSocket server (~80 lines) with several bugs baked in and asked each model to find them, explain why they happen, and fix them.

My focus here was on diagnostic depth: does the model just spot the obvious issues, or does it trace through the execution flow and catch the ones that only surface under real load?

Prompt

DeepSeek V3 output

V3 identified five bugs total. The first three matched the bugs flagged in the code comments: the message_history memory leak in unregister(), the race condition from iterating connected_clients during await points in broadcast(), and the IndexError from unvalidated path parsing. V3 then went further, identifying two additional issues: a missing asyncio.Lock around all registry mutations (register, unregister, and the broadcast snapshot), and the fact that message_history trimming only applies to the sender, not recipients.

For each bug, V3 explained the mechanism clearly and provided corrected code. It also delivered a completely corrected version of the entire server at the end, incorporating all five fixes together with deque(maxlen=100) replacing the manual trim logic. Total output: roughly 1,200 tokens.

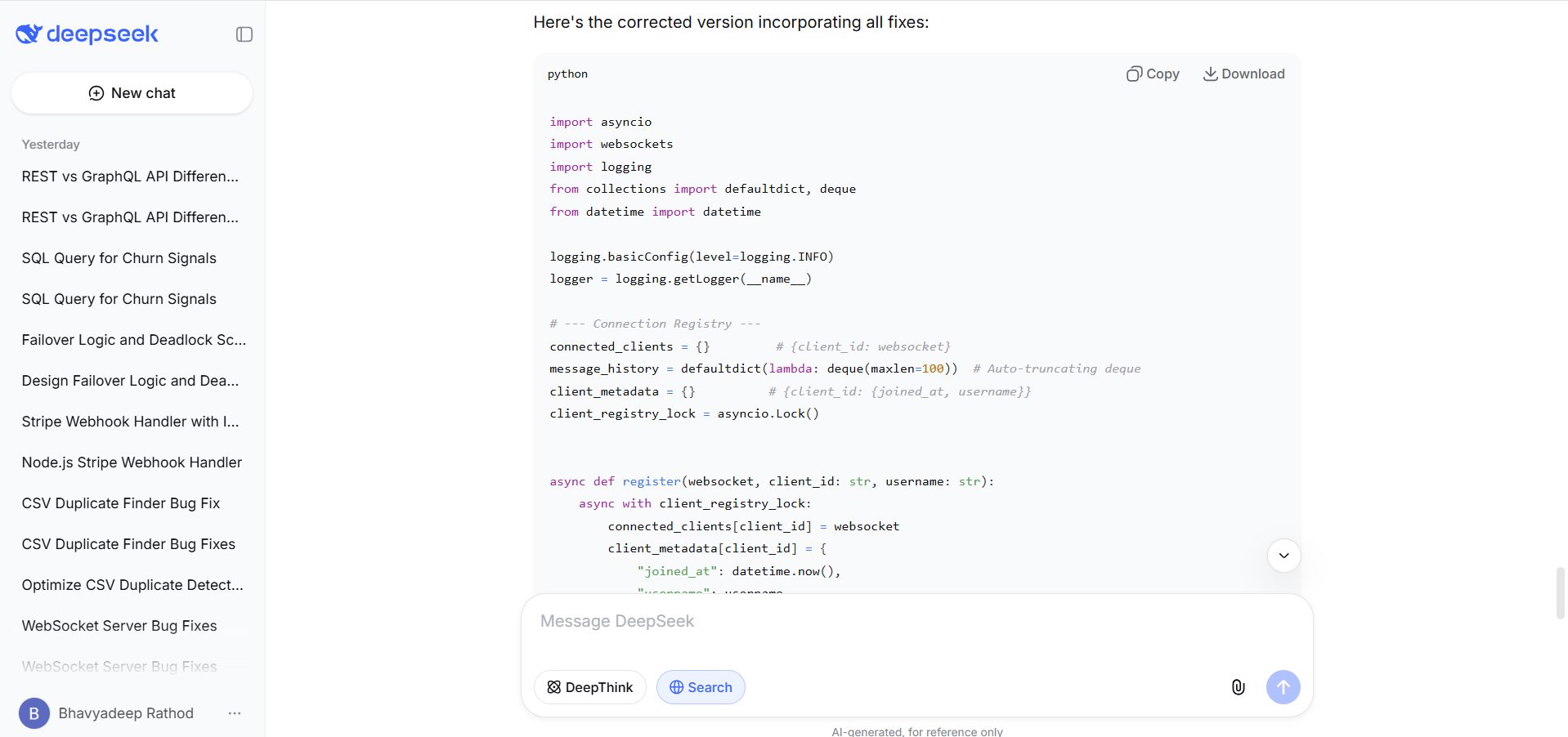

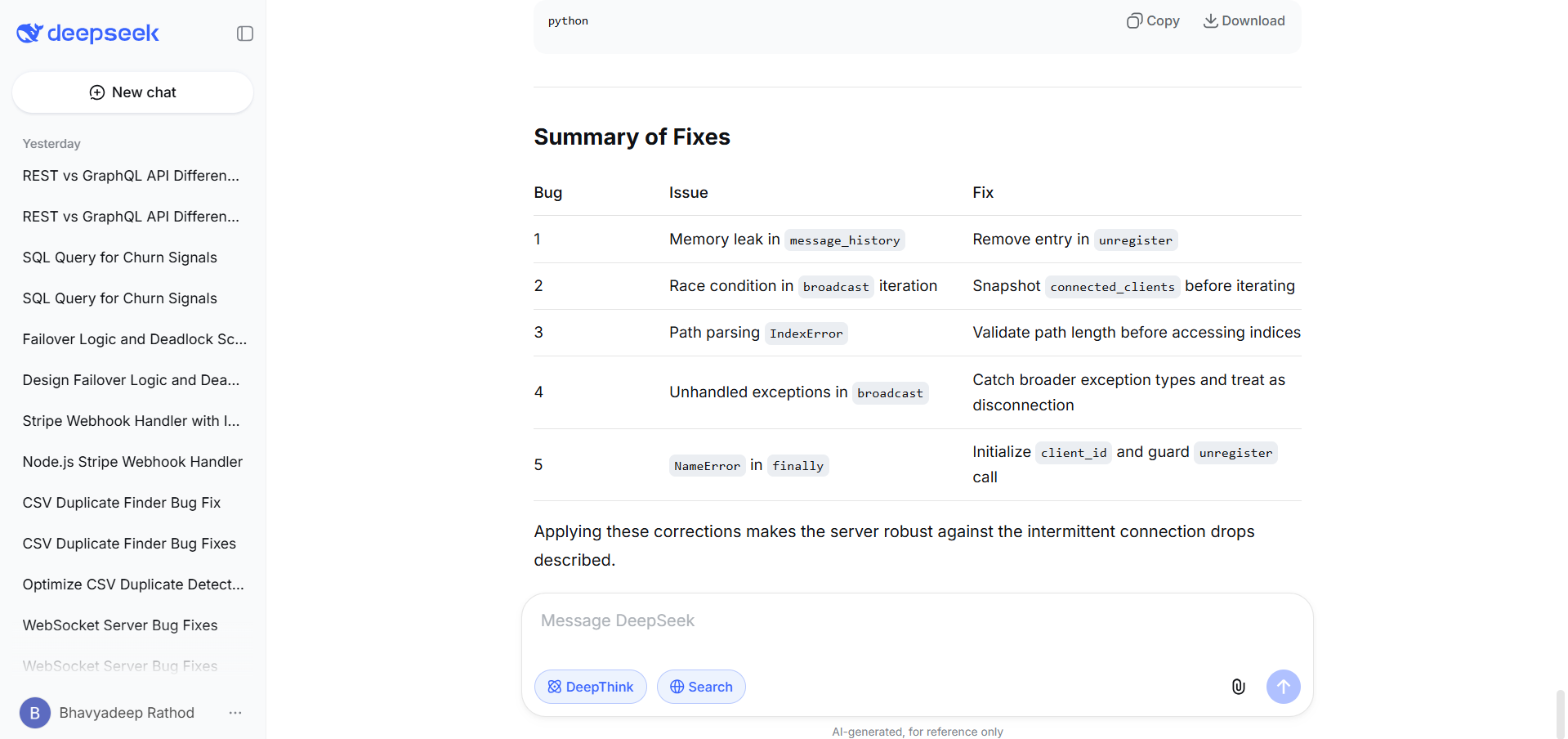

DeepSeek R1 output

R1 also caught the same three core bugs and provided accurate explanations of each. Its "why it occurs" explanations were slightly more precise on the race condition: it explicitly called out that await websocket.send(message) is the context-switch point where the event loop can interleave register/unregister calls. R1 then identified two additional bugs that V3 missed.

First, unhandled exception types in broadcast(): V3 only catches ConnectionClosed, but under load, transient network errors or WebSocketException can also break the loop and propagate to the caller, crashing the sending client's handler. R1 added a broader exception catch with logging.

Second, a NameError in the finally block: if path parsing fails before client_id is assigned, the finally block calls unregister(client_id) on an undefined variable. V3's fix for Bug 3 sidestepped this by returning early, but R1 explicitly identified it as a distinct failure mode and added a guard. R1 concluded with a summary table mapping each bug to its fix. Total output: roughly 1,000 tokens.

The verdict

Both models found the three obvious bugs and went beyond them, but they found different additional bugs. V3 caught the missing lock and the per-sender-only trim logic. R1 caught the narrow exception handling and the NameError in the finally block. Neither model found all seven issues on its own.

For this audience, the distinction matters. V3's lock recommendation is architecturally sound but arguably defensive for single-threaded asyncio where context switches only happen at await points. R1's catches are more operationally critical: the narrow exception handler will crash the broadcast loop under real load, and the NameError will fire on malformed connections. R1 found the bugs more likely to cause the exact symptom described in the prompt (intermittent connection drops under load).

V3 produced the more complete refactored codebase. If you're triaging a production incident, R1 gets you to the root cause faster. If you're refactoring the server for long-term reliability, V3's output is closer to a merge-ready PR.

Code refactoring and optimization

Most of a developer's coding time goes into refactoring, not writing fresh code. So for this test, I gave both models a working but painfully slow O(n²) duplicate finder along with two real CSV files, and asked them to make it faster. I was curious whether each model would just optimize the algorithm or also audit the existing logic for bugs it wasn't asked about.

Prompt

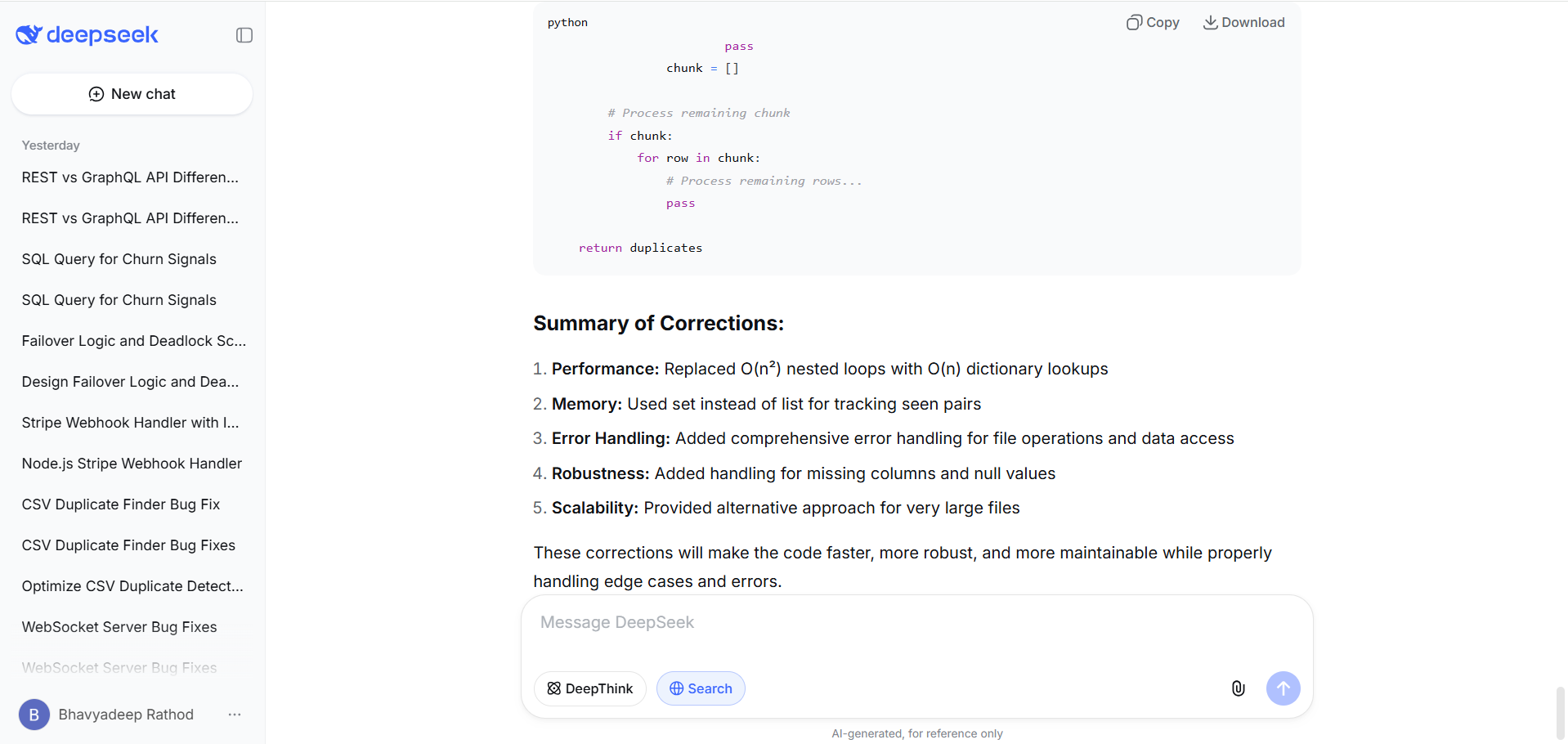

DeepSeek V3 output

V3 identified six issues. It caught the two performance bottlenecks: the nested loop (O(n×m)) and the linear-scan seen_pairs list. It replaced the nested loop with a dictionary keyed by (normalized_name, email) and swapped the list for a set. Beyond performance, V3 flagged four additional concerns: missing file I/O error handling, potential KeyError on missing columns, inconsistent None/empty-string handling on .strip() calls, and memory inefficiency for very large files (proposing a chunked processing alternative).

The corrected code was comprehensive, wrapping every file operation in try-except blocks with specific exception types (FileNotFoundError, PermissionError, csv.Error). V3 also added null-safe value access (row_a[col].strip() if row_a[col] else ""). Total output: roughly 1,500 tokens across six numbered sections plus a full corrected version.

DeepSeek R1 output

R1 identified five issues. It caught te same two performance bottlenecks and applied the same fix: dictionary index for File B, set for seen_pairs. R1 also flagged missing file I/O error handling and memory inefficiency. Where R1 diverged from V3 was in two areas. First, it caught a subtle behavioral issue V3 missed: the output file is only created when duplicates exist (if len(duplicates) > 0), which means downstream processes expecting an output file will break on zero-match runs. R1 fixed this by always writing the file, even if empty.

Second, R1 normalized the pair_key construction using sorted() to make it order-independent ("|".join(sorted([txn_a, txn_b]))), noting that while the current one-directional comparison makes this unnecessary, it prevents bugs if the algorithm is later modified to compare in both directions. R1 also used index_b.setdefault(key, []).append(row) instead of V3's explicit if key not in check, producing slightly more idiomatic Python.

R1 streamed File A directly from disk rather than loading it into memory, while V3 still loaded both files fully (though V3 proposed a chunked alternative as a separate function). Total output: roughly 1,000 tokens, tighter than V3.

The verdict

Both models solved the core optimization correctly and arrived at the same O(n) dictionary-lookup approach. The differences are in what else they noticed.

V3 went wider: six issues including null-safety, KeyError protection, and granular exception handling. If you're hardening this code for a pipeline that ingests unpredictable CSV data from external sources, V3's defensive coding saves you follow-up bugs.

R1 went deeper on the logic: it caught the missing-output-file behavior, normalized the pair key for future-proofing, and streamed File A instead of loading it fully. If you're reviewing this code for correctness and long-term maintainability, R1 flagged the issues that would actually bite you in a production data pipeline.

Neither model flagged that the name matching is case-insensitive while the email matching is case-sensitive, an inconsistency baked into the original code that could produce false negatives on email domains with mixed casing. That's the kind of domain-logic question both models leave to the developer.

API integration task

In this test, I gave both models a prompt with four distinct requirements (signature verification, event routing, idempotency, retry logic) that all need to work together in a single function. I wanted to see if each model could hold all four concerns in its head simultaneously, or if it would nail three and quietly botch the fourth.

Prompt

Write a Node.js function that handles incoming Stripe webhook events. It should verify the webhook signature, handle checkout.session.completed and invoice.payment_failed events, implement idempotency using a Redis cache, and retry failed database writes up to three times with exponential backoff.

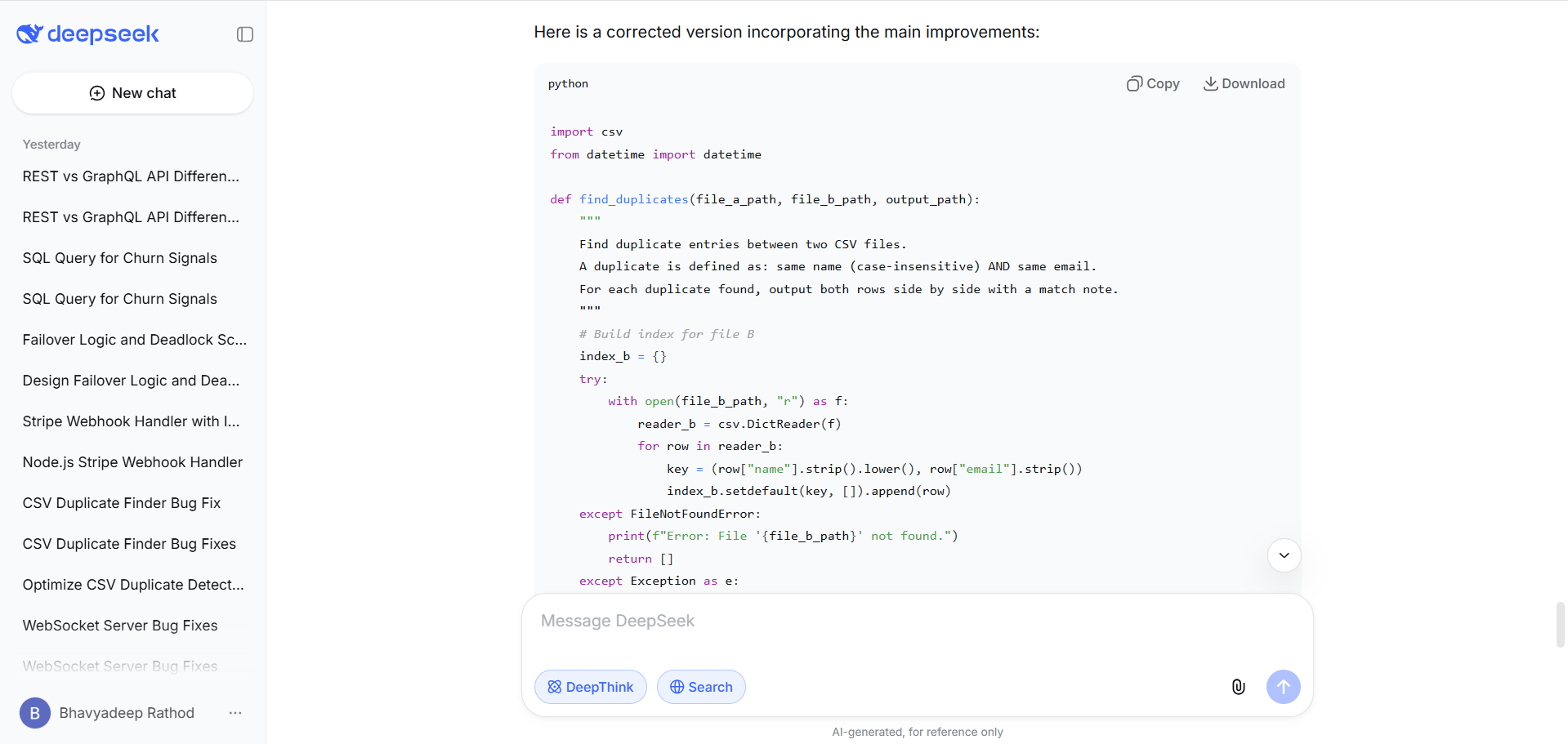

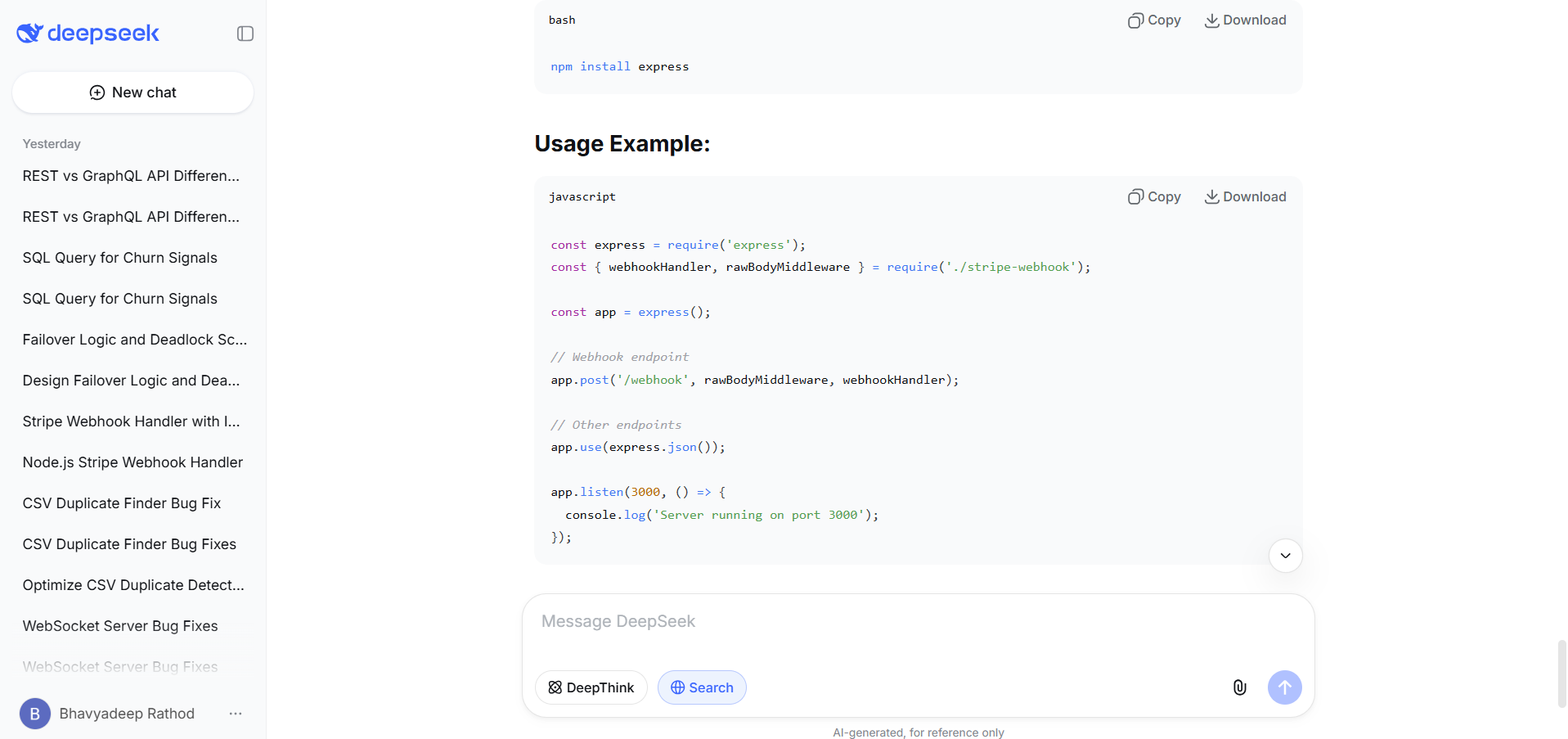

DeepSeek V3 output

V3 delivered a full-featured implementation at roughly 200 lines. The signature verification used stripe.webhooks.constructEvent() correctly. The retry function included exponential backoff with random jitter to prevent thundering herd, a detail the prompt didn't ask for but that matters in production. Idempotency was implemented as a single Redis key per event ID with a 24-hour TTL, checked before processing.

V3 also included pieces the prompt didn't request: a custom rawBodyMiddleware for capturing the raw request body, a Redis retry strategy on connection failures, placeholder database functions with simulated 70% success rates for testing, and a commented-out Express app setup.

The database functions (saveCheckoutSession, handlePaymentFailed) were separate, well-named, but used Math.random() for success simulation rather than actual DB logic. The export included three named functions. Total output: substantial, closer to a starter project than a single function.

DeepSeek R1 output

R1 produced a leaner implementation at roughly 100 lines. The same signature verification, same constructEvent() call. The retry function used clean exponential backoff (100ms, 200ms, 400ms) without jitter. Where R1 diverged significantly was on idempotency. Instead of a single key, R1 used a two-key pattern: a processing lock with a 60-second TTL (acquired with SET NX for atomicity) and a separate processed key set only after success.

This prevents a real concurrency problem: if two webhook deliveries for the same event arrive simultaneously (which Stripe can do), V3's single-key approach would let the first request mark it as processed, then if that request fails mid-processing, the event is permanently marked as handled but never actually completed. R1's lock-then-confirm pattern handles this correctly.

On failure, R1 deletes the processing lock so Stripe's retry can attempt again. On success, it deletes the lock and sets the permanent processed marker. R1 also noted that Express requires express.raw() middleware for the webhook route rather than writing custom middleware. The database operations were left as commented pseudocode (// await db.orders.updateOne(...)) rather than simulated functions.

The verdict

V3 produced more code, more polish, and more supporting infrastructure. If you're a developer bootstrapping a new Stripe integration and want a working starter file you can drop into a project, V3 gets you there faster, jitter included.

R1 produced less code but better architecture on the critical piece: idempotency. The two-key lock-then-confirm pattern is how production payment systems actually handle concurrent webhook delivery. V3's single-key approach has a real failure mode that would surface under load. For a developer building payment infrastructure where duplicate processing or lost events costs money, R1's implementation is the one you'd want to ship.

Multi-step reasoning task

In this task I've sat through enough architecture reviews to know that the value isn't in the happy path. It's in how many failure modes you can identify before they hit production. So I gave both models a distributed system prompt with no single correct answer and watched how far each one went in thinking through what could go wrong.

Prompt

DeepSeek V3 output

V3 structured its response as a six-section walkthrough: system overview, normal flow, failover logic, deadlock scenarios, additional considerations, and conclusion. The failover design was solid: health checks on B, priority queuing at C with high/low buckets, and pseudocode for A's routing logic.

V3 identified four potential deadlock or deadlock-adjacent scenarios: circular health-check dependencies (B waiting on C, C waiting on A), priority starvation causing retry storms that resemble livelock, shared distributed lock contention across A/B/C, and connection pool exhaustion from hanging health checks to an unhealthy B.

V3 also raised practical concerns the prompt didn't ask for: idempotency for retried requests during failover, failback logic when B recovers, and a cap on high-priority processing to protect free-tier minimum service. The analysis was thorough but kept the deadlock scenarios at a high level without proposing specific mitigations for each.

DeepSeek R1 output

R1 organized its response into two clear sections: failover logic design and deadlock scenarios, with numbered sub-sections under each. The failover design included the same health monitoring and priority queuing approach but added a circuit-breaker pattern with configurable failure thresholds and a recovery handler that gradually shifts traffic back to B rather than switching all at once. Where R1 pulled ahead was on deadlock identification.

R1 found five distinct scenarios, each with a specific mechanism and a named mitigation:

Priority inversion at C: A free-tier request holds a database lock, gets preempted by a premium request that needs the same lock. The premium request blocks indefinitely because the low-priority holder never gets CPU time to release it. R1 named this as classic priority inversion and proposed priority inheritance in the lock manager.

Distributed coordination deadlock: If A runs as a cluster, coordinating circuit-breaker state via a distributed lock creates a failure mode where the lock holder crashes and the lock stays held. R1 proposed lease-based locks with TTL and watchdog renewal.

Failover transition deadlock: A two-phase drain when switching back to B creates circular waiting: A waits for C to confirm an empty queue, C waits for A's acknowledgment. R1 proposed async, eventually-consistent routing table updates instead.

Resource starvation (livelock): High premium volume during failover starves free-tier requests completely. R1 proposed aging in the priority queue and a hard cap on high-priority processing time.

Cascading failure with circular dependency: If A depends on C for authentication tokens (stored in a DB accessed via C), and C is overloaded with premium traffic from A, a circular dependency forms. R1 proposed dependency isolation and separate connection pools.

The verdict

V3 produced a more practical response with pseudocode and operational concerns (idempotency, failback, rate caps) that a senior engineer would appreciate during implementation. Its deadlock analysis covered the right territory but stayed surface-level.

R1 went significantly deeper on the core question. Five deadlock scenarios, each with a named mechanism (priority inversion, circular wait, livelock), a concrete trigger condition, and a specific mitigation strategy. For a system design interview or an architecture review where you need to demonstrate that you've thought through failure modes exhaustively, R1's output is the one you'd present. V3's output is the one you'd start coding from.

Data analysis task

Business questions are rarely precise enough to translate directly into SQL. "Users showing churn signals" leaves plenty of room for interpretation. I gave both models a deliberately open-ended prompt to see how each one handles ambiguity: does it make a choice and commit, ask for clarification, or try to cover multiple interpretations at once?

Prompt

DeepSeek V3 output

V3 built an extensive query using six CTEs. For session drop detection, it used LAG() over monthly session counts across a three-month lookback window, comparing each month to its predecessor. For purchase analysis, it calculated average monthly purchases over a six-month baseline. Support tickets were counted over the last 14 days with a HAVING clause. V3 then combined all three signals in a combined_signals CTE that flags each signal as a boolean per user, counts how many signals each user exhibits, and joins back to the users table for context data (email, username, account age).

The final output returns users with at least one churn signal, ordered by signal count descending. V3 also included correlated subqueries in the SELECT to pull recent session counts, purchase counts, and ticket counts for validation. The query was well-commented, with a note about assumptions on column names and suggested lookback period adjustments.

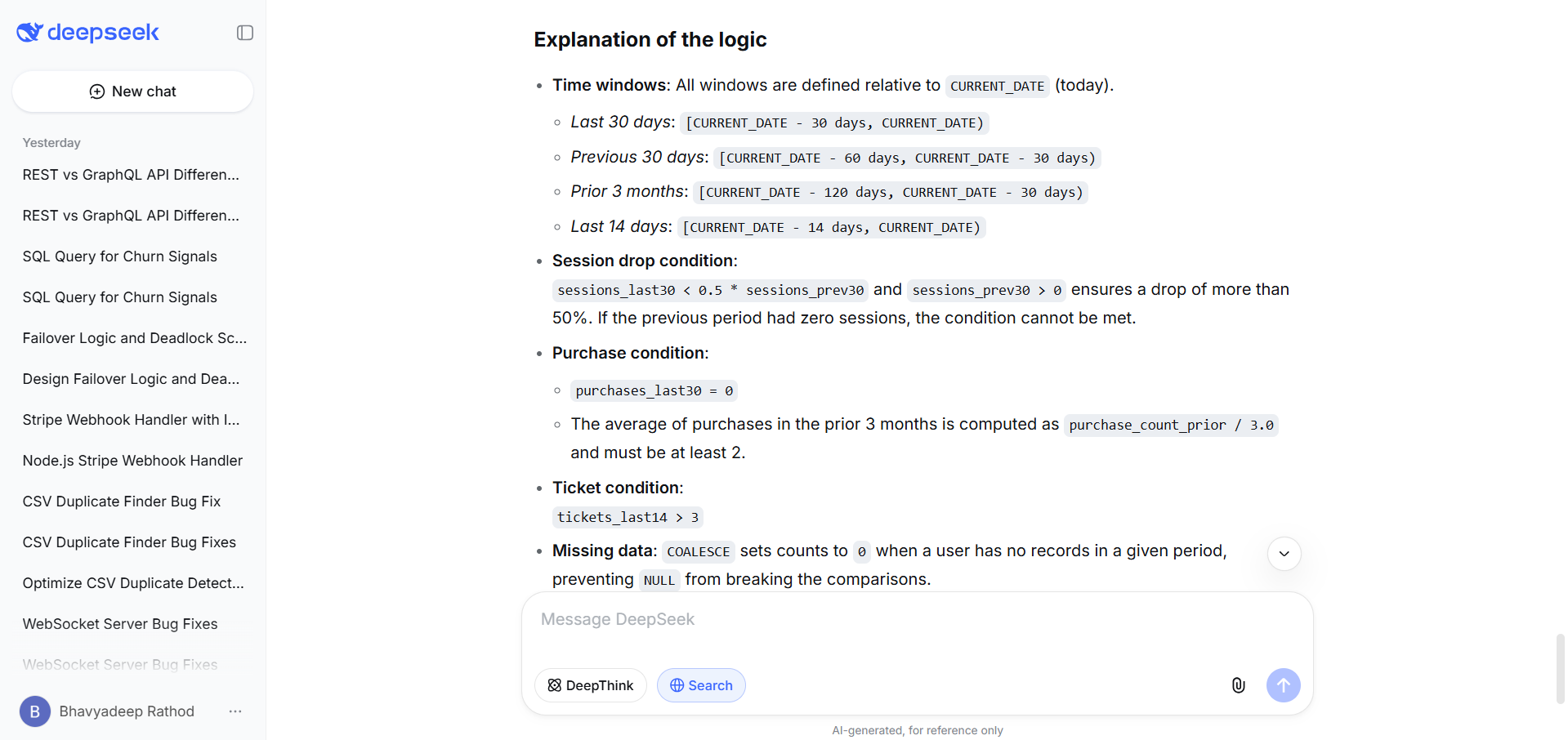

DeepSeek R1 output

R1 produced a leaner query with five CTEs and a tighter structure. For session drop detection, instead of using LAG() across monthly buckets, R1 compared two fixed 30-day windows: the last 30 days versus days 31-60. This is a simpler approach that directly answers "did frequency drop by 50% compared to last month" without needing multiple months of data. For purchase history, R1 used a three-month prior window (days 31-120) and computed the average as purchase_count_prior / 3.0 >= 2, which is arithmetically clean.

The critical design difference: R1's WHERE clause requires all three conditions to be true simultaneously using AND, meaning it only returns users exhibiting all three churn signals. V3's query returns users with any one or more signals. R1 also used COALESCE consistently throughout to handle NULL values from LEFT JOINs, preventing broken comparisons on users with no activity records. The explanation section explicitly called out the edge case where a user with zero previous sessions cannot satisfy the drop condition, and noted that indexes on (user_id, created_at) would improve performance.

The verdict

These two outputs reflect a genuine analytical disagreement, not just a stylistic one.

V3 interpreted "users showing churn signals" as users exhibiting any signal, then scored and ranked them by how many signals they trigger. This is the exploratory approach: cast a wide net, surface everyone at risk, let the business team prioritize. V3 also provided richer output with contextual columns for manual review.

R1 interpreted the prompt as requiring all three conditions simultaneously. This is the stricter, more conservative approach: only flag users who are definitively churning across every dimension. R1's query is also more performant because it filters aggressively and avoids correlated subqueries in the SELECT.

Which interpretation is correct depends on how the data team intends to act on the results. For an early-warning dashboard where you want to catch users at the first sign of trouble, V3's approach is better. For a targeted retention campaign where you only want to invest resources in users who are clearly leaving, R1's approach is more precise. A senior data scientist would likely ask for clarification before choosing, and neither model did that. But V3's ranking-based output is arguably more useful as a first pass because it includes the strict-match users (signal count = 3) alongside partial matches, giving the team flexibility R1's query doesn't.

General task

Not every task needs deep reasoning. I closed the test series with a simple recall question to see what happens when R1's chain-of-thought architecture has nothing meaningful to chew on. Does it still add value, or does it just burn tokens arriving at the same answer V3 delivers in three seconds?

Prompt

DeepSeek V3 output

V3 produced a two-paragraph summary followed by a four-point bullet list. The first paragraph covered REST (resource-centric, HTTP verbs, over/under-fetching tradeoffs, native HTTP caching). The second covered GraphQL (single endpoint, client-specified data shapes, schema-based introspection).

The bullet list compared data fetching, versioning, caching, and complexity, and included a practical performance warning about N+1 queries and DataLoader. Concise, accurate, and delivered fast.

DeepSeek R1 output

R1 structured its response as two parallel bullet lists: one for REST, one for GraphQL, with matching categories (endpoint structure, data fetching, schema, versioning, caching, operation). Each point was a one-line comparison.

R1 explicitly noted the schema difference (implicit via media types for REST vs. strongly typed SDL for GraphQL), which V3 mentioned but didn't contrast as sharply. Clean and scannable, but no prose tying the points together.

The verdict

Both responses are accurate, concise, and would serve the target audience well. V3 added a practical callout (N+1 queries, DataLoader) that R1 didn't, which gives it slightly more depth for developers who would actually implement GraphQL. R1's parallel structure is easier to scan. For a recall-and-synthesis task like this, neither model's reasoning architecture gives it a meaningful advantage.

V3 edges ahead on the inclusion of the N+1 gotcha, which is the kind of operational detail this audience cares about. This is the type of task where V3's speed advantage matters most: you get a comparable answer in less time and fewer tokens.

DeepSeek R1 vs V3: Testing scorecard across all 7 tasks

Test | V3 strength | R1 strength | Winner |

1. Coding (JSON flattener) | Full utility file with tests, type hints, JSON string helper | Tighter function, cleaner abstraction, flagged empty-structure edge case | V3 if bootstrapping a new project. R1 if dropping into an existing codebase. |

2. Debugging (WebSocket server) | Wider coverage (5 bugs), full corrected server delivered | Caught operationally critical bugs: narrow exception handling crashing broadcast, NameError in finally block | R1 for triaging production incidents. V3 for long-term refactoring. |

3. Refactoring (CSV duplicates) | Six issues including null-safety, KeyError protection, granular exceptions | Caught missing-output-file behavior, normalized pair keys, streamed File A from disk | V3 for hardening against unpredictable input. R1 for logic correctness in data pipelines. |

4. API integration (Stripe webhooks) | Complete starter project with jitter, raw body middleware, simulated DB functions | Two-key lock-then-confirm idempotency that handles concurrent delivery correctly | R1. V3's single-key idempotency fails under concurrent webhook delivery. |

5. System design (server failover) | Practical pseudocode, operational concerns (idempotency, failback, rate caps) | Five named deadlock scenarios with specific mitigations | R1. Deeper, more exhaustive failure analysis. |

6. Data analysis (SQL churn query) | OR-based signal ranking with contextual columns. Flexible for exploration. | AND-based strict filtering. Leaner, more performant query. | V3 for early-warning dashboards. R1 for targeted retention campaigns. |

7. General (REST vs GraphQL) | Included N+1 query warning and DataLoader mention | Clean parallel structure, sharper schema contrast | V3. Practical operational detail this audience acts on. |

The pattern across all seven tests: R1 consistently wins on tasks requiring deep reasoning, correctness under edge cases, and exhaustive failure analysis. V3 consistently wins on breadth, speed, and practical completeness. For the tasks in between, the winner depends on what you're optimizing for.

DeepSeek V3 vs R1: Pros and cons

The feature comparison and real-prompt tests above show where each model leads on specific tasks.

The tables below distill that into a quick-reference format: what you gain and what you give up with each model, so you can weigh the tradeoffs against your own workflow.

What are the pros and cons of DeepSeek V3?

Pros | Cons |

Extremely cost-effective ($0.27/$1.10 per 1M tokens) | Weaker on multi-step logical reasoning |

128K context window for long-document processing | Doesn't self-verify answers |

Fast response times, lower latency | Scores lower on math/reasoning benchmarks |

Strong coding performance across multiple languages | Can miss edge cases in complex debugging |

MIT licensed, 671B MoE architecture | Less rigorous on proof-level tasks |

What are the pros and cons of DeepSeek R1?

Pros | Cons |

Best-in-class math reasoning (97.3% MATH-500) | Higher API cost ($0.55/$2.19 per 1M tokens) |

Self-verifying chain-of-thought reduces hallucinations | Slower response times due to reasoning overhead |

Matches OpenAI o1 on AIME at a fraction of the cost | Verbose output requires parsing CoT tokens |

MIT licensed, fully open-source, distillable | 64K context window (smaller than V3's 128K) |

Excels at debugging complex async/concurrent code | Overkill for simple recall or generation tasks |

DeepSeek R1 vs V3: Pricing and cost efficiency

V3 is roughly 50% cheaper than R1 per million tokens, and the gap widens in practice because R1 generates additional chain-of-thought tokens that inflate output costs.

DeepSeek R1 vs V3 API pricing (as of March 2026)

Pricing dimension | DeepSeek R1 | DeepSeek V3 |

Input (cache miss) | $0.55 / 1M tokens | $0.27 / 1M tokens |

Input (cache hit) | $0.14 / 1M tokens | $0.07 / 1M tokens |

Output | $2.19 / 1M tokens | $1.10 / 1M tokens |

Context window | 64K | 128K |

Effective cost on reasoning tasks | 3-5x higher (due to CoT tokens) | Baseline |

For context, R1 is still roughly 96% cheaper than OpenAI's o1 series on output tokens. Both DeepSeek models offer cache discounts of approximately 90% on repeated input prefixes, which can significantly reduce costs for applications with shared system prompts or document templates.

The practical cost calculus: if your pipeline runs 1,000 reasoning-heavy queries per day, R1 might cost 3-5x more than V3. For a mixed workload where only 10-15% of queries genuinely need deep reasoning, routing most traffic to V3 and reserving R1 for complex tasks is the most cost-efficient approach.

When should you choose DeepSeek R1?

R1 earns its cost premium when reasoning depth directly impacts the quality of your output. Pick R1 when:

You're solving multi-step math or logic problems where partial credit doesn't exist. R1's 97.3% on MATH-500 isn't a marginal improvement over V3; it's a different capability tier.

You're debugging complex code, especially async, concurrent, or distributed systems.

R1's step-by-step trace catches race conditions and deadlocks that V3 misses.

You need auditable reasoning. R1's chain-of-thought output lets you verify how the model arrived at its answer, not just what it answered. For regulated industries or safety-critical applications, this transparency has real value.

You're building AI agents that need to plan multi-step actions. The same reasoning architecture that solves math proofs also helps R1 decompose complex workflows into sequential actions.

Accuracy matters more than speed. If a wrong answer costs you more than a slow answer, R1 is the right call.

When should you choose DeepSeek V3?

V3 is the default for most production workloads.

Pick V3 when:

You need fast, scalable responses across diverse task types. Code generation, summarization, translation, content writing, classification, extraction: V3 handles all of these well without the overhead of chain-of-thought reasoning.

You're processing long documents. V3's 128K context window is twice R1's 64K API limit, which makes a real difference when analyzing lengthy codebases, legal documents, or research papers.

You're optimizing for cost at scale. At $0.27/$1.10 per million tokens, V3 is one of the cheapest frontier-class models available. For high-volume API calls, the savings add up fast.

Your task doesn't require multi-step deduction. If the answer comes from pattern matching, recall, or straightforward generation rather than logical derivation, V3 will match R1's accuracy at half the cost and double the speed.

You're building chatbots, content tools, or coding assistants for everyday use. V3's clean, direct output style is easier to integrate into user-facing products than R1's verbose reasoning traces.

Vibe coding with Emergent: skip the model comparison, build the app

Everything above assumes you're integrating a raw model into your own pipeline: choosing between R1 and V3, managing API keys, parsing outputs, wiring up frontends to backends yourself. That's the right approach if you need fine-grained control over inference. But if your actual goal is a working application, vibe coding skips that entire layer. You describe what you want in plain language, and an AI agent writes the code, tests it, fixes errors, and deploys it. No model selection. No infrastructure setup.

Emergent is the platform built around this approach. It orchestrates AI agents that handle full-stack development end to end: React frontends, Node.js backends, MongoDB databases, Stripe payments, OAuth authentication, GitHub version control, and one-click deployment with custom domains.

Where R1 would help you reason through a Stripe webhook implementation (as we saw in Test 4), Emergent builds the entire payment flow for you, tests it, and ships it. The Universal LLM Key gives your apps access to GPT-5, Claude, Gemini, and DeepSeek models through a single billing system, so you're not locked into any one provider. Plans start at $20/month.

If you’re still trying to figure out which model to plug into your AI coding workflow, you might be asking the wrong question. Models can generate answers, but they won’t take you all the way to a working product. That part is still on you. What actually moves things forward is a system that helps you go from idea to build without constant switching, stitching, and second-guessing. Stop choosing models. Start shipping - build for free.

Final Verdict

Choosing the right tool ultimately comes down to what you’re trying to optimize for. Some workflows demand deep reasoning and precision, others need speed and efficiency at scale, and in many cases, the real goal is simply to ship something that works. Here’s how to think about each option.

For reasoning, math, and complex debugging

Go with DeepSeek R1.

Its reinforcement learning approach gives it a clear edge in step by step logical reasoning. Benchmarks like 97.3% on MATH-500 and near parity with OpenAI o1 on AIME support that. If your work involves solving hard problems where precision is critical, R1 is the right choice.

For speed, scale, and everyday tasks

Choose DeepSeek V3.

You get 80 to 90% of R1’s capability at roughly half the cost and significantly faster speed. It works well for code generation, summarization, translation, and general API workloads. With a 128K context window and lower latency, V3 fits naturally into production environments.

For building complete applications

Skip the model debate and use Emergent.

If you are evaluating models for your AI coding workflow, you might be asking the wrong question. Models can generate outputs, but turning those outputs into a working product still requires stitching together tools, managing context, and handling execution. Emergent removes that friction by combining AI powered code generation with a full development environment, so you can go from idea to shipped product without the usual overhead. Stop choosing models. Start shipping. Build for free.

FAQs

1. What is the main difference between DeepSeek R1 and V3?

R1 is a reasoning model trained through reinforcement learning to generate chain-of-thought traces before answering. V3 is a general-purpose model optimized for speed and breadth across diverse tasks. R1 excels at multi-step logic and math. V3 excels at fast, cost-efficient code generation and general-purpose AI tasks.