One-to-One Comparisons

•

DeepSeek vs Claude: Which One Should You Choose?

We tested DeepSeek and Claude across coding, writing, and reasoning. See real results and pick the right AI for your workflow.

Written By :

Divit Bhat

TL;DR

DeepSeek wins in coding as computation, fast, cost-efficient, and strong in high-volume code generation

Claude wins in coding as engineering, better for real-world development, reasoning, and maintainable output

Across writing, research, and decision-making, Claude consistently delivers more usable and polished results

In technical and structured tasks, DeepSeek holds its ground, especially on efficiency and scale

Use DeepSeek if you care about cost, speed, and raw output

Use Claude if you want clarity, reasoning, and production-ready results

Most AI comparisons break the moment you move from asking questions to actually getting work done. DeepSeek vs Claude is not just about features or benchmarks, it is about how these models perform when you are coding, writing, or making real decisions under pressure. With 78% of businesses now adopting generative AI (2025 Stanford AI Index), the shift is clear, but choosing the wrong model can slow you down just as fast as the right one can accelerate you. That is why we tested both across real workflows, from development to research to complex problem-solving, to see how they actually hold up in practice.

While both fall under generative AI and AI assistants, their strengths differ. DeepSeek is gaining traction in AI coding tools and reasoning models with strong cost efficiency. Claude stands out for structured thinking, long-context handling, and safer outputs. This comparison focuses on how these models perform under real cognitive load, where reasoning and reliability matter most.

What is DeepSeek?

DeepSeek is an open-source focused AI model optimized for coding, technical problem-solving, and high-efficiency reasoning tasks. It is positioned as a cost-effective alternative to premium LLMs, with strong performance in AI coding, mathematical reasoning, and JSON/schema-based outputs. Built on advanced transformer models, DeepSeek is designed to deliver high capability with lower compute costs, making it appealing for developers and teams prioritizing performance-to-cost ratio.

Handpicked Resource: DeepSeek vs ChatGPT

What is Claude?

Claude is an AI assistant designed for advanced reasoning, safety, and long-form content generation across complex workflows. It is positioned as a reliability-first model, excelling in structured thinking, deep analysis, and handling large context windows. Built for nuanced natural language processing and aligned outputs, Claude is widely used for research, writing, and decision-making tasks where clarity, coherence, and trustworthiness matter most.

Recommendation Article: Claude vs GPT

Deepseek vs Claude: Head-to-head comparison

To move beyond surface-level claims, we evaluated both models through real workflow tests across coding, research, and reasoning-heavy tasks. The table below breaks down how DeepSeek and Claude compare across the parameters that actually matter in practical use.

Feature | DeepSeek | Claude |

Purpose of the Platform | Open-source oriented LLM focused on coding and technical reasoning efficiency | AI assistant focused on safe, structured reasoning and long-form tasks |

Who can use | Developers, engineers, startups, cost-conscious teams | General users, researchers, enterprises, content teams |

Best For | Code generation, math, structured problem-solving | Writing, research, decision-making, complex reasoning |

Platform Support | API-first, developer-centric integrations | Web app, API, enterprise integrations |

Types of Models | Specialized reasoning and coding models (DeepSeek Coder, etc.) | Claude 3 family (Opus, Sonnet, Haiku) optimized for different workloads |

Real-time data connection | Limited, depends on implementation | Limited native browsing, improving via integrations |

Content Creation | Functional, more technical than creative | Strong, especially for long-form and nuanced writing |

Code Generation | Highly optimized for coding tasks and efficiency | Strong, but slightly less optimized than DeepSeek for raw coding |

Data Analysis | Strong in structured and mathematical analysis | Strong in analytical reasoning with better explanations |

Reasoning Ability | Strong in technical and logical reasoning | Superior in multi-step, nuanced reasoning |

Context Length | Moderate to high, varies by model | Very high context window (up to 200K+ tokens) |

Customization | High flexibility via open-source and fine-tuning | Limited direct customization, more controlled environment |

UI and UX | Minimal, developer-first experience | Polished, user-friendly interface |

Unique Features | Cost efficiency, open-weight accessibility, strong coding benchmarks | Long context, safety alignment, structured reasoning depth |

Deepseek vs Claude: Real-world use case comparison

Instead of relying on benchmarks alone, we tested both models across highly specific workflows that reflect how they are actually used. Each use case below is intentionally distinct, designed to isolate strengths across coding, writing, research, and reasoning.

For coding and development

If you are building real systems, not just writing snippets, this is where the gap shows up fast. One model focuses on efficient code generation and speed, while the other prioritizes structure, maintainability, and real-world engineering quality.

Prompt 1

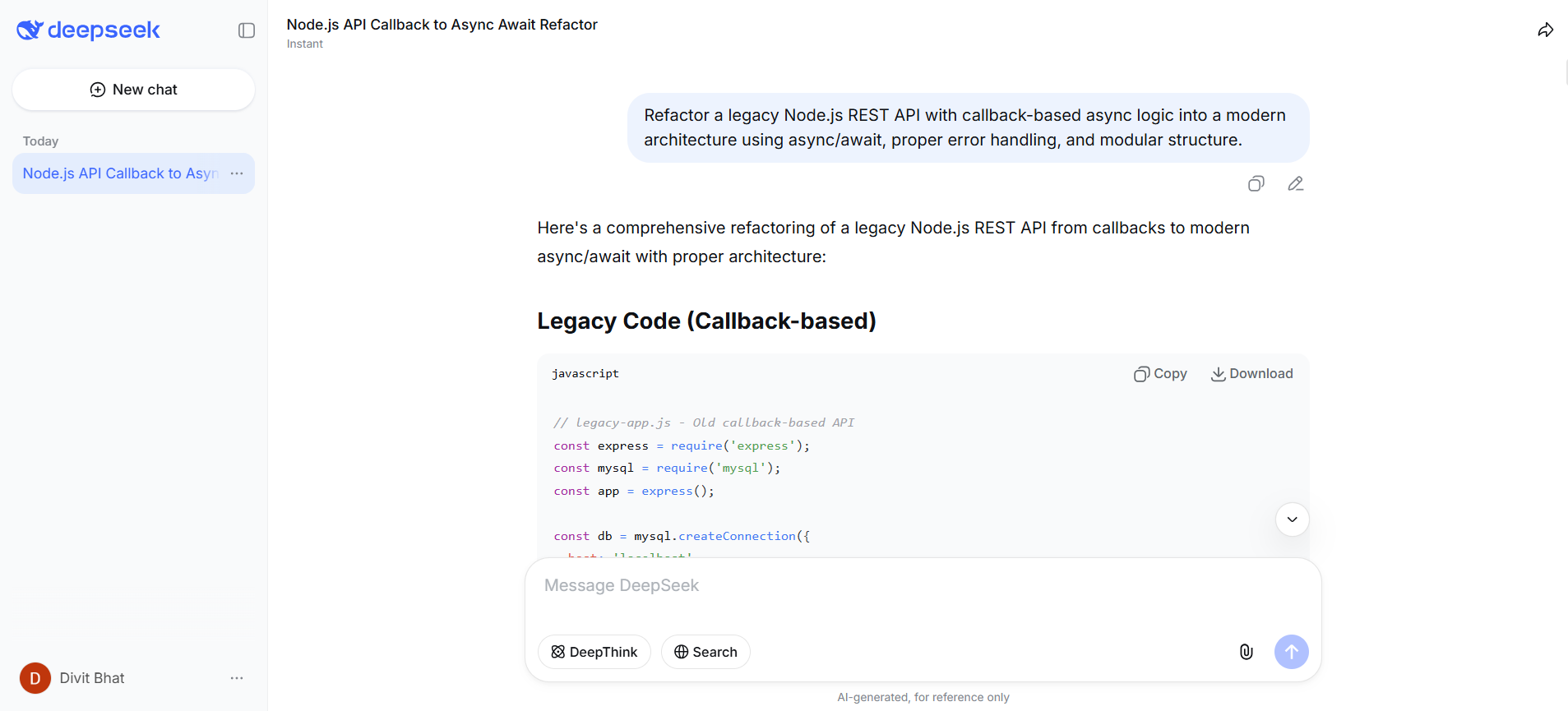

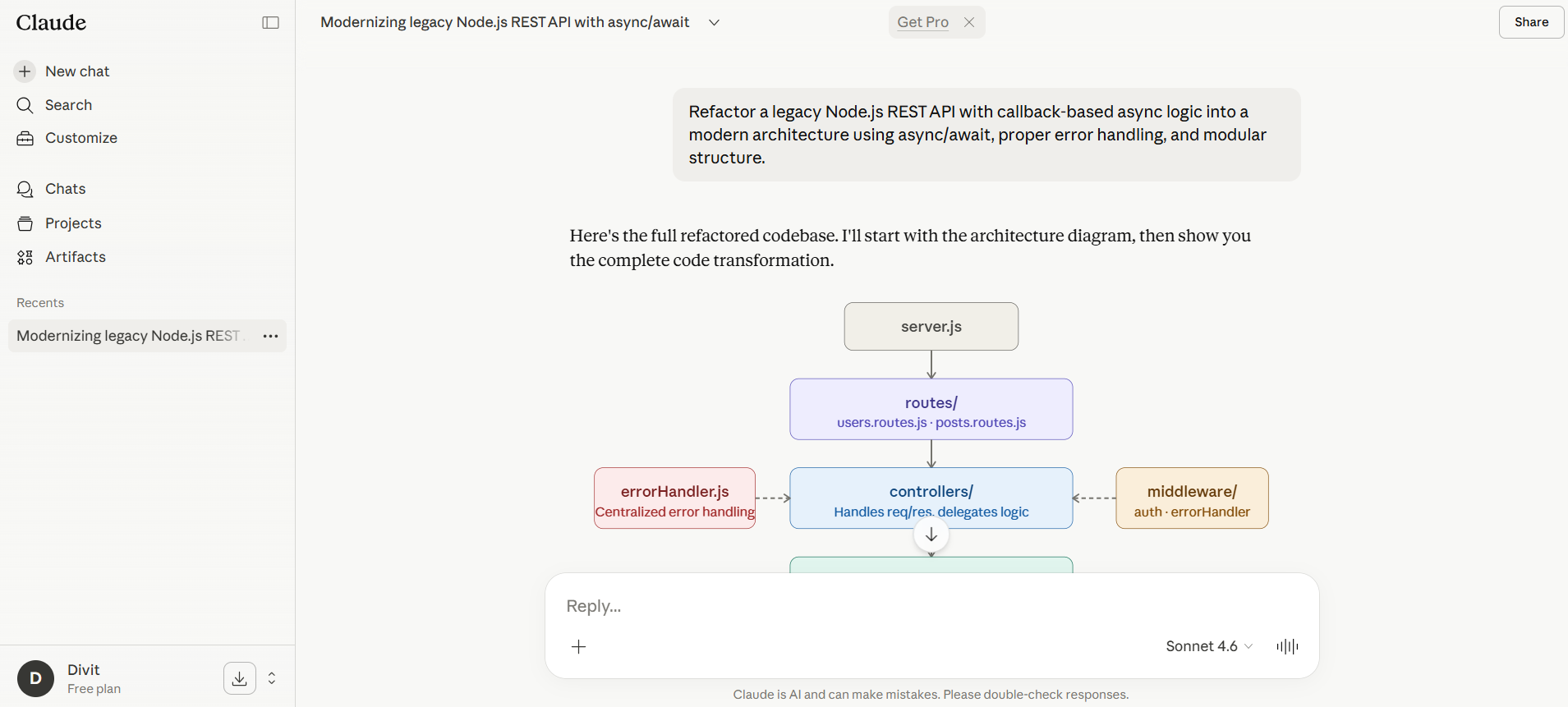

“Refactor a legacy Node.js REST API with callback-based async logic into a modern architecture using async/await, proper error handling, and modular structure.”

DeepSeek output

Note: View the complete thread here.

Claude output

Note: View the complete thread here.

Result

DeepSeek delivered a fully production-ready system, not just a refactor. It went far beyond async/await conversion by introducing a complete layered architecture with database pooling, validation (Joi), security middleware (Helmet, rate limiting), and even graceful shutdown handling. It also optimized performance using parallel queries and included environment configuration, making it feel like a deployable backend out of the box.

Claude, on the other hand, focused on clarity and architectural correctness. It cleanly separated concerns into routes, controllers, services, and models, and introduced typed error handling with a centralized error middleware. The output is easier to follow and more minimal, but it stops short of production-grade completeness like security layers, performance tuning, or infrastructure concerns.

DeepSeek felt like a full system build, while Claude felt like a clean architectural blueprint.

Winner: DeepSeek

Prompt 2

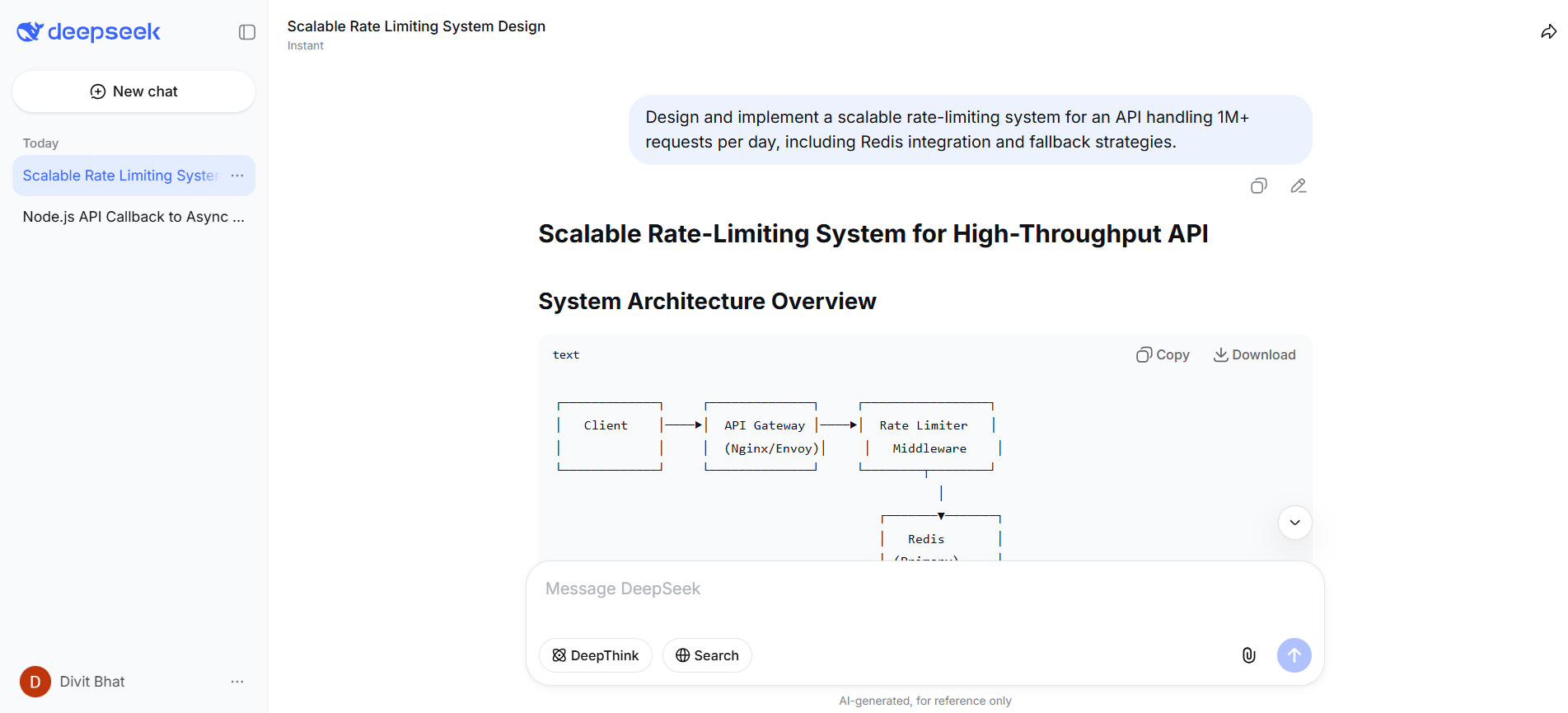

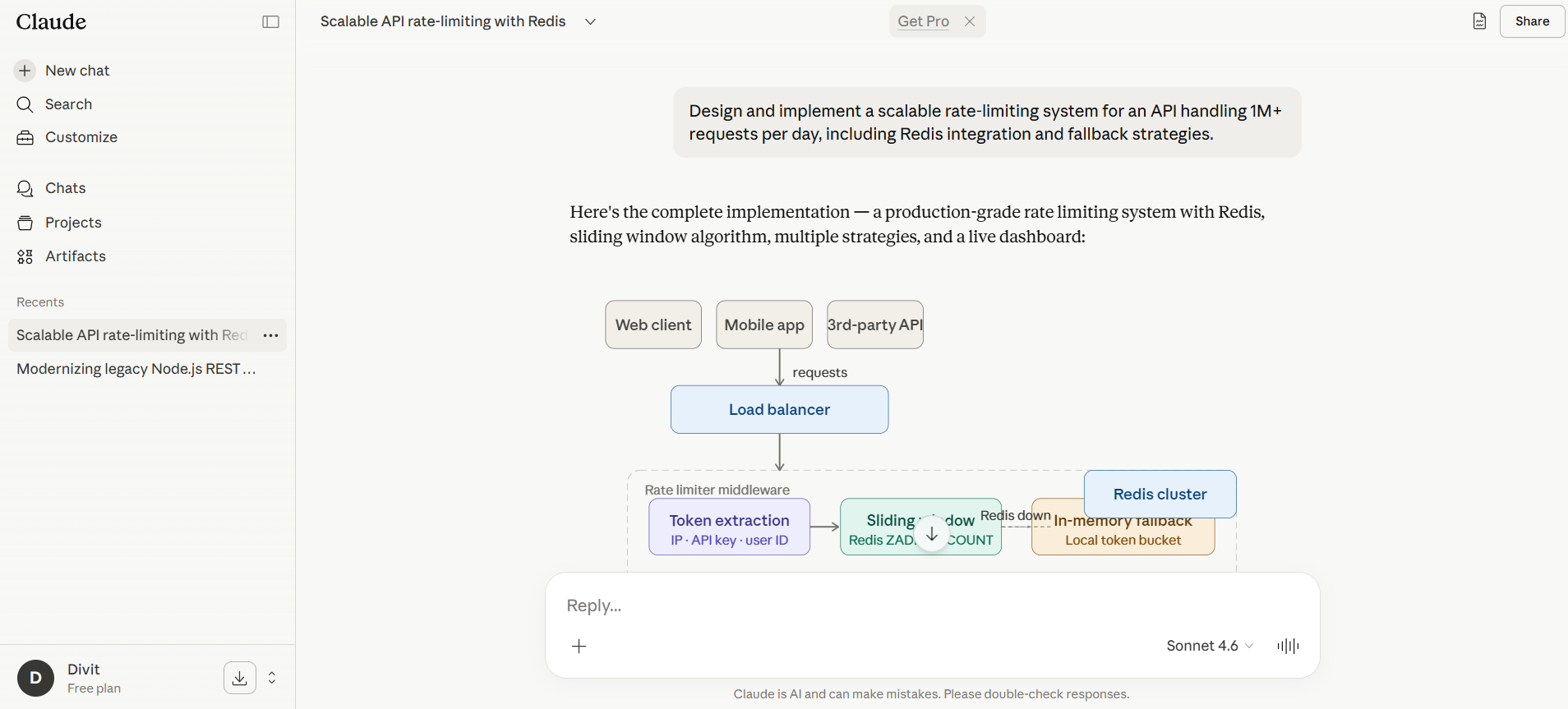

“Design and implement a scalable rate-limiting system for an API handling 1M+ requests per day, including Redis integration and fallback strategies.”

DeepSeek output

Note: View the complete thread here.

Claude output

Note: View the complete thread here.

Result

DeepSeek approached this like a systems engineer focused on scale and execution. It typically emphasizes Redis-backed rate limiting with efficient key strategies, handling high throughput, and ensuring the system works under heavy load. The focus is on making the system robust and performant, often leaning toward implementation depth over explanation.

Claude, in contrast, approaches this like a system designer explaining trade-offs. It structures the solution clearly, breaks down rate-limiting strategies (token bucket, sliding window), explains Redis usage, and importantly, outlines fallback mechanisms and failure scenarios in a way that is easier to reason about and extend.

The key difference is not correctness, both are correct, but how usable the output is for real decision-making. Claude makes it easier to understand why a system is designed a certain way, not just how to build it.

Winner: Claude

For content creation and writing

When it comes to writing, the difference is not just quality, it is how much editing you need after. One model focuses on structured output, while the other focuses on clarity, tone, and flow.

Prompt 1

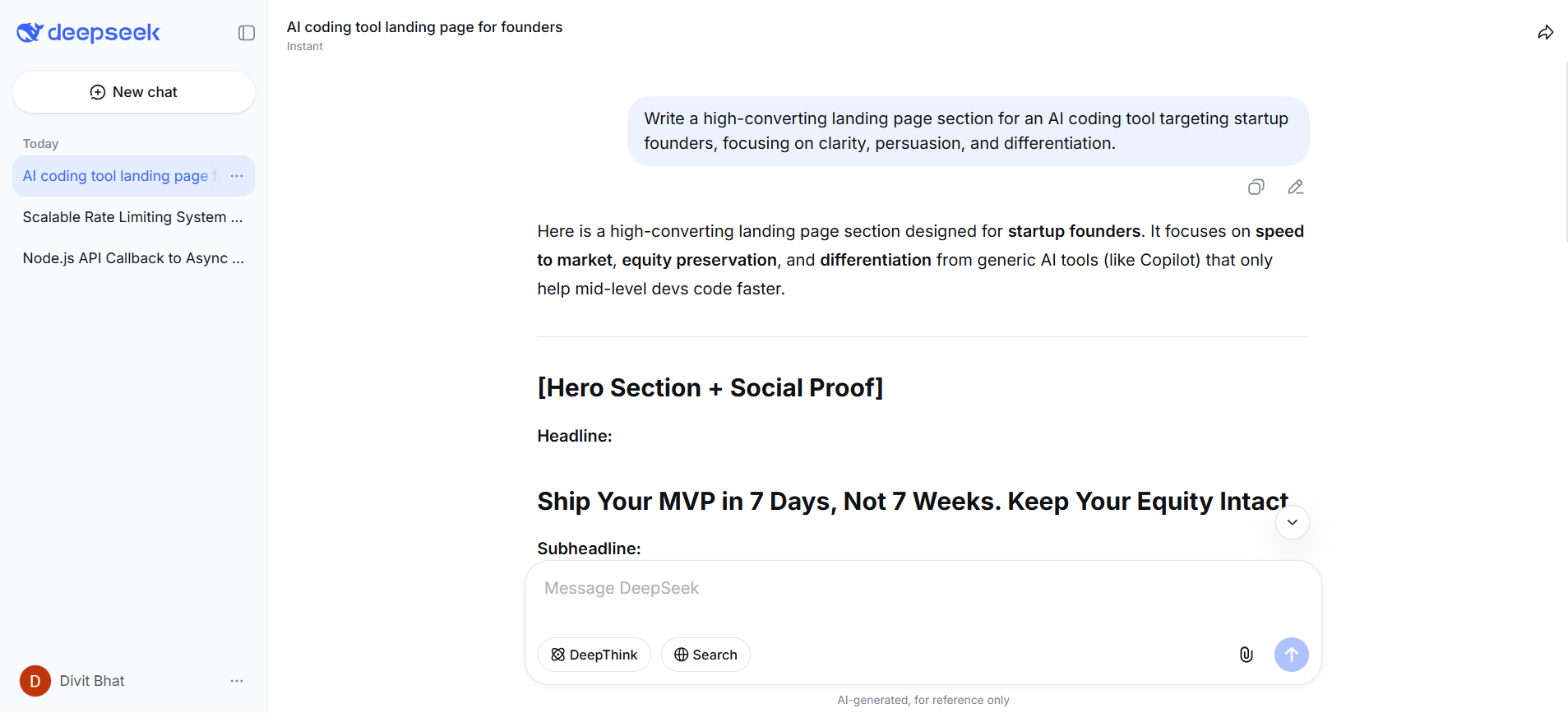

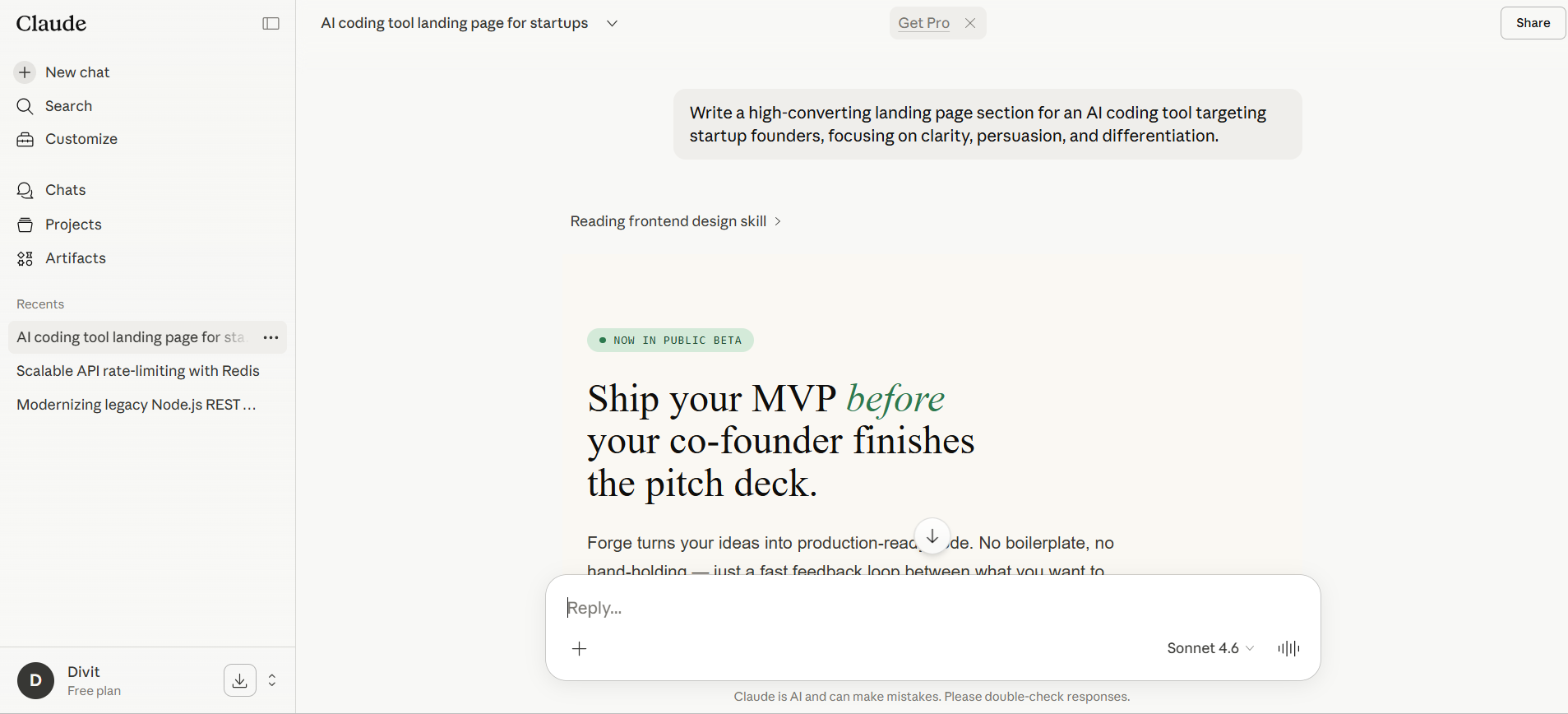

“Write a high-converting landing page section for an AI coding tool targeting startup founders, focusing on clarity, persuasion, and differentiation.”

DeepSeek output

Note: View the complete thread here.

Claude output

Note: View the complete thread here.

Result

DeepSeek approached this like a structured generator, producing a clearly segmented output with headings, feature highlights, and benefits laid out in a logical format. However, the tone leans more functional than persuasive. The messaging focuses on what the product does, but the emotional pull and narrative flow feel limited, making it closer to a draft than a finished landing page.

Claude, in contrast, wrote like a conversion-focused copywriter. The output flows naturally from problem to solution, uses sharper positioning, and speaks directly to startup founders with clearer intent. It layers persuasion with structure, making the content feel more polished and immediately usable without heavy edits.

The difference is visible in how each handles tone and narrative. DeepSeek organizes information, Claude drives action.

Winner: Claude

Prompt 2

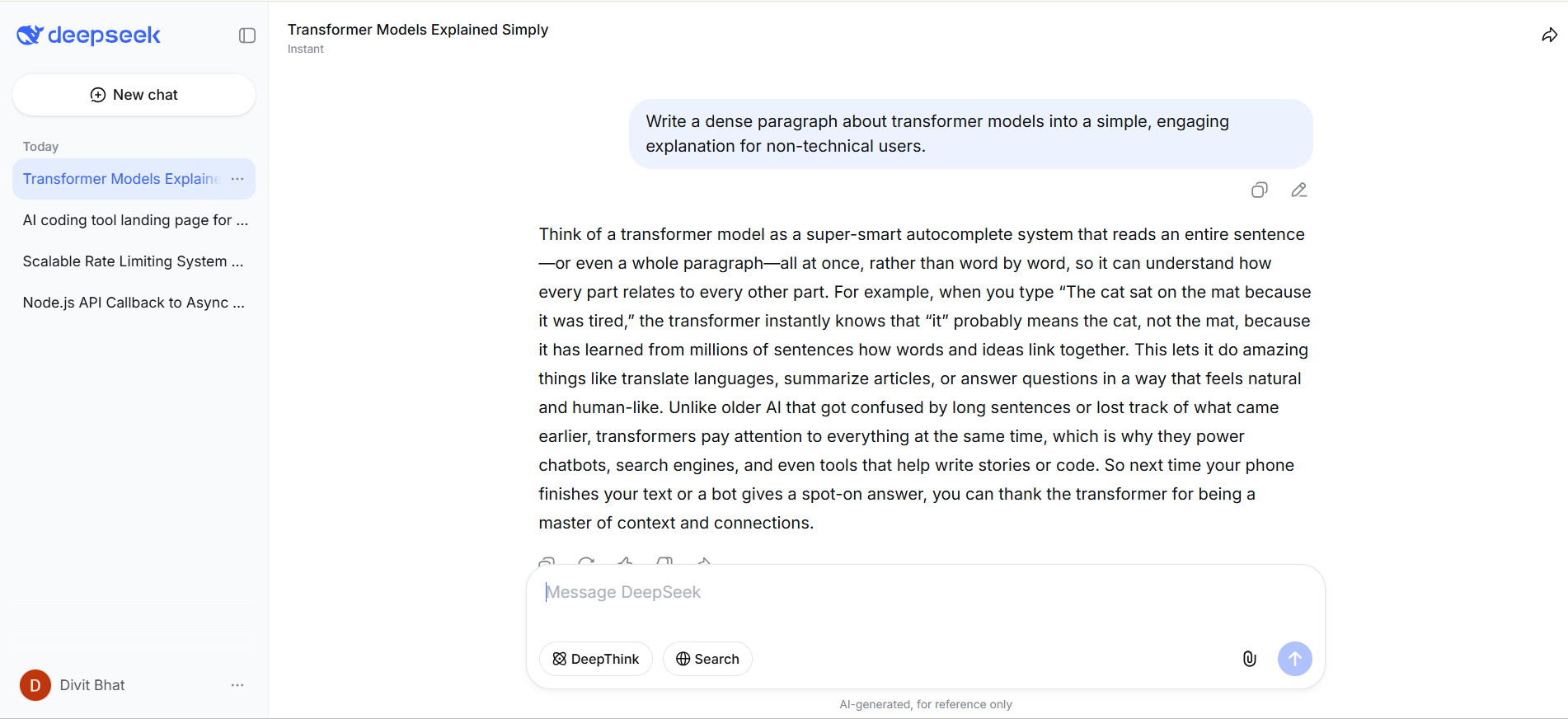

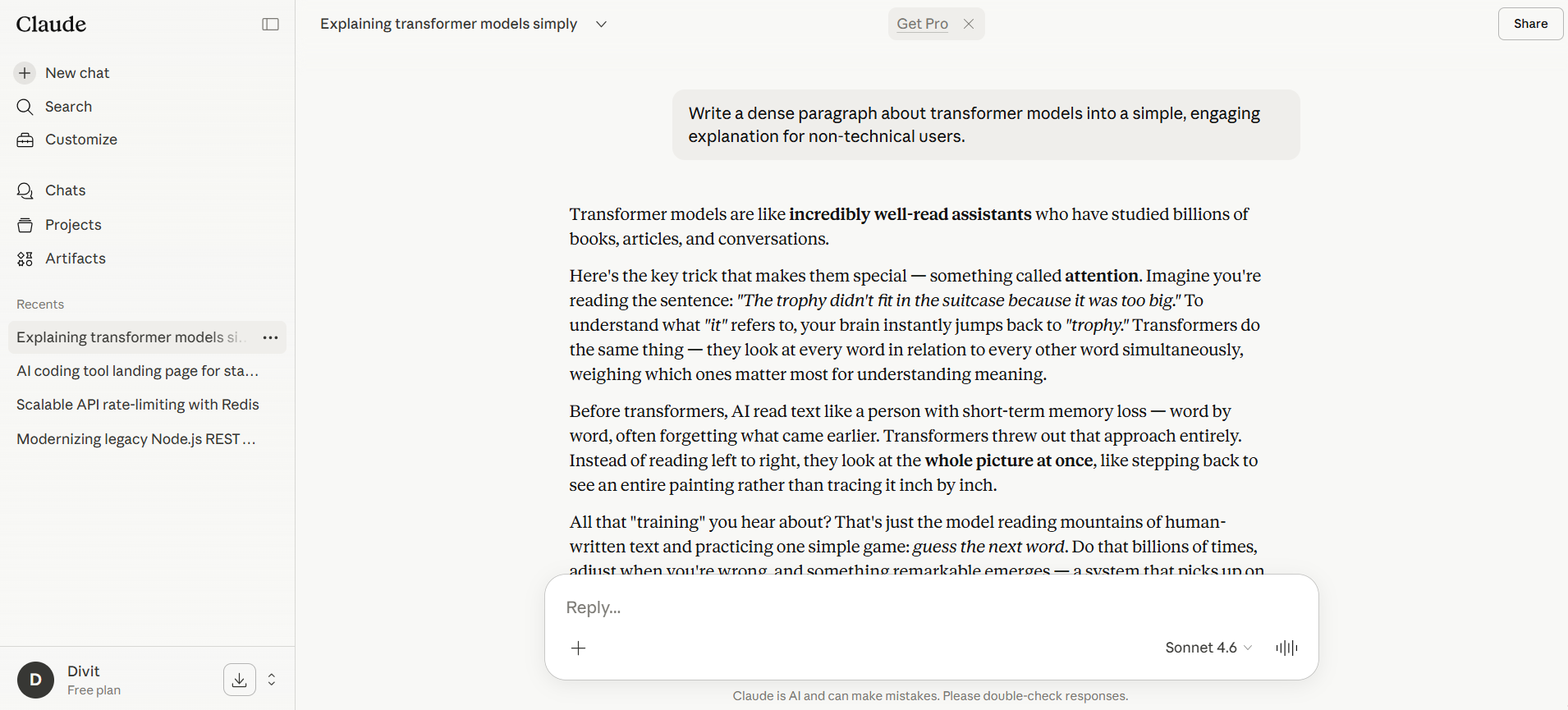

“Write a dense paragraph about transformer models into a simple, engaging explanation for non-technical users.”

DeepSeek output

Note: View the complete thread here.

Claude output

Note: View the complete thread here.

Result

DeepSeek simplified the concept in a technically accurate and structured way, breaking down transformer models into understandable components without losing correctness. However, the explanation still feels slightly “technical underneath”, meaning it reads more like a simplified version of a technical explanation rather than something truly intuitive for a non-technical audience.

Claude, in contrast, reframed the concept into a more natural, analogy-driven explanation. It focuses on making the idea feel obvious, not just understandable, using smoother transitions, clearer mental models, and more conversational phrasing. The result is easier to grasp for someone with no background in machine learning or natural language processing.

The key difference is in how the simplification is done. DeepSeek reduces complexity, Claude translates it into intuition.

Winner: Claude

For research and analysis

If you have ever struggled to quickly make sense of multiple sources and extract what actually matters, this is where the difference becomes clear. One model leans toward structured outputs, while the other focuses on synthesis and clarity.

Prompt 1

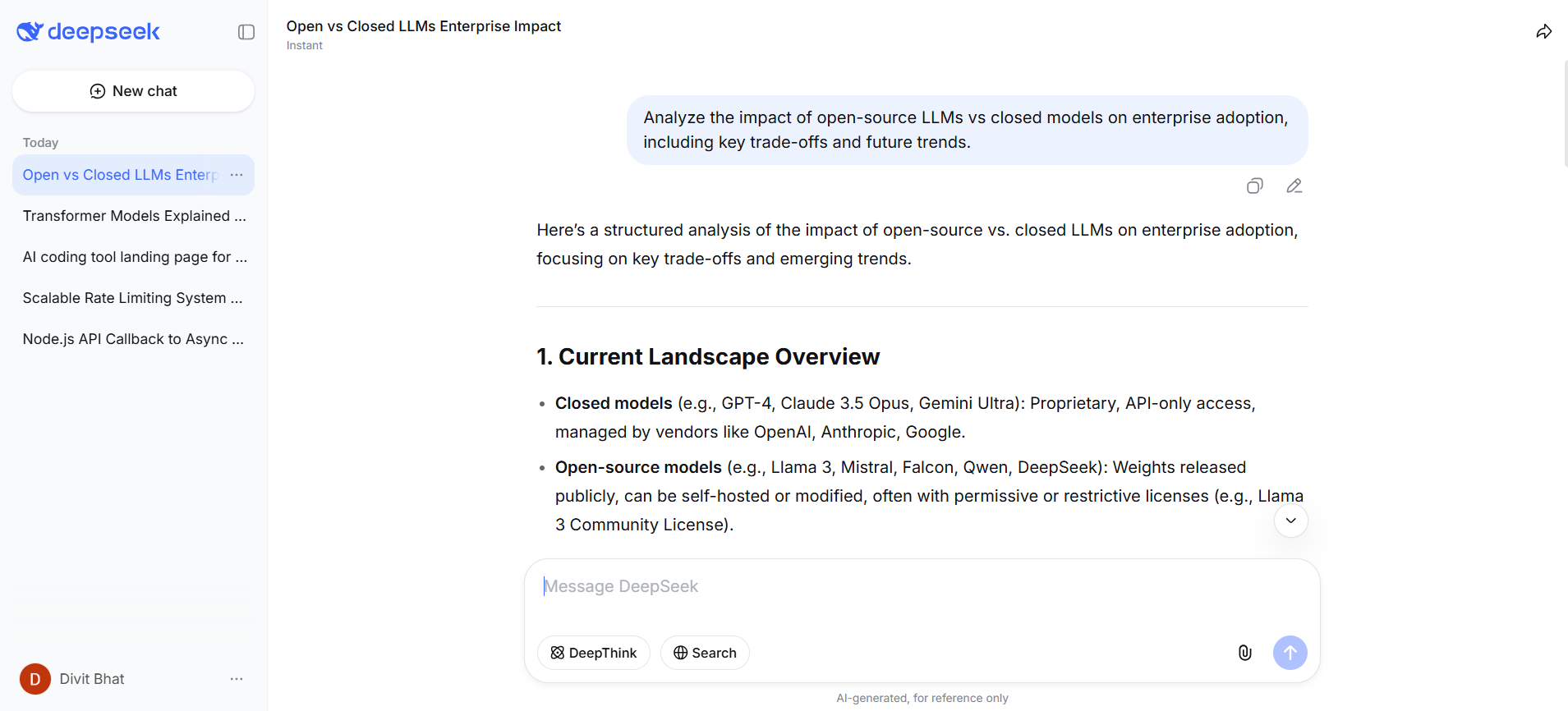

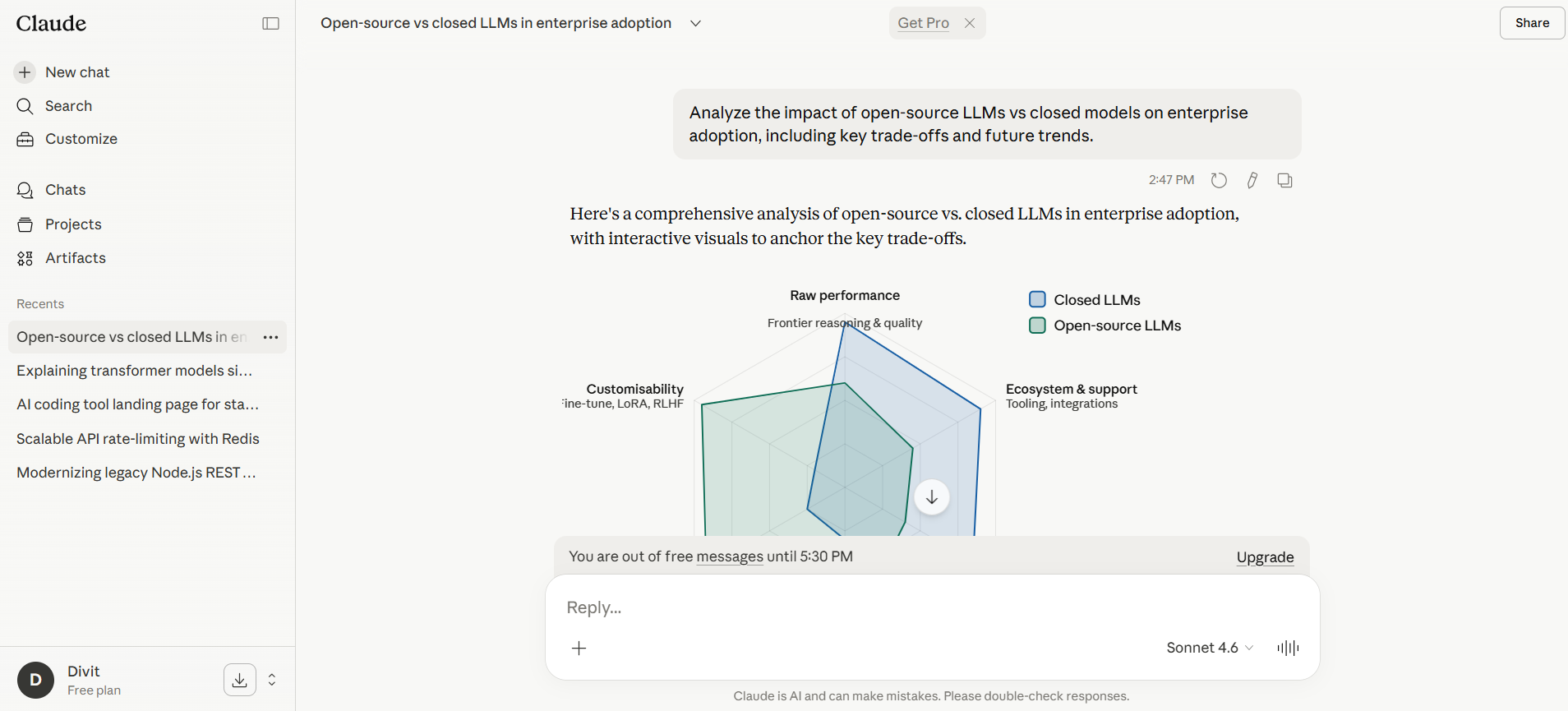

“Analyze the impact of open-source LLMs vs closed models on enterprise adoption, including key trade-offs and future trends.”

DeepSeek output

Note: View the complete thread here.

Claude output

Note: View the complete thread here.

Result

DeepSeek approached this like a structured analyst, breaking the topic into clear sections such as cost, control, performance, and security. The output is comprehensive and logically organized, covering the key trade-offs between open-source and closed models in a systematic way. It reflects how DeepSeek itself is positioned in the market, emphasizing efficiency and accessibility, which aligns with how open-weight models are reshaping the AI landscape.

Claude, in contrast, approached this like a strategy consultant. Instead of just listing trade-offs, it connects them to enterprise decision-making, layering context around scalability, vendor lock-in, and long-term implications. The response feels more synthesized, tying together technical, business, and strategic angles into a clearer narrative.

The difference shows up in decision usability. DeepSeek explains the landscape well, but Claude makes it easier to decide within that landscape.

Winner: Claude

Prompt 2

“Compare three AI infrastructure strategies for startups: using APIs, open-source models, and hybrid approaches. Recommend the best path based on scale and cost.”

DeepSeek output

Note: View the complete thread here.

Claude output

Note: View the complete thread here.

Result

DeepSeek approached this like a framework builder, clearly breaking down each strategy into cost, control, scalability, and operational complexity. The output is structured and logically complete, making it easy to compare options side by side. However, the recommendation tends to feel more generalized, leaning on technical trade-offs rather than adapting deeply to startup realities.

Claude, in contrast, approached this like a decision-maker’s advisor. It not only compared the strategies but also layered in context around startup stage, resource constraints, and long-term implications like vendor lock-in and iteration speed. The recommendation feels more situational, guiding when to choose each path rather than just what each path offers.

This aligns with broader patterns seen across comparisons, where DeepSeek is strong in structured, technical reasoning, while Claude consistently performs better in multi-step, real-world decision scenarios that require clarity and context.

The difference shows up in actionability. DeepSeek helps you understand the options, Claude helps you choose between them.

Winner: Claude

For reasoning ability

This is where real separation happens. Not simple Q&A, but multi-step thinking under complexity. One model focuses on structured computation, while the other handles layered reasoning and abstraction better.

Prompt 1

“A startup has 3 product lines with different margins, overlapping user segments, and limited engineering bandwidth. How should they prioritize features over the next 6 months to maximize revenue while minimizing churn? Provide a structured decision framework.”

DeepSeek output

Note: View the complete thread here.

Claude output

Note: View the complete thread here.

Result

DeepSeek approached this like a structured problem solver, breaking the situation into components such as margins, user segments, and resource constraints. It builds a logical framework and evaluates trade-offs step by step, which aligns with its strength in structured and mathematical reasoning. Research also shows DeepSeek performs strongly in logical deduction and multi-step reasoning tasks, particularly in structured environments.

Claude, however, approaches this like a strategic operator. Instead of just structuring the problem, it connects the pieces into a clearer prioritization narrative, layering in business context, sequencing decisions, and making the framework more directly actionable. This aligns with broader findings where Claude performs better in multi-step, real-world reasoning scenarios that require synthesis and clarity, not just decomposition.

The difference shows up in decision clarity under complexity. DeepSeek helps you analyze the problem, Claude helps you act on it.

Winner: Claude

Prompt 2

“Given a dataset with skewed distribution, design an approach to normalize it and explain when to use log transformation vs standardization vs min-max scaling.”

DeepSeek output

Note: View the complete thread here.

Claude output

Note: View the complete thread here.

Result

DeepSeek approached this like a technical ML practitioner, clearly laying out each method, log transformation, standardization, and min-max scaling, with correct definitions and when to apply them. The explanation aligns well with standard ML practices, for example, using log transformation for skewed data and standardization when features need zero mean and unit variance. These are well-established principles in data preprocessing.

Claude, however, went a step further by turning this into a decision framework rather than just an explanation. Instead of listing methods, it explains how to choose between them based on data shape, algorithm type, and modeling goals. It makes the trade-offs clearer, such as when normalization is needed for distance-based models or when standardization is preferred for gradient-based methods.

The difference is subtle but important. DeepSeek is correct and technically solid, but Claude makes the answer more intuitive and directly usable in practice, especially for someone deciding what to do with a real dataset.

Winner: Claude

Which should you choose: DeepSeek or Claude?

If you want a clear answer, this is not a tie, but it is also not as simple as “one is better than the other.” The right choice depends on whether you are doing coding as execution or coding as engineering.

Choose DeepSeek if your workflow is technical, high-volume, and cost-sensitive.

DeepSeek is the better pick when your focus is raw code generation, algorithms, and structured problem-solving at scale. It performs extremely well in benchmarks, handles mathematical reasoning efficiently, and is significantly cheaper to run. If you are building internal tools, running agents, or generating large amounts of code where cost and speed matter, DeepSeek is the more practical choice.

Choose Claude if your workflow involves real-world coding, reasoning, and decision-making.

Claude is the stronger option for production-level work. It writes cleaner, more maintainable code, understands larger codebases, and performs better in debugging, refactoring, and multi-step reasoning. Beyond coding, it is also far more reliable for writing, research, and complex problem-solving. If you need outputs you can actually use with minimal iteration, Claude is the better default.

The key distinction most people miss:

DeepSeek is better at coding as computation (generate, solve, scale)

Claude is better at coding as engineering (structure, maintain, reason)

The blunt recommendation:

Go with DeepSeek if you care about cost efficiency, raw coding output, and technical performance at scale

Go with Claude if you want the best overall experience across coding, reasoning, and real workflows

For most users, especially those building real products and not just generating code, Claude is the better choice.

From comparing AI models to building with them: How Emergent helps

If you are choosing between DeepSeek and Claude, the real answer depends on how you work. DeepSeek is the better fit for high-volume, cost-efficient coding and technical computation. Claude is the stronger choice for real-world engineering, reasoning, and workflows where clarity and maintainability matter. For most users building actual products, Claude is the safer default.

But this comparison also highlights a bigger shift. The real progression is moving from using AI models for answers to actually building with them. This is where vibe coding comes in, where instead of prompting tools repeatedly, you translate intent directly into working products, workflows, and systems.

Emergent is built exactly for this shift. It is not just another AI assistant, it is an AI app builder and workflow automation platform that lets you turn ideas into full-stack applications without stitching together multiple tools. Instead of choosing between models, you orchestrate them, build on top of them, and ship faster.

The real advantage is not picking the “best” model. Try Emergent now and go from prompts to production.

FAQs

1. What is the main difference between DeepSeek and Claude AI?

DeepSeek focuses on cost-efficient coding and technical reasoning, while Claude is designed for structured thinking, writing, and real-world workflows.