Perplexity vs ChatGPT: The Smarter AI Tool?

Thinking about switching from ChatGPT to Perplexity? Let’s compare research, coding, reasoning, and everyday AI tasks.

Written by: Divit Bhat

TL;DR

The practical takeaway:

Use Perplexity for fast, source-backed real-time information

Use ChatGPT to think, write, solve, or build step-by-step

The core difference:

Perplexity = find and verify information

ChatGPT = turn information into something usable

What most people actually do:

Start with Perplexity to research and validate

Move to ChatGPT to refine, decide, and execute

At a glance, Perplexity and ChatGPT can feel interchangeable, as if there were hardly anything to compare. You ask a question, you get an answer. But the moment the task requires depth or nuance, whether it is writing, decision-making, or problem-solving, the gap becomes obvious. This is less about which tool is better and more about which one fits the kind of work you are actually trying to do.

To make that difference clear, I tested both tools across multiple real-world use cases, covering students, developers, content creators, and business operators. For each scenario, I used the same prompts and compared the actual outputs side by side. What you’ll see ahead is not a feature comparison, but how these tools behave when you actually use them.

The fundamental difference

Perplexity starts by looking outward. Every query is treated like a research task: it pulls from live sources, prioritizes what is recent and relevant, and builds a response around those references. The citations are not an add-on; they are the foundation of the answer itself. That is why it feels fast and reliable when your goal is to understand what is happening, what people are saying, or what the current consensus looks like.

ChatGPT starts from a different place. It looks inward first, using its training, the context you provide, and the way you frame the problem to generate an answer. Instead of asking “what do sources say,” it focuses on “what is the most coherent way to solve or explain this.” That shift is subtle, but it changes the output completely. You get structure, reasoning, and iteration, not just aggregation.

So the gap is not just search vs writing; it is retrieval vs reasoning as the starting point. Once you see that clearly, you stop forcing one tool into the other’s role, and your workflow becomes much more efficient without needing to overthink the choice every time.

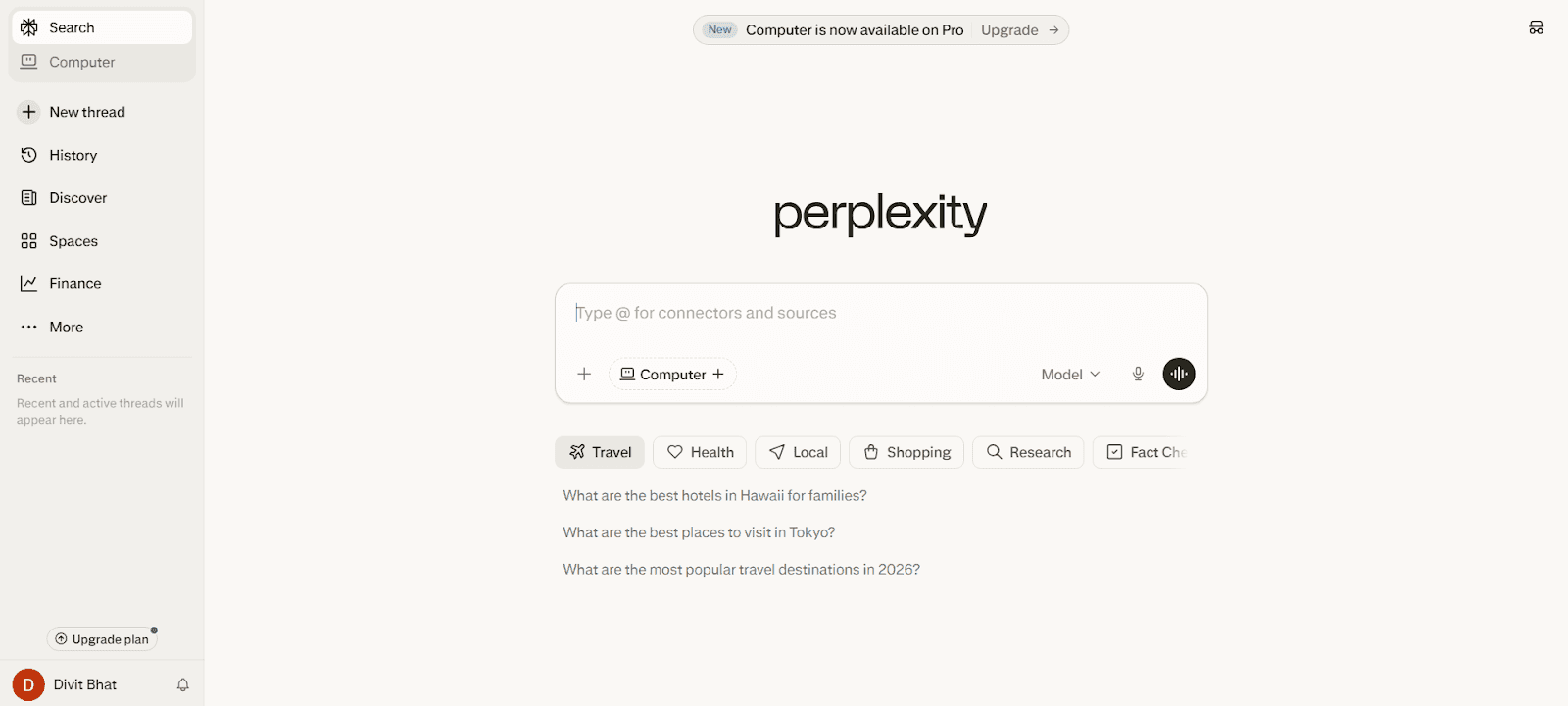

Perplexity AI (Answer engine)

Perplexity AI is not just another chatbot layered on top of a language model. It is built as an answer engine where retrieval is the core system, not a feature added later. Every query is treated as a live information problem, which means the system actively pulls, ranks, and synthesizes data from the web in real time before forming a response. This is fundamentally different from tools that generate first and optionally attach sources after.

What makes it stand out is how tightly integrated retrieval is with the answer generation itself. It does not simply list links or summarize one source, it performs multi-source synthesis while preserving traceability. That is why the citations feel native to the response instead of being appended for credibility. In practice, this reduces the gap between asking a question and trusting the authenticity of the answer, which is where most traditional search workflows break down.

Another layer that often gets missed is how Perplexity structures its experience around continuous research rather than isolated prompts. Features like Copilot and focus modes are designed to guide queries, refine intent, and narrow down sources (academic, web, etc.), which makes it behave like a research assistant rather than a static search tool.

This is also why it feels faster for discovery workflows, as it removes intermediate steps like opening tabs, cross-checking sources, and manually synthesizing information.

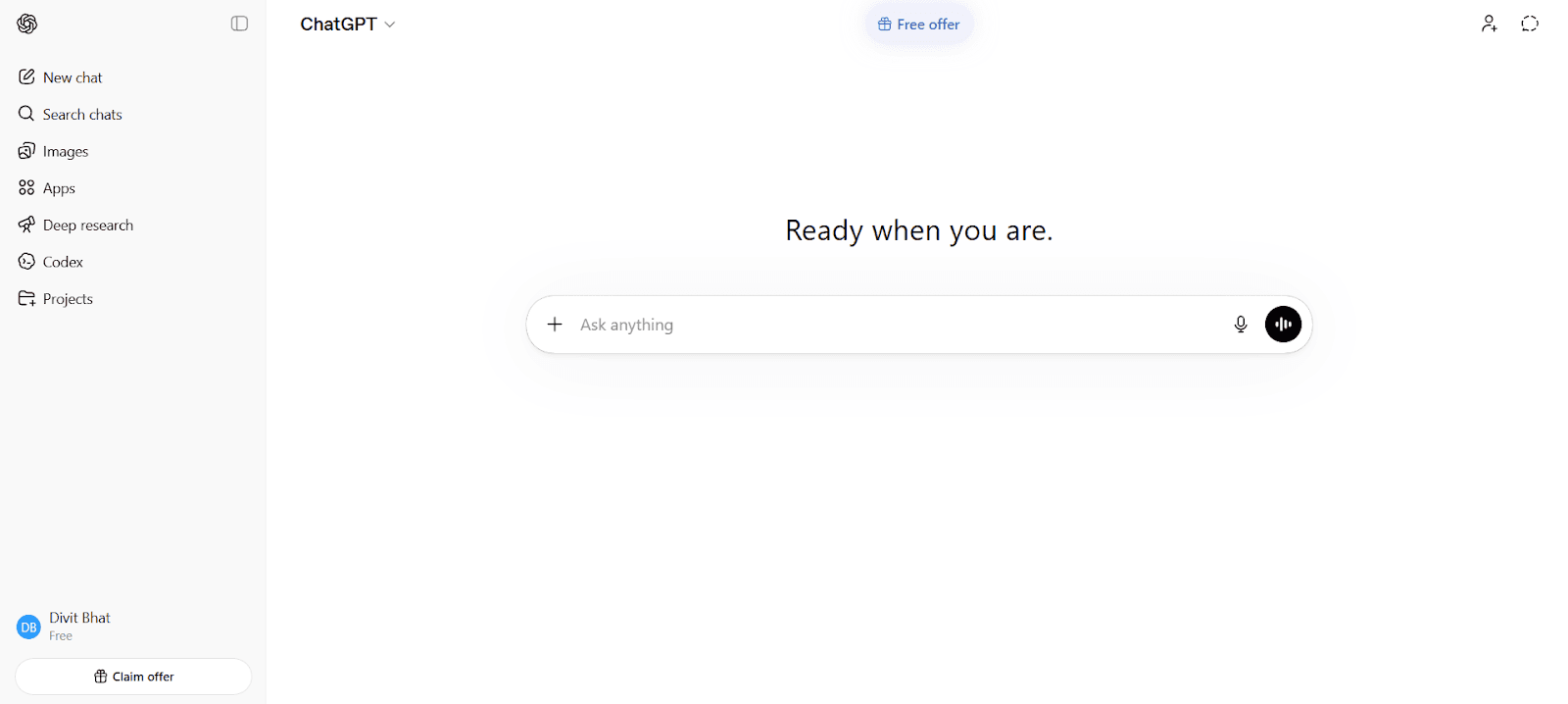

ChatGPT (Reasoning engine)

ChatGPT is built around generation first, but more importantly, around structured reasoning and iteration. It does not treat every query as a lookup problem. Instead, it treats it as something that can be broken down, interpreted, and reshaped based on context. That is why it feels less like a search tool and more like a system you can think with.

What sets it apart is how flexible the interaction layer is. You are not just asking for an answer, you are building toward one. The model keeps track of context across turns, adapts to constraints you introduce, and can refine outputs in multiple passes without losing direction. This makes it particularly strong for tasks where the first answer is rarely the final one, like writing, problem solving, coding, or strategy.

Another difference that does not get talked about enough is how much control you have over the output itself. Through prompting, system instructions, and tools available via the API, you can shape tone, structure, depth, and even the reasoning path the model takes. This is why it scales beyond casual use. It can act as a writing partner, a coding assistant, or a decision support system depending on how you guide it. In practice, that makes it far more adaptable for workflows where the goal is not just to find information, but to turn that information into something usable.

Perplexity vs ChatGPT - The most comprehensive comparison table

Choosing between these two comes down to how you work, not just what features they offer. This table breaks down the differences across the areas that actually impact real usage.

| Criteria | ChatGPT | Perplexity AI |
| --- | --- | --- |
| Purpose of the platform | Reasoning, creation, and problem-solving engine | AI-powered answer engine built for search and research |
| Who can use | Writers, developers, students, businesses, general users | Researchers, students, analysts, general users |
| Best for | Writing, coding, decision-making, workflows | Real-time search, fact-checking, quick research |
| Platform support | Web, mobile apps, API integrations | Web, mobile apps |
| Types of models | Multiple advanced models with different capabilities | Mix of proprietary + external models (search-focused) |
| Real-time data connection | Limited, requires browsing tools | Strong, built on live web retrieval by default |
| Content creation | High-quality, structured, publish-ready outputs | Informational, summary-style content |
| Code generation | Strong, supports debugging, optimization, iteration | Good for standard solutions and references |
| Image creation | Supported (generation + editing workflows) | Limited / not core focus |
| Data analysis | Strong, supports structured reasoning and transformations | Limited to summarization of available data |
| Thinking context | Maintains multi-turn context and adapts deeply | Limited conversational depth |
| Deep research | Requires prompting and validation | Native, source-backed multi-source synthesis |
| Customization | High control via prompts, instructions, API | Limited control over output style |
| UI and UX | Flexible but depends on user prompting skill | Extremely simple, search-like experience |
| Unique interesting features | Iteration, reasoning, extensibility via API | Copilot, focus modes, built-in citations |
| Pricing | Free + paid tiers with advanced capabilities | Free + paid tiers for enhanced search and models |
| Iteration & refinement | Strong, improves outputs across multiple turns | Limited depth in iterative workflows |
| Source transparency | Optional, needs explicit prompting | Native citations in every response |

Perplexity AI features that actually change how you search

Perplexity AI is not just adding AI on top of search; it is redesigning how search itself works. The features that matter are the ones that remove steps from your workflow, not the ones that merely add capabilities.

Search that runs a retrieval pipeline, not just a query

Every question in Perplexity triggers a live retrieval process where multiple sources are pulled, ranked, and synthesized before you even see an answer. This is very different from traditional search, where you are given links and expected to do the synthesis yourself.

What matters here is not that it “uses sources,” but that retrieval is the first step, not an afterthought. The answer is built on top of that pipeline, which is why it feels closer to a finished output than a starting point.

Native source attribution, not appended citations

Most tools that show sources add them after generating an answer. Perplexity structures the answer around its sources.

This changes how you read the output. You are not guessing whether something is reliable and then checking links manually. You can trace each part of the answer back to where it came from without breaking your flow. That is a small shift, but it removes one of the most time-consuming parts of research.
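To make the "retrieve, rank, then answer with traceable sources" idea concrete, here is a toy sketch. It is not how Perplexity is implemented: the in-memory corpus, the word-overlap scoring, and names such as `Source`, `rank_sources`, and `answer_with_citations` are all assumptions made up for illustration.

```python
from dataclasses import dataclass


@dataclass
class Source:
    title: str
    text: str


def rank_sources(query: str, sources: list[Source], top_k: int = 2) -> list[Source]:
    """Rank sources by word overlap with the query (a crude stand-in for real ranking)."""
    query_words = set(query.lower().split())
    scored = [(len(query_words & set(s.text.lower().split())), s) for s in sources]
    # Drop sources with no overlap, then keep the highest-scoring ones.
    relevant = sorted((pair for pair in scored if pair[0] > 0),
                      key=lambda pair: pair[0], reverse=True)
    return [source for _, source in relevant[:top_k]]


def answer_with_citations(query: str, sources: list[Source]) -> str:
    """Build the answer from the ranked sources so every line carries its citation."""
    ranked = rank_sources(query, sources)
    body = [f"{src.text} [{i}]" for i, src in enumerate(ranked, start=1)]
    refs = [f"[{i}] {src.title}" for i, src in enumerate(ranked, start=1)]
    return "\n".join(body + refs)


# Hypothetical corpus, invented purely for this example.
corpus = [
    Source("Central bank note", "Higher interest rates slow inflation"),
    Source("Cooking blog", "Slow roasting improves flavor"),
    Source("Market brief", "Inflation eased last quarter"),
]

print(answer_with_citations("what affects inflation and interest rates", corpus))
```

The point of the sketch is the ordering: sources are selected first, and the answer text is assembled from them, so each statement keeps a numbered pointer back to where it came from.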

Guided querying through Copilot

Perplexity’s Copilot actively shapes your query before and during the search process.

Instead of expecting you to write the perfect prompt, it asks follow-up questions, narrows scope, and adjusts the search direction. This makes a big difference in research-heavy tasks, where the quality of the question often determines the quality of the answer.

Focus modes that change the data layer itself

One of the more underrated features is the ability to switch between different “focus” modes, like web, academic, or specific domains.

This is not just filtering results after the fact. It changes where the system looks for information in the first place, which directly affects the quality and relevance of the output. For example, academic mode prioritizes research-backed content instead of general web summaries.

Threaded research, not one-off queries

Perplexity treats research as a continuous process rather than isolated prompts.

You can refine questions, dig deeper, and build on previous queries within the same thread without losing context. This reduces the need to restart searches or reframe queries from scratch, which is a common friction point in traditional search workflows.

Faster “question to answer” loop

The biggest advantage is not a single feature, but the reduction of steps.

In a traditional flow, you search → open links → scan → compare → synthesize.

In Perplexity, that loop is compressed into a single interaction.

That is why it feels faster: not just in response speed, but in the total time it takes to reach a source-backed answer.

ChatGPT features that actually change how you think and work

ChatGPT is often described as a chatbot, but that framing misses what makes it useful at scale. Its real strength is not just generating answers; it is how much control you have over how those answers are created, refined, and reused.

Context as a working memory, not just chat history

ChatGPT does not treat each prompt in isolation. It builds a working context across turns, which means you can layer constraints, refine ideas, and iterate without starting over.

This is what turns it from a Q&A tool into something closer to a workspace. You are not just asking questions, you are building toward an outcome step by step. Over multiple turns, the model adapts to your intent, tone, and direction, which reduces the need to restate everything every time.

Instruction control that shapes the output itself

Most users think prompting is just “asking better questions.” In reality, ChatGPT allows you to control how the model behaves.

Through structured instructions, system-level guidance, and role framing, you can influence tone, depth, format, and reasoning style. This is why two users can get completely different outputs for the same task. The tool is not fixed, it adapts based on how you direct it.

That level of control is what makes it useful for serious work, not just casual queries.
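As a rough illustration of instruction control, the sketch below builds two conversation payloads for the same task with different system-level guidance. It assumes the role/content message format commonly used by chat-style APIs; the helper `build_messages` and the example instructions are hypothetical, and no API call is made here.

```python
def build_messages(system_instruction, turns):
    """Return a chat-style message list: one system message, then the prior turns in order."""
    messages = [{"role": "system", "content": system_instruction}]
    for role, content in turns:
        if role not in ("user", "assistant"):
            raise ValueError(f"unexpected role: {role}")
        messages.append({"role": role, "content": content})
    return messages


# The same user task, framed by two different (made-up) system instructions.
task = [("user", "Explain why our signup conversion dropped last month.")]

analyst = build_messages(
    "You are a data analyst. Answer in three bullet points and state your assumptions.",
    task,
)
coach = build_messages(
    "You are a writing coach. Answer in plain language for a non-technical founder.",
    task,
)

# Later turns are appended to the same list, which is how earlier context carries forward.
follow_up = build_messages(
    analyst[0]["content"],
    task + [("assistant", "(previous answer)"), ("user", "Make this shorter.")],
)
```

The user message is identical in `analyst` and `coach`; only the system instruction differs, which is the lever the section above describes.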

Multi-step reasoning as a default capability

ChatGPT is designed to break problems down internally before answering. Whether it is writing, coding, or decision-making, it can hold multiple steps in context and build toward a structured output.

This is why it performs better on tasks that are not clearly defined at the start. You can begin with a rough idea and refine it through interaction until it becomes usable. The model is not just retrieving information, it is actively shaping it.

Iteration without losing direction

One of the most underrated capabilities is how well ChatGPT handles iteration.

You can ask for changes, “make this shorter,” “rewrite this for a different audience,” “turn this into a plan,” and the model adjusts while keeping the core intact. This is difficult to replicate with tools that treat each query independently.

This makes it especially valuable in workflows where the first output is rarely final, which describes most real-world work.

Tool and API extensibility (beyond the interface)

Through its API and developer ecosystem, ChatGPT is not limited to the chat interface. It can be embedded into products, workflows, and internal tools.

You can combine it with external data, automate tasks, and build systems that generate, transform, or analyze information at scale. This is where it shifts from a tool you use to something you build with.

Output transformation, not just generation

The biggest shift is this: ChatGPT is not just good at producing answers, it is good at changing them.

You can take raw information, restructure it, simplify it, expand it, or adapt it for a completely different purpose. This makes it useful at every stage of work, from rough thinking to final output.

Perplexity vs ChatGPT: How they actually perform for different users

This is where the difference becomes obvious. Instead of comparing features, it helps to look at what happens when you use both tools for the kind of work you actually do. Same prompt, same intent, but very different outputs depending on how each system approaches the problem.

Students

If you are studying, the goal is usually not just to find an answer, but to actually understand it, apply it, and sometimes explain it in your own words. That is where the difference between retrieval and reasoning starts to show up clearly.

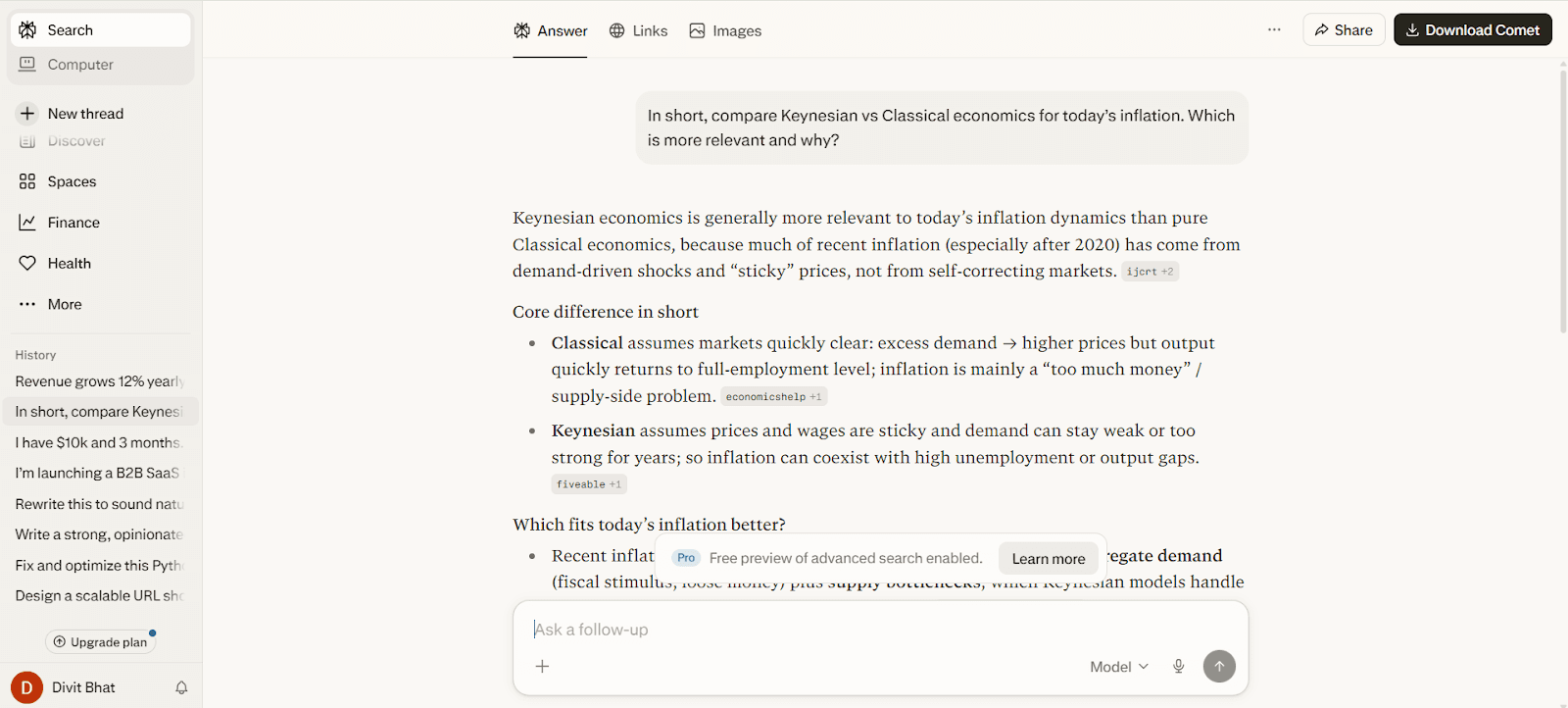

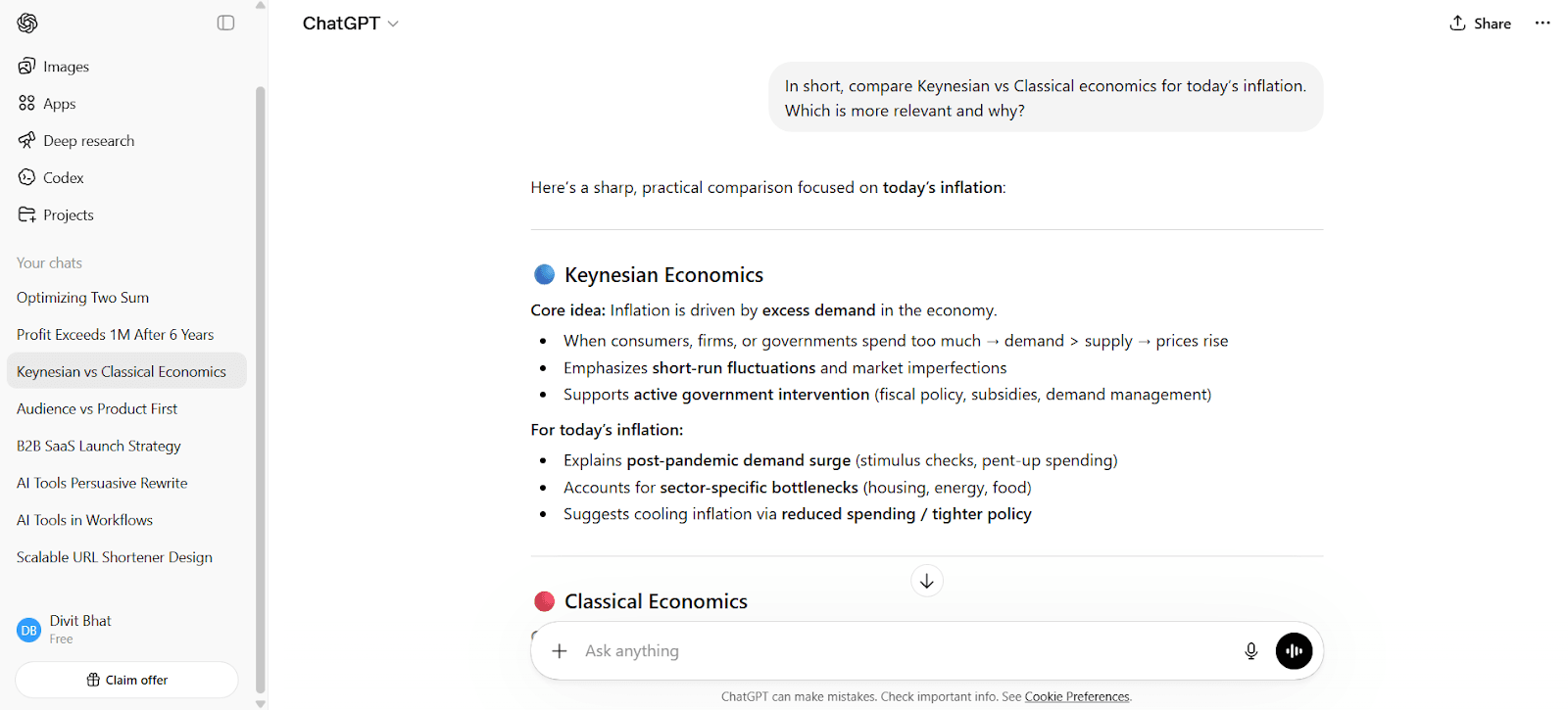

a) Comparing economic theories in a real-world context

Prompt

In short, compare Keynesian vs Classical economics for today’s inflation. Which is more relevant and why?

Perplexity AI output

Note: Read the complete output here.

ChatGPT output

Note: Read the complete output here.

Result

Perplexity anchors everything in sources. It connects recent inflation to demand shocks, supply issues, and policy responses, while showing where each point comes from. If you need something you can trust and reference, it does that with almost no extra effort.

ChatGPT focuses on clarity. It structures the comparison cleanly, simplifies the ideas, and gives you a conclusion you can actually reuse. The way it separates causes and solutions makes it easier to understand and write from.

Both are correct. The difference is usability:

Use ChatGPT when you need to actually understand it.

Use Perplexity when you need validated information fast.

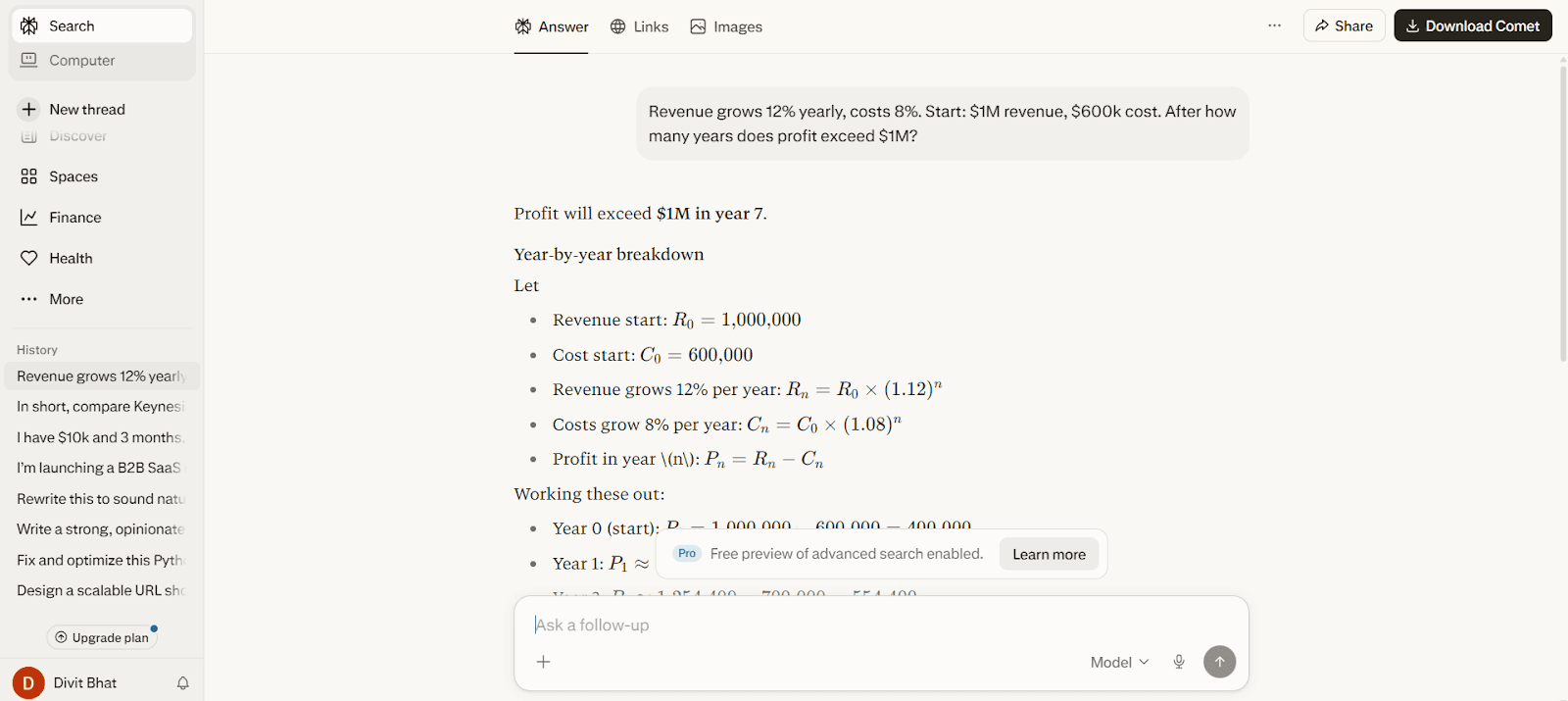

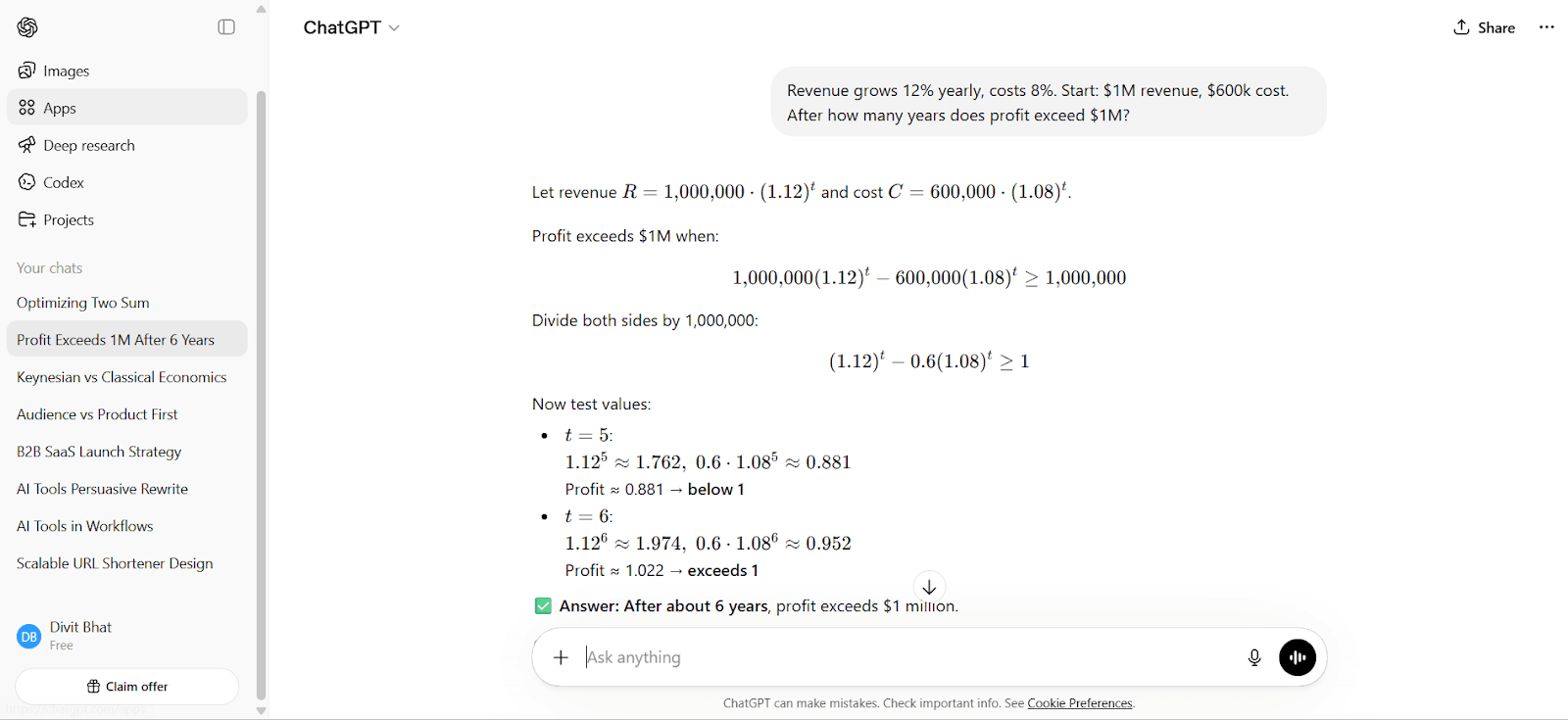

b) Solving a multi-step financial problem

Prompt

Revenue grows 12% yearly, costs 8%. Start: $1M revenue, $600k cost. After how many years does profit exceed $1M?

Perplexity AI output

Note: Check the results here.

ChatGPT output

Note: Check the results here.

Result

Both get to the same outcome, but how they get there is very different.

Perplexity walks through a full year-by-year breakdown and even clarifies the ambiguity between “year 6” and “year 7.” That makes it useful if you want to double-check logic or see the progression clearly.

ChatGPT takes a more direct route. It sets up the equation, simplifies it, and quickly tests values to arrive at the answer. The reasoning is tighter, which makes it easier to follow and replicate under time pressure.

The difference here is not accuracy, it is efficiency vs exhaustiveness:

Use Perplexity when you want a detailed breakdown and validation.

Use ChatGPT when you want a clean, faster path to the answer.
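The arithmetic behind the prompt can be checked with a short script. This is a minimal sketch, assuming growth compounds once per year and that "after n years" means n full rounds of growth from the starting figures; the function name `years_until_profit_exceeds` is made up for illustration.

```python
def years_until_profit_exceeds(revenue, cost, revenue_growth, cost_growth,
                               target, max_years=100):
    """Return the first number of full growth years after which profit tops the target."""
    for year in range(1, max_years + 1):
        revenue *= 1 + revenue_growth   # revenue compounds at its own rate
        cost *= 1 + cost_growth         # cost compounds at its own rate
        if revenue - cost > target:
            return year
    raise ValueError("target not reached within max_years")


years = years_until_profit_exceeds(
    revenue=1_000_000, cost=600_000,
    revenue_growth=0.12, cost_growth=0.08,
    target=1_000_000,
)
print(years)  # 6
```

After 5 years of growth the profit is roughly $881k, and after 6 years it is roughly $1.02M, so the threshold is crossed after 6 years of growth. If you count the starting figures as year 1, that same point is year 7, which is the ambiguity mentioned above.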

Developers

If you are building or debugging, the question is not just “is this correct,” it is “can I actually use this output to move forward.” Small differences in how the answer is structured or explained start to matter a lot more here.

a) Fixing and optimizing inefficient code

Prompt

Fix and optimize this Python code for large inputs, explain your approach:

```python
def two_sum(nums, target):
    for i in range(len(nums)):
        for j in range(i+1, len(nums)):
            if nums[i]+nums[j]==target:
                return [i,j]
```

Perplexity AI output

Note: View the detailed output here.

ChatGPT output

Note: View the detailed output here.

Result

Both tools identify the core issue immediately, the O(n²) brute-force approach, and both move to the standard hash map solution. So at a surface level, they look very similar.

The difference shows up in how the solution is delivered.

Perplexity presents it like a clean reference answer. It explains the complexity shift, lays out the steps clearly, and backs the approach with external sources. If you already understand the problem and just want to confirm the correct pattern and why it works, this is solid and quick to trust.

ChatGPT’s response typically leans more into explanation flow and reasoning clarity. It focuses less on citing sources and more on helping you follow the transition from brute force to optimized logic in a way that feels easier to internalize and adapt.

So the gap here is subtle but important:

Perplexity is stronger when you want a validated, textbook-correct solution quickly.

ChatGPT is more useful when you want to understand the transition and reuse the pattern in a slightly different problem.
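For reference, the standard hash map approach that both tools converged on can be sketched as follows. This is a generic version of the pattern, not a copy of either tool's output.

```python
def two_sum(nums, target):
    """Return indices of two numbers that add up to target, or None if no pair exists."""
    seen = {}  # maps a value to the index where it was first seen
    for i, value in enumerate(nums):
        complement = target - value
        if complement in seen:
            # The complement appeared earlier, so the pair is complete.
            return [seen[complement], i]
        # Store only the first index of each value.
        seen.setdefault(value, i)
    return None


print(two_sum([2, 7, 11, 15], 9))  # [0, 1]
```

Each element is visited once and dictionary lookups are constant time on average, so the runtime drops from O(n²) to O(n) at the cost of O(n) extra memory. One behavioral difference to be aware of: when several valid pairs exist, this version may return a different pair than the nested-loop original.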

b) Designing a scalable system

Prompt

Design a scalable URL shortener like Bitly. Cover architecture, database, and handling high traffic.

Perplexity AI output

Note: Use this link to read the whole thread in detail.

ChatGPT output

Note: Use this link to read the whole thread in detail.

Result

Perplexity approaches this like a well-structured overview. It typically lays out the key components, load balancer, database, caching, and scaling strategies, and aligns them with standard system design patterns. This makes it useful when you want to quickly understand what a complete system should include, especially for revision or high-level clarity.

That aligns with how Perplexity works overall: it pulls from multiple sources and synthesizes a structured answer rather than building one from first principles.

ChatGPT, in contrast, usually treats this as a problem to be built step by step. The answer tends to go deeper into request flow, trade-offs (like SQL vs NoSQL, hash generation strategies, collision handling), and how each component interacts under load. That makes it feel closer to something you could use in an interview or actually implement, not just describe.

The difference here is depth vs coverage:

Use Perplexity when you want a clean, high-level system overview fast.

Use ChatGPT when you need to think through the system and its trade-offs in detail.
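To ground the "hash generation strategies" trade-off, here is one commonly discussed option: give each URL a unique numeric ID and encode it in base62. This is a sketch under that assumption, not what either tool produced; `ALPHABET`, `encode_base62`, and `decode_base62` are illustrative names.

```python
import string

# 62 URL-safe characters: 0-9, a-z, A-Z.
ALPHABET = string.digits + string.ascii_lowercase + string.ascii_uppercase


def encode_base62(number):
    """Turn a non-negative integer ID into a short base62 code."""
    if number < 0:
        raise ValueError("ID must be non-negative")
    if number == 0:
        return ALPHABET[0]
    chars = []
    while number:
        number, remainder = divmod(number, 62)
        chars.append(ALPHABET[remainder])
    return "".join(reversed(chars))


def decode_base62(code):
    """Recover the integer ID from a base62 code."""
    number = 0
    for ch in code:
        number = number * 62 + ALPHABET.index(ch)
    return number


print(encode_base62(62))  # 10
```

Because every ID is unique, the codes cannot collide, which sidesteps the collision handling that hashing the URL itself would need. The trade-off is that sequential IDs produce guessable codes and require a coordinated ID generator once traffic is spread across many servers.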

Content creators

If you are creating content, it has to sound natural, hold attention, and actually work for the reader. This is where small differences in tone, structure, and depth become very obvious.

a) Writing something that actually reads well

Prompt

Write a strong, opinionated article on why most AI tools fail in real workflows, in 400 words.

Perplexity AI output

Note: Here is the link for this thread.

ChatGPT output

Note: Here is the link for this thread.

Result

Perplexity approaches this like a well-informed summary. It brings in common failure themes, grounds them in real-world observations, and structures the answer cleanly. If you are trying to understand what’s already known or validated about the topic, it gives you a solid base very quickly.

But it stays close to aggregation. The ideas are correct, but the voice does not fully carry through as a strong, opinionated narrative.

ChatGPT takes a different route. It builds a clearer point of view, connects ideas into a flowing argument, and writes in a way that feels closer to something you would actually publish. The examples and transitions are designed to hold attention, not just inform.

So the gap here is not information, it is writing intent.

Use Perplexity to ground your ideas and see the landscape.

Use ChatGPT to turn those ideas into something people will actually read.

b) Rewriting to sound natural and persuasive

Prompt

Rewrite this to sound natural and persuasive: “AI tools are becoming popular and improve efficiency across domains.”

Perplexity AI output

ChatGPT output

Result

This is a much simpler task, but it reveals a lot about how each tool handles language.

Perplexity usually improves the sentence, but often stays close to the original phrasing. In this case, Perplexity added corporate jargon that made the sentence more vague than persuasive.

ChatGPT tends to go further in reshaping the sentence. It adjusts tone, flow, and emphasis in a way that feels more human and less templated. That makes a big difference when you are working on content where how it sounds matters as much as what it says.

If you just need something corporate-ready, Perplexity works fine.

If you want something that feels natural and ready to use, ChatGPT is usually stronger.

Business operators

If you are making business decisions, you are not looking for just information or structure. You need something that helps you decide, prioritize, and act. This is where depth and clarity matter more than anything else.

a) Building and positioning a product in a competitive market

Prompt

I’m launching a B2B SaaS in a crowded market. How should I position, price, and get my first 100 customers?

Perplexity AI output

Note: Find the full thread here.

ChatGPT output

Note: Find the full thread here.

Result

Perplexity usually approaches this by pulling together common strategies, positioning frameworks, pricing models, and acquisition tactics. It gives you a broad view of what typically works and anchors it in established thinking. That makes it useful when you want to quickly understand the landscape and available approaches.

But it often stays at the level of aggregation. The advice is correct, but it may not feel tightly connected into a single, opinionated path forward.

ChatGPT tends to go further in shaping a direction. It connects positioning, pricing, and acquisition into a more cohesive strategy and makes clearer recommendations. The output usually feels more like something you can act on directly, rather than a list of possible approaches.

The difference here is clarity of direction.

Use Perplexity when you want to explore options and validate approaches.

Use ChatGPT when you need to decide and move forward.

b) Making a decision under constraints

Prompt

I have $10k and 3 months. Should I build a product or grow an audience first? Give a clear recommendation.

Perplexity AI output

Note: Read the thread in detail here.

ChatGPT output

Note: Read the thread in detail here.

Result

Perplexity approaches this like a structured exploration. It typically lays out both paths, product vs audience, explains the pros and risks of each, and grounds the answer in general startup patterns. That aligns with how it works overall: it pulls from existing knowledge and synthesizes a balanced view.

ChatGPT, in contrast, is more decisive. It weighs the constraints directly, time, capital, and uncertainty, and leans toward a clear recommendation with reasoning. The response usually feels more like guidance you can act on immediately, not just evaluate.

This difference shows up consistently in decision-making tasks. ChatGPT tends to perform better when nuance, prioritization, and clarity of direction matter, while Perplexity is stronger when you want a well-rounded view of available approaches.

So the gap here is simple.

Use Perplexity when you want to see the full picture and trade-offs.

Use ChatGPT when you need to make a call and move forward.

Pros and cons: Perplexity vs ChatGPT

Perplexity AI works best when the goal is to get reliable information quickly. These pros and cons reflect how it performs in real research workflows, not just feature comparisons.

Pros and cons of Perplexity AI

| Pros | Cons |
| --- | --- |
| Real-time, source-backed answers with built-in citations | Limited depth in reasoning for complex problems |
| Reduces research time by synthesizing multiple sources instantly | Multi-turn conversations feel shallow compared to ChatGPT |
| High trust for factual queries and verification tasks | Output can feel like a compiled summary, not original thinking |
| Extremely simple, search-like user experience | Limited control over tone, structure, or output style |
| Strong for quick discovery and staying updated | Not ideal for writing, strategy, or creative tasks |
| Focus modes improve relevance (academic, web, etc.) | Iteration and refinement workflows are limited |

ChatGPT is built around reasoning, creation, and iteration. These pros and cons focus on how it behaves when you are actually using it to write, solve, or build something.

Pros and cons of ChatGPT

| Pros | Cons |
| --- | --- |
| Strong reasoning and structured problem-solving capabilities | May require manual verification for factual accuracy |
| High-quality writing, natural tone, and content creation | Not inherently real-time without browsing |
| Excellent for coding, debugging, and technical explanations | Can be overly verbose without proper prompting |
| Maintains context across conversations for deeper workflows | Learning curve to get the best outputs |
| Highly customizable via prompts and instructions | No built-in source attribution by default |
| Strong iteration, refinement, and multi-step workflows | Can sound confident even when partially incorrect |

Perplexity vs ChatGPT: Which one should you actually use?

The choice is not about which tool is better overall; it is about which one reduces friction for the exact task in front of you. This breakdown maps real situations to the tool that gets you to a usable outcome faster.

| Situation | Goal | Use | Why this works |
| --- | --- | --- | --- |
| You need the latest, verifiable information | Up-to-date facts with sources you can trust | Perplexity AI | Built-in retrieval + citations removes manual verification |
| You are researching a topic from scratch | Quick understanding of what exists and what matters | Perplexity AI | Synthesizes multiple sources into a single starting point |
| You need to write something that is reader-friendly | Structure, flow, tone, and clarity | ChatGPT | Strong narrative building and iteration |
| You are solving a complex problem | Step-by-step reasoning and breakdown | ChatGPT | Handles multi-step logic and maintains context |
| You are debugging or writing code | Explanation + usable output | ChatGPT | Better at restructuring and explaining code logic |
| You are making a decision under constraints | Clear recommendation, not just options | ChatGPT | More decisive and context-aware reasoning |
| You need a quick overview before going deeper into a subject | High-level understanding without digging through links | Perplexity AI | Compresses search → read → compare into one step |
| You are iterating on an idea multiple times | Refinement across drafts or steps | ChatGPT | Maintains direction across multiple turns |
| You want to save time in research workflows | Fewer tabs, faster synthesis | Perplexity AI | Eliminates manual cross-checking |

The practical way most people end up using both

Start with Perplexity AI to find and verify information quickly

Move to ChatGPT to turn that information into something usable

That flow removes the two biggest bottlenecks: searching for reliable information and then making sense of it.

Final Takeaways

Perplexity AI and ChatGPT are not competitors. They are two halves of a workflow. Perplexity compresses the research loop, getting you from question to source-backed answer without the usual tab-hopping and manual cross-checking. ChatGPT picks up where retrieval ends, turning raw information into structured thinking, polished writing, working code, or a clear decision. The most effective approach is sequential: research with Perplexity, then execute with ChatGPT. Trying to force one into the other's role is where most frustration comes from.

But both tools share the same ceiling. They stop at output you still have to act on. The text, the plan, the code snippet, none of it ships itself. Turning that output into a working product still requires translating ideas into specs, configuring infrastructure, wiring integrations, and managing deployment. That gap between "I know what to build" and "people can use it" is where most projects quietly stall.

Emergent picks up exactly where ChatGPT and Perplexity stop. Describe your app in plain language, and Emergent's AI agents handle the full stack: frontend, backend, database, authentication, testing, and deployment. No specs to write, no infrastructure to configure, no code to stitch together. Your idea goes from a prompt to a live, deployed product you can share, sell, or scale. Start building on Emergent for free.

FAQs

1. What is the main difference between ChatGPT and Perplexity AI?

Perplexity is built for real-time, source-backed answers, while ChatGPT is built for reasoning, writing, and problem-solving. One retrieves, the other transforms.