Best GitHub Copilot Alternatives in 2026 for Coding

Thinking about moving away from GitHub Copilot? Let’s explore the AI coding tools developers are switching to for faster coding and smarter suggestions.

Written by: Divit Bhat

If you want to stay relevant in today’s world, AI-assisted coding is no longer optional. According to the 2025 Stack Overflow Developer Survey, 84% of respondents are using or planning to use AI tools, and 51% of professional developers use them daily. But here’s the reality most developers are already feeling: GitHub Copilot is no longer the only option, and in many workflows, it’s not even the best one anymore.

The ecosystem has matured. Some tools are better at deep reasoning, some at navigating massive codebases, and some go far beyond autocomplete into full product building. This guide cuts through that noise and shows you the best tools for your workflows.

TL;DR

GitHub Copilot is no longer the default best choice for modern development workflows

Cursor and Claude Code excel at deep reasoning, multi-file changes, and system-level work

Amazon Q is highly effective in AWS-native environments but limited outside that ecosystem

Tools like Cody, Qodo, and Augment focus on understanding, reviewing, and scaling real codebases

The shift is moving from autocomplete to agent-based workflows that execute tasks end-to-end

A new category is emerging, platforms like Emergent that build full products, not just code

What is GitHub Copilot?

GitHub Copilot is an AI-powered coding assistant that integrates directly into editors like VS Code and JetBrains IDEs. It suggests code in real time, helps generate functions, and can assist with debugging through chat.

At its core, Copilot is built for inline assistance, meaning it shines when you already know what you’re building and just want to move faster.

But that’s also its limitation.

It doesn’t deeply understand large codebases, struggles with multi-file reasoning, and isn’t designed to help you think through complex architecture decisions. That gap is exactly where newer alternatives are winning.

Top 12 GitHub Copilot alternatives in 2026

The twelve tools covered in this guide compete with, and in many cases outperform, Copilot today.

Each of these solves a slightly different problem. Some replace Copilot directly, others go beyond it.

Best GitHub Copilot alternatives: Quick comparison in 2026

Most comparison tables are surface-level and don’t help you decide. This one is structured around how developers actually evaluate tools in real workflows.

| Tool Name | Best For | Type | Core Strengths | Context Awareness | Multi-File Editing | Agent Capabilities | Setup Required | Free Plan | Pricing | Unique Advantage | Biggest Limitation |
|---|---|---|---|---|---|---|---|---|---|---|---|
| Claude Code | Deep reasoning, debugging | AI assistant | Strong logic, structured thinking | High | Moderate | Emerging | Low | Limited | Paid | Best for complex problem solving | Not IDE-native |
| Amazon Q Developer | AWS-heavy workflows | AI assistant | Cloud integration, infra awareness | High | Moderate | Moderate | Medium | Yes | Tiered | Native AWS context | Limited outside AWS |
| Gemini Code Assist | Google ecosystem devs | AI assistant | Docs + code integration | Moderate | Moderate | Emerging | Low | Yes | Competitive | Strong with Google stack | Inconsistent outputs |
| Cursor | Full codebase editing | AI IDE | Multi-file edits, refactoring | Very High | High | Strong | Medium | Yes | Paid | Deep repo understanding | Requires workflow shift |
| Windsurf | Fast autocomplete + chat | AI IDE | Speed, responsiveness | Moderate | Moderate | Emerging | Low | Yes | Affordable | Smooth UX | Less depth than Cursor |
| Augment Code | Team productivity | AI assistant | Codebase indexing | High | Moderate | Moderate | Medium | No | Paid | Context-aware suggestions | Setup overhead |
| Qodo | Testing + code quality | AI tool | Test generation, validation | Moderate | Low | Low | Medium | Yes | Paid | Focus on correctness | Narrow use case |
| OpenAI Codex | API-driven coding | Model/API | Flexible, programmable | High | Depends | High | High | Limited | Usage-based | Build your own workflows | Not plug-and-play |
| GitLab Duo | DevOps teams | AI suite | CI/CD + code integration | High | Moderate | Moderate | Medium | No | Enterprise | Full pipeline integration | GitLab lock-in |
| JetBrains AI | JetBrains users | IDE assistant | Native IDE experience | Moderate | Moderate | Low | Low | Yes | Paid | Seamless integration | Limited outside IDE |
| Tabnine | Privacy-first teams | AI assistant | On-prem + security | Low | Low | Low | Medium | Yes | Paid | Enterprise privacy | Weak reasoning |
| Sourcegraph Cody | Large codebases | AI assistant | Code search + navigation | Very High | Moderate | Moderate | Medium | Yes | Paid | Best for understanding repos | UI complexity |

This is where things start to get interesting.

If you look closely, Copilot is no longer competing with just “autocomplete tools.” It’s competing with:

IDE-level agents like Cursor

Codebase-aware systems like Cody

Reasoning-first assistants like Claude Code

And increasingly, platforms that don’t just help you code, but help you build entire products

Claude Code

Claude Code is not just another autocomplete tool; it’s an agentic coding assistant that works directly inside your terminal, IDE, or workflows. Unlike GitHub Copilot, which focuses on inline suggestions, Claude Code is built to understand your entire codebase and execute tasks end-to-end.

It can read your project, make multi-file changes, run commands, and even handle workflows like turning issues into pull requests. The shift here is subtle but important: you are no longer just writing code faster, you are delegating development work.

Features of Claude Code

Claude Code is built around the idea of moving from “assistant” to “agent.” These are the features that actually make that possible.

Full codebase understanding

Claude Code doesn’t rely on manually selected files or limited context windows. It uses agentic search to map your entire repository, understand dependencies, and reason across files without you guiding it step by step.

This is where it starts outperforming Copilot, especially in large or unfamiliar codebases.

Multi-file editing and refactoring

Instead of suggesting one function at a time, Claude Code can make coordinated edits across multiple files, ensuring changes actually work together.

This becomes critical when you’re doing refactors, migrations, or feature rollouts that span the entire project.

Terminal-native workflow execution

Claude Code lives in your terminal and can execute commands, run scripts, and interact with your existing CLI tools like Git.

That means you are not constantly switching tools; the AI operates inside your actual development environment.

End-to-end task automation

You can give Claude Code a high-level instruction, such as fixing a bug or implementing a feature, and it can handle the full workflow: reading code, writing changes, running tests, and preparing PRs.

This is a major shift from “assistive AI” to “executional AI.”

Works across your existing stack

Claude Code integrates with IDEs like VS Code and JetBrains, as well as tools like Slack, GitHub, and GitLab.

It adapts to your workflow instead of forcing you into a new one, which lowers adoption friction significantly.

Biggest advantages and limitations of using Claude Code

| Advantages | Limitations |
|---|---|
| Deep understanding of entire codebases, not just snippets | Can feel overkill for simple autocomplete needs |
| Strong multi-file reasoning and coordinated edits | Requires trust in AI making broader changes |
| Executes workflows, not just suggestions | Terminal-first approach may not suit all developers |
| Reduces context switching across tools | Performance depends on model tier (Opus vs Sonnet) |
| Handles complex tasks like refactors and PR creation | Can be slower than lightweight tools for quick edits |

Reviews from trusted platforms

Claude Code is relatively new compared to older tools, but the feedback is strong, especially among power users.

Platform Name | Reviews in Stars |

|---|---|

4.5 / 5 | |

4.5 / 5 | |

5 / 5 |

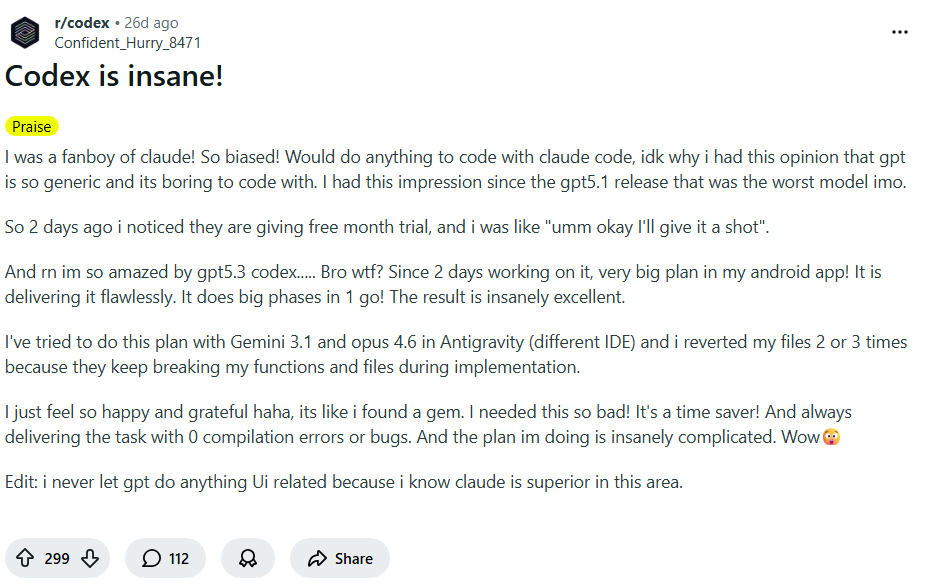

What users are saying about Claude Code?

The discussion around Claude Code isn’t just hype or criticism; it’s a mix of both. That balance gives a clearer picture of where it stands today.

It genuinely feels smarter than typical coding assistants

Many developers point out that Claude doesn’t just autocomplete; it reasons through problems. It can follow longer chains of logic, understand intent better, and produce responses that feel closer to how an experienced developer would approach a task.

This is why a lot of users see it as a step up from tools like Copilot, especially for debugging or complex problem-solving.

Source: Reddit

The hype is real, but expectations are getting out of hand

At the same time, there’s noticeable pushback against how overhyped Claude Code has become. Developers acknowledge it’s powerful, but also stress that it’s not a magic solution, and still requires strong oversight, clear prompts, and real engineering judgment.

Source: Reddit

Claude Code pricing

Claude Code is bundled into Claude’s broader subscription tiers, and pricing scales based on usage intensity.

| Plan Name | Pricing | Included | Best For |
|---|---|---|---|
| Pro | ~$20/month | Access to Claude Code, Sonnet + Opus models, moderate usage | Individual developers |
| Max 5x | ~$100/month | Higher usage limits, better for larger codebases | Frequent users |
| Max 20x | ~$200/month | Maximum usage, priority access | Power users, teams |

Amazon Q Developer

Amazon Q Developer is designed less as a generic coding assistant and more as an AWS-native development companion. It doesn’t just help you write code; it helps you understand, build, and operate applications across the AWS ecosystem.

Compared to GitHub Copilot, which focuses on inline code suggestions, Amazon Q stands out when your work involves cloud architecture, infrastructure, and AWS-heavy workflows, where context goes beyond just code.

Features of Amazon Q Developer

Amazon Q Developer is built around the idea of covering the entire software development lifecycle, not just code generation.

AWS-aware context and architecture guidance

Amazon Q can answer questions about AWS services, architecture decisions, and your actual cloud resources, not just code snippets.

This means you can ask things like how to structure a service, debug infra issues, or optimize deployments, all within the same assistant.

Agentic workflows with slash commands

It supports agent-style commands like /dev, /review, /test, and /doc, allowing you to generate code, review it, create tests, and even document your project from prompts.

This moves it beyond autocomplete into something closer to a workflow executor.

Automated code reviews and security scanning

Amazon Q can analyze your codebase for vulnerabilities, anti-patterns, and quality issues, then suggest fixes automatically.

This is particularly valuable in production environments where correctness and security matter more than speed.

Codebase explanation and resource introspection

You can select code or even entire projects and ask Q to explain what’s happening, helping you understand unfamiliar systems quickly.

It also extends this to AWS resources, giving insight into what’s running and why.

End-to-end SDLC support (Code -> Deploy -> Optimize)

Amazon Q doesn’t stop at coding; it also helps with testing, documentation, debugging, and even optimizing cloud resources across the lifecycle.

This makes it especially useful for teams managing real production systems on AWS.

Biggest advantages and limitations of using Amazon Q Developer

| Advantages | Limitations |
|---|---|
| Deep AWS integration, understands cloud infra and services | Limited usefulness outside AWS ecosystem |
| Covers full SDLC, not just code generation | Can feel complex for simple coding tasks |
| Built-in security scanning and code quality checks | Setup and permissions can add friction |
| Agent-style workflows for automation (review, test, doc) | Output quality can vary vs top reasoning models |
| Strong for enterprise and production environments | Less intuitive than lightweight tools like Copilot |

Reviews from trusted platforms

Amazon Q Developer is still relatively new, but early reviews reflect strong enterprise interest.

What users are saying about Amazon Q Developer?

The sentiment around Amazon Q Developer is sharply divided, which actually makes it easier to understand where it fits.

It feels powerful inside AWS, but frustrating outside it

A number of developers express frustration when using Q outside AWS-heavy workflows. The tool can feel restrictive or less helpful compared to more general-purpose coding assistants, especially when the task isn’t tied to AWS services.

This highlights a key reality: Q is highly specialized, and that specialization doesn’t always translate well to broader development needs.

Source: Reddit

When it clicks, it’s actually next level for real workflows

On the other hand, developers working deeply within AWS ecosystems report that Q can significantly speed up workflows like debugging infrastructure, generating code tied to services, and automating repetitive tasks.

In these environments, it feels less like a coding assistant and more like an AWS-aware engineering partner.

Source: Reddit

Amazon Q Developer pricing

Amazon Q Developer uses a simple tiered model based on usage and capabilities.

| Plan Name | Pricing | Included | Best For |
|---|---|---|---|
| Free Tier | Free | Basic AI assistance, limited interactions | Individual developers exploring Q |
| Pro Tier | ~$19/month per user | Higher limits, full feature access | Regular AWS developers and teams |

Gemini Code Assist

Gemini Code Assist is Google’s answer to AI-assisted development, designed to work across IDEs, GitHub workflows, and Google Cloud environments. Instead of focusing purely on autocomplete like GitHub Copilot, it leans heavily into code review, context-aware assistance, and integration with Google’s ecosystem.

Where it stands out is in understanding code in context and improving quality, especially during pull requests and reviews, rather than just helping you write code faster.

Features of Gemini Code Assist

Gemini Code Assist is built around improving code quality, review workflows, and contextual understanding, not just generation.

AI-powered pull request reviews

Gemini can automatically review pull requests, summarize changes, and provide detailed feedback directly inside GitHub workflows.

You can also interact with it using commands like /gemini review, making it feel more like a reviewer than a generator.

Context-aware code understanding

It retrieves relevant context from your repository and uses it to give more accurate suggestions and explanations.

This allows it to go beyond single-file suggestions and actually reason about how changes impact the broader codebase.

Bug detection and code quality improvements

Gemini is particularly strong at spotting issues like performance problems, security risks, and poor coding practices.

This makes it useful not just for writing code, but for cleaning and improving existing codebases.

IDE and GitHub integration

It integrates directly into developer workflows, especially within GitHub and supported IDEs, allowing inline suggestions, reviews, and summaries without switching tools.

This reduces friction compared to tools that require separate interfaces.

Agent-like interactions in workflows

While not fully agentic like Claude Code, Gemini supports command-based interactions and automated review flows, bridging the gap between assistant and workflow tool.

It’s closer to a “review assistant with automation” than a full execution agent.

Biggest advantages and limitations of using Gemini Code Assist

| Advantages | Limitations |
|---|---|
| Strong at code reviews, PR summaries, and quality checks | Less powerful for deep reasoning compared to Claude |
| Integrates well with GitHub and Google Cloud workflows | Setup and ecosystem dependency can be restrictive |
| Good at detecting bugs, inefficiencies, and bad patterns | Not fully agentic, still requires manual steps |
| Context-aware suggestions across repositories | UI and UX still lag behind tools like Cursor |
| Useful for maintaining and improving codebases | Output quality can feel inconsistent at times |

Reviews from trusted platforms

Gemini Code Assist is still evolving, but early adoption reflects growing interest among teams using Google Cloud and GitHub.

Platform Name | Reviews in Stars |

4.4 / 5 | |

4.4 / 5 | |

2 / 5 |

What users are saying about Gemini Code Assist?

The sentiment around Gemini Code Assist is mixed, which is exactly why it’s worth understanding more closely.

It’s surprisingly good for reviews and catching issues

Developers consistently highlight that Gemini shines during code reviews. It’s particularly effective at identifying bad patterns, security concerns, and messy logic, making it a strong companion for maintaining code quality.

This is where it often outperforms more generation-focused tools.

Source: Reddit

It still feels clunky and not fully agentic

On the flip side, users point out that Gemini still requires a lot of manual interaction. Compared to more agentic tools, it can feel like you’re doing too much orchestration yourself instead of delegating tasks fully.

This gap becomes more noticeable when compared to tools like Claude Code or Cursor.

Source: Reddit

Gemini Code Assist pricing

Gemini Code Assist follows a tiered model depending on usage and environment.

| Plan Name | Pricing | Included | Best For |
|---|---|---|---|
| Free Tier | Free | Basic coding assistance, limited usage | Individual developers |
| Standard | $22.80/month | Higher limits, full code assist features | Regular developers |
| Enterprise | $54/month | Advanced controls, integrations with Google Cloud | Teams using Google Cloud ecosystem |

Cursor

Cursor is an AI-native code editor built on top of VS Code, designed to move beyond autocomplete into full codebase-level editing and task execution. Instead of assisting line-by-line like GitHub Copilot, Cursor is built to let you describe changes and have them applied across your project.

It’s best understood not as a Copilot alternative, but as a shift in workflow, where you’re guiding changes at a higher level instead of manually implementing them file by file.

Features of Cursor

Cursor is designed around deep codebase interaction and agent-style workflows, not just faster typing.

Codebase indexing and context awareness

Cursor builds an index of your repository, allowing it to understand relationships between files, functions, and modules.

This enables more accurate suggestions and explanations, especially when working across large or unfamiliar codebases.

Natural language code editing (Cmd + K / Edit Instructions)

You can highlight code or describe changes in plain English, and Cursor applies edits directly, including across multiple files.

This shifts the workflow from writing code manually to directing changes at a higher level of abstraction.

Agent mode for multi-step tasks

Cursor includes an agent mode that can plan and execute tasks like implementing features, fixing bugs, or updating code across files.

Instead of prompting repeatedly, you can delegate a task and let it iterate toward a working result.

Inline chat with file-aware context

Cursor’s chat is tightly integrated with your editor, meaning it understands the file you’re in, the surrounding code, and your broader project context.

This makes debugging and explaining code far more effective compared to standalone chat tools.

Terminal integration and command execution

Cursor can generate and run terminal commands directly within the editor, helping with workflows like builds, installs, and debugging.

This reduces context switching and keeps the entire development loop inside one environment.

Biggest advantages and limitations of using Cursor

| Advantages | Limitations |
|---|---|
| Deep understanding of entire codebases, not just snippets | Requires switching from your existing IDE workflow |
| Strong multi-file editing and refactoring capabilities | Can make incorrect changes across multiple files if unchecked |
| Agent-style workflows reduce repetitive manual work | Needs careful prompting for best results |
| Combines editing, chat, and execution in one tool | Heavier compared to lightweight assistants |
| Significantly faster for large-scale changes | Learning curve for new users |

Reviews from trusted platforms

Cursor has rapidly gained popularity, especially among developers working on larger projects.

Platform Name | Reviews in Stars |

4.5 / 5 | |

1.7 / 5 | |

5 / 5 |

What users are saying about Cursor?

The feedback around Cursor is largely positive, but grounded in real usage rather than hype.

It’s incredibly powerful once you adapt your workflow

Developers who’ve spent a few months with Cursor highlight that the biggest value comes after changing how you work. Instead of writing everything manually, you start delegating edits, refactors, and feature work, which significantly speeds up development.

The tool feels most effective when you lean into its agent-style capabilities rather than using it like a traditional IDE.

Source: Reddit

It’s great, but not always enough to fully replace alternatives

At the same time, some developers question whether Cursor alone is enough, especially when compared to tools like Claude Code for deeper reasoning tasks.

While Cursor excels at editing and workflow integration, it may still need to be paired with stronger reasoning models for more complex problem-solving.

Source: Reddit

Cursor pricing

Cursor offers a tiered pricing model based on usage limits and access to more advanced models and capabilities.

| Plan Name | Pricing | Included | Best For |
|---|---|---|---|
| Hobby | Free | No credit card required, limited agent requests, limited tab completions | Beginners and developers exploring Cursor |
| Pro | $20/month | Extended agent limits, access to frontier models, MCPs, skills, hooks, cloud agents | Regular developers using Cursor daily |
| Pro+ | $60/month | Everything in Pro, plus 3x usage across OpenAI, Claude, Gemini models | Power users working on larger projects |
| Ultra | $200/month | Everything in Pro, plus 20x usage across models, priority access to new features | Heavy users and teams needing maximum capacity |
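To compare the paid Cursor tiers on a like-for-like basis, one rough approach is to divide each listed price by its usage multiple. The sketch below uses only the figures from the pricing table. Treating Pro as a 1x baseline and assuming usage scales linearly with the stated multiple are both assumptions, and the function and variable names are illustrative.

```python
# Prices (USD/month) and usage multiples taken from the pricing table above.
# Assumption: Pro is the 1x baseline that "3x" and "20x" are measured against.
CURSOR_PLANS = {
    "Pro": (20, 1),
    "Pro+": (60, 3),
    "Ultra": (200, 20),
}


def price_per_usage_unit(price_usd: float, usage_multiple: float) -> float:
    """Return the monthly price divided by the usage multiple."""
    return price_usd / usage_multiple


for plan, (price, multiple) in CURSOR_PLANS.items():
    unit_price = price_per_usage_unit(price, multiple)
    print(f"{plan}: ${unit_price:.2f} per Pro-equivalent unit of usage")
```

Under these assumptions, Pro and Pro+ both work out to $20.00 per unit of usage, while Ultra drops to $10.00, so the per-unit discount only appears at the top tier.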


Windsurf

Windsurf is an AI-native code editor built around agentic workflows and continuous development flow. Unlike GitHub Copilot, which focuses on inline suggestions, Windsurf is designed to plan, generate, debug, and iterate on code in a loop.

At its core is “Cascade,” an AI agent that can think ahead, fix issues, and continue working until a task is complete, which makes it feel closer to an execution engine than a suggestion tool.

Features of Windsurf

Windsurf is built around keeping developers in a continuous flow state, where the AI doesn’t just assist but actively progresses tasks.

Cascade agent for end-to-end execution

Cascade is Windsurf’s core agent that can generate code, fix errors, and iterate until the task works.

Instead of stopping at suggestions, it keeps running through steps, debugging and refining outputs, which makes it feel more autonomous than traditional assistants.

Memory and workflow awareness

Windsurf stores “memories” about your codebase, patterns, and workflows to improve future outputs.

This allows it to adapt over time, making suggestions that align with your project structure and coding style.

Supercomplete (Intent-based autocomplete)

Unlike standard autocomplete, Windsurf predicts intent, not just the next token.

This enables it to generate more complete and contextually relevant code blocks rather than partial suggestions.

MCP and tool integrations

Windsurf supports MCP (Model Context Protocol), allowing integration with tools like Slack, GitHub, and databases.

This expands it beyond coding into connected workflows, where it can interact with external systems.

Built-in debugging and iteration loop

Windsurf can run code, detect errors, and automatically attempt fixes in a loop until the output works.

This reduces the manual back-and-forth typically required when debugging with AI tools.
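The run, detect, and fix cycle described here can be pictured with a small loop. This is only a conceptual sketch, not Windsurf's implementation: `run_snippet`, `fix_loop`, and `toy_fixer` are hypothetical names, and the "fixer" is a hard-coded stand-in for whatever a real agent would do with a model.

```python
from typing import Callable, Optional, Tuple


def run_snippet(source: str) -> Optional[str]:
    """Execute Python source; return an error description, or None on success."""
    try:
        exec(compile(source, "<snippet>", "exec"), {})
        return None
    except Exception as exc:
        return f"{type(exc).__name__}: {exc}"


def fix_loop(
    source: str,
    propose_fix: Callable[[str, str], str],
    max_attempts: int = 3,
) -> Tuple[str, int]:
    """Run the code; on failure, ask propose_fix for a revision and retry."""
    for attempt in range(1, max_attempts + 1):
        error = run_snippet(source)
        if error is None:
            return source, attempt
        source = propose_fix(source, error)
    raise RuntimeError(f"still failing after {max_attempts} attempts")


def toy_fixer(source: str, error: str) -> str:
    """Hypothetical stand-in for a model: define the name a NameError reports."""
    if error.startswith("NameError"):
        missing = error.split("'")[1]
        return f"{missing} = 0\n" + source
    return source


# The first run fails because `total` is undefined; the second run succeeds.
fixed_source, attempts = fix_loop("result = total + 1", toy_fixer)
print(attempts)
print(fixed_source)
```

The `max_attempts` cap matters in practice: without a bound, an agent that keeps proposing the wrong fix would loop indefinitely, which is one reason such tools still need supervision.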

Biggest advantages and limitations of using Windsurf

| Advantages | Limitations |
|---|---|
| Strong agentic workflows that go beyond autocomplete | Can feel less predictable due to autonomous behavior |
| Iterative debugging and execution loop | Still requires supervision for complex systems |
| Memory-based improvements over time | Learning curve to fully leverage capabilities |
| More affordable than some competitors (like Cursor) | Not as strong in deep reasoning tasks as Claude |
| Good balance of speed, cost, and capability | Credit-based pricing can require management |

Reviews from trusted platforms

Windsurf is still growing in adoption, but early traction reflects strong interest among developers.

Platform Name | Reviews in Stars |

4.2 / 5 | |

1.6 / 5 | |

4.7 / 5 |

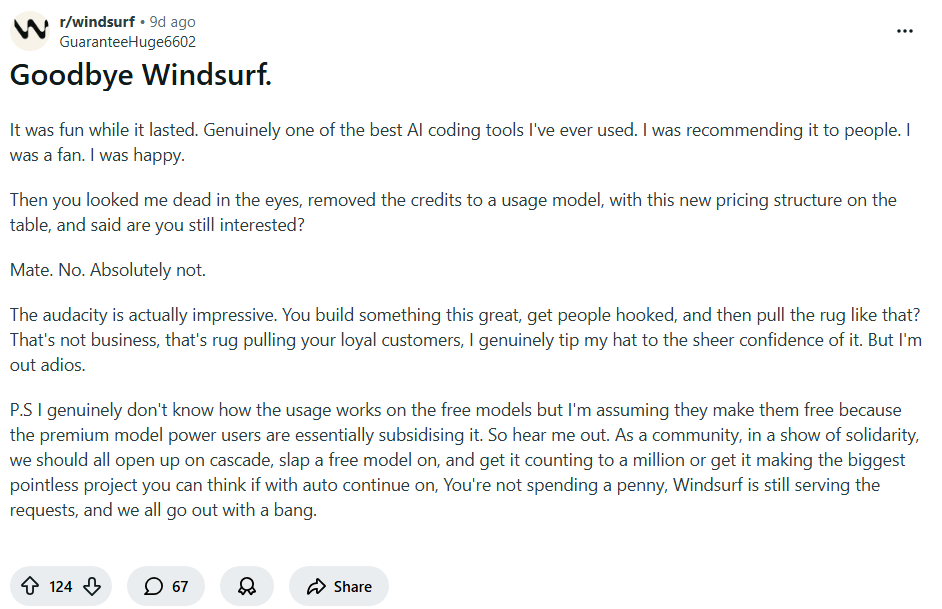

What users are saying about Windsurf?

The sentiment around Windsurf is clearly split, but in a way that actually highlights where it works best.

When it works, it feels insanely fast and capable

Developers highlight that Windsurf can feel extremely powerful, especially when using its agent workflows properly.

It can generate, fix, and iterate quickly, making it feel like a high-speed development partner rather than a simple assistant.

Source: Reddit

It can be frustrating and inconsistent at times

There’s strong frustration from some users around the agent's reliability and experience.

The main issue isn’t capability; it’s consistency. When the agent goes off track or doesn’t behave as expected, it can break flow instead of improving it. Users have also expressed considerable disappointment with the new pricing policy.

Source: Reddit

Windsurf pricing

Windsurf uses a credit-based pricing model, where usage is tied to prompt credits consumed by AI interactions.

| Plan Name | Pricing | Included | Best For |
|---|---|---|---|
| Free | $0/month | Light quota for agent usage, limited model availability, unlimited inline edits, unlimited tab completions | Beginners and developers testing Windsurf |
| Pro | $20/month | Increased quotas, access to frontier OpenAI, Claude, and Gemini models, full model availability, option to purchase extra usage at API pricing, 2-week free trial | Regular developers using Windsurf daily |
| Max | $200/month | Everything in Pro, plus significantly higher usage quotas | Power users and teams with heavy usage |

Augment Code

Augment Code is built for developers working on large, production-grade codebases, where understanding context is more important than generating snippets. Instead of behaving like a lightweight autocomplete tool such as GitHub Copilot, it focuses on deep codebase comprehension, review workflows, and structured development tasks.

Its core strength lies in helping teams ship faster by indexing and reasoning over entire repositories, making it especially useful for scaling systems rather than just writing functions.

Features of Augment Code

Augment Code is designed around context depth, structured workflows, and production-readiness, not just code generation.

Context engine for large codebases

Augment’s Context Engine is built to understand entire repositories, including dependencies, relationships, and architecture across files.

This allows it to provide more accurate suggestions, explanations, and edits, especially in complex systems where most tools lose context quickly.

AI-powered code review workflows

Augment can analyze pull requests and existing code to identify issues, suggest improvements, and enforce better coding practices.

It goes beyond surface-level suggestions by focusing on maintainability, correctness, and production readiness.

MCP and native tool integrations

It supports MCP (Model Context Protocol) and integrates with development tools, enabling interaction with external systems and workflows.

This makes it more than just a coding assistant; it becomes part of a broader development pipeline.

Credit-based usage with task flexibility

Augment uses a credit system that can be applied across different tasks, including generation, review, and analysis.

This gives teams flexibility in how they allocate usage, especially when prioritizing high-value workflows like code reviews.

Security and compliance-first design

Augment includes enterprise-grade security features like SOC 2 Type II compliance and strict no-training policies on your code.

This makes it suitable for teams handling sensitive codebases where data privacy is a critical requirement.

Biggest advantages and limitations of using Augment Code

| Advantages | Limitations |
|---|---|
| Strong understanding of large and complex codebases | Credit-based pricing can require careful management |
| Excellent for code reviews and production workflows | Less focused on fast autocomplete-style coding |
| Built with enterprise security and compliance in mind | Setup and onboarding can take time |
| Flexible usage across multiple development tasks | Not as intuitive for beginners |
| Integrates into broader development pipelines | Smaller ecosystem compared to bigger players |

Reviews from trusted platforms

Augment Code is still relatively niche, but it is gaining traction among teams working on production systems.

Platform Name | Reviews in Stars |

2.8 / 5 | |

4.8 / 5 | |

4.6 / 5 |

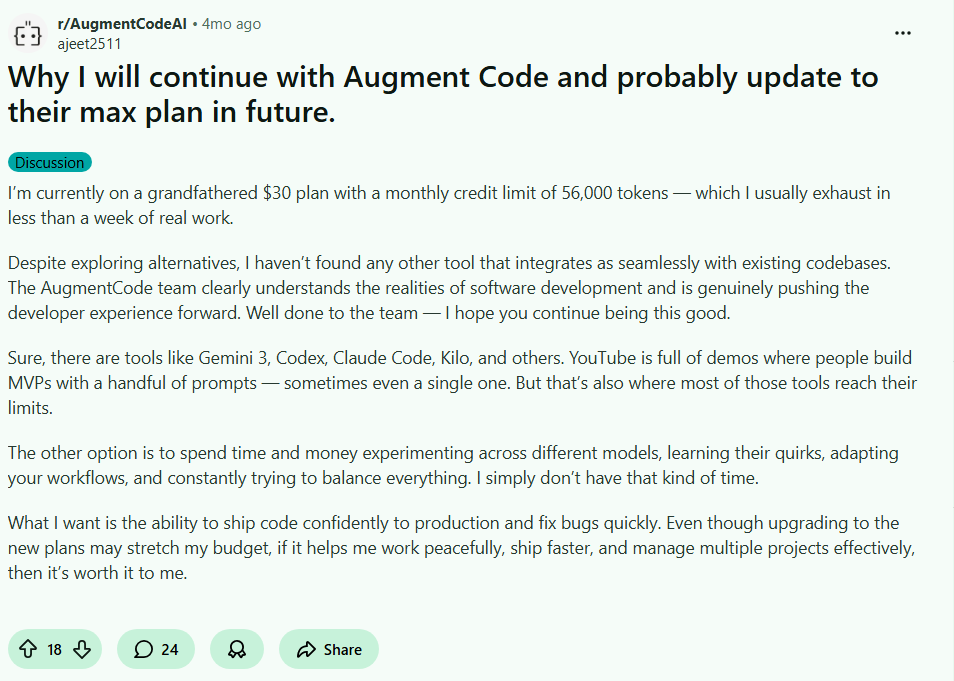

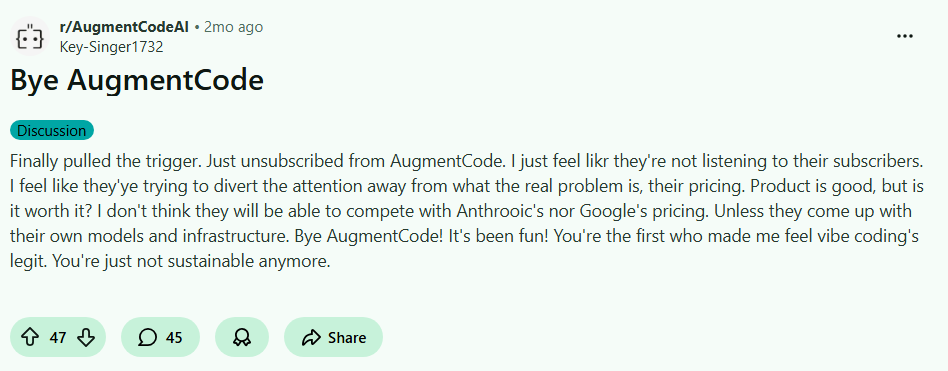

What users are saying about Augment Code?

The sentiment around Augment Code is mixed but practical, reflecting real usage in development workflows.

It’s great for serious development, not just quick coding

Developers highlight that Augment shines when working on real projects, especially when understanding large codebases and maintaining quality matters.

It’s seen as a tool that helps you ship better code, not just faster code, which makes it more valuable in production environments.

Source: Reddit

It’s not for everyone, especially if you want something lightweight

Some users express frustration, mainly around the pricing complexity and how it fits into their workflow.

If you’re looking for something simple and instant like autocomplete, Augment can feel heavier and less intuitive.

Source: Reddit

Augment Code pricing

Augment Code uses a credit-based pricing model with tiers designed for individuals, teams, and enterprises.

| Plan Name | Pricing | Included | Best For |
|---|---|---|---|
| Indie | $20/month | 40,000 credits, Context Engine, MCP & native tools, SOC 2 Type II, auto top-up credits, no AI training, credits usable for code review | Individual developers using AI occasionally |
| Standard | $60/month per developer | Everything in Indie, plus 130,000 credits | Small teams shipping to production |
| Max | $200/month per developer | Everything in Standard, plus 450,000 credits | High-demand teams with heavy usage |
| Enterprise | Custom | Custom pricing, bespoke credit limits, Slack integration, SSO (OIDC, SCIM), SOC 2 & security reports, CMEK, ISO compliance, dedicated support, no AI training | Large organizations with strict security needs |
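Because Augment's tiers bundle different credit amounts, a quick way to compare them is the effective price per 1,000 credits. The sketch below uses only the prices and credit counts from the table. It assumes each tier's listed credits are the full monthly allowance, and it ignores auto top-ups and the custom Enterprise tier. The names are illustrative.

```python
# Monthly price (USD) and included credits, copied from the pricing table above.
# Standard and Max are priced per developer; Enterprise is custom and omitted.
AUGMENT_TIERS = {
    "Indie": (20, 40_000),
    "Standard": (60, 130_000),
    "Max": (200, 450_000),
}


def usd_per_thousand_credits(price_usd: float, credits: int) -> float:
    """Return the effective price of 1,000 credits at a given tier."""
    return price_usd / credits * 1000


for tier, (price, credits) in AUGMENT_TIERS.items():
    rate = usd_per_thousand_credits(price, credits)
    print(f"{tier}: ${rate:.2f} per 1,000 credits")
```

On these figures, Indie comes to $0.50 per 1,000 credits, Standard to about $0.46, and Max to about $0.44, so the higher tiers are only modestly cheaper per credit.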

Qodo

Qodo is built around one core idea: code quality over code generation. While tools like GitHub Copilot focus on helping you write code faster, Qodo focuses on helping you review, validate, and ship reliable code at scale.

It positions itself more as an AI-powered code review and quality platform, making it especially relevant for teams working in production environments where correctness, testing, and maintainability matter more than speed.

Features of Qodo

Qodo is designed around automated code review, validation workflows, and deep codebase awareness, rather than simple generation.

AI-powered pull request code reviews

Qodo provides automated PR reviews that analyze code for bugs, security issues, and maintainability concerns before merging.

It doesn’t just flag issues; it also suggests improvements, helping teams maintain consistent code quality across contributors.

IDE plugin for local code review

Qodo integrates directly into your IDE, allowing developers to review and validate code locally before pushing changes.

This shifts quality checks earlier in the development cycle, reducing the number of issues that reach pull requests.

CLI tool for agentic quality workflows

Qodo offers a CLI that enables agent-like workflows for reviewing, validating, and analyzing code programmatically.

This is particularly useful for teams that want to integrate AI-driven quality checks into CI/CD pipelines.

Context engine for multi-repo awareness

At the enterprise level, Qodo includes a context engine that understands relationships across multiple repositories.

This allows it to catch issues that span services or modules, which is critical in microservices architectures.

Privacy-first approach with no data retention

Qodo emphasizes security with a no-data-retention policy and options for self-hosted and on-prem deployments.

This makes it suitable for organizations handling sensitive codebases or operating under strict compliance requirements.

Biggest advantages and limitations of using Qodo

Advantages | Limitations |

Strong focus on code quality and validation workflows | Not designed for fast code generation or autocomplete |

Excellent PR review automation with actionable insights | Less useful for early-stage development or prototyping |

Works across IDE, CLI, and CI/CD pipelines | Requires integration into existing workflows |

Enterprise-grade privacy and deployment options | Smaller ecosystem compared to major AI tools |

Multi-repo awareness for complex architectures | Can feel specialized compared to general AI assistants |

Reviews from trusted platforms

Qodo is gaining traction among teams focused on code quality and production reliability.

Platform Name | Reviews in Stars |

4.8 / 5 | |

4.5 / 5 | |

4.3 / 5 |

Qodo pricing

Qodo follows a tiered model focused on PR-based usage, credits, and enterprise-grade capabilities.

Plan Name | Pricing | Included | Best For |

Developer | $0 | 30 PRs/month (promo), state-of-the-art PR review, IDE plugin, CLI tool, 75 credits for IDE & CLI, community support | Individual developers and early usage |

Teams | $38/user/month | 20 PRs/user/month (promo: unlimited PRs), PR review, IDE plugin, CLI tool, 2,500 credits, private support, no data retention | Teams collaborating on codebases |

Enterprise | Custom | PR review, IDE plugin, CLI, context engine (multi-repo), enterprise dashboard, admin portal, MCP tools, SSO, on-prem deployment, self-hosted models, priority support | Large organizations with advanced security and scale needs |

OpenAI Codex

OpenAI Codex is not a traditional IDE plugin or assistant; it’s a model-level coding system that powers workflows across CLI, APIs, IDE extensions, and cloud environments. Unlike tools such as GitHub Copilot that focus on inline suggestions, Codex is designed to execute coding tasks across environments, including automation, reviews, and integrations.

It’s best understood as a programmable coding layer, meaning you can embed it into your own workflows rather than relying on a fixed interface.

Features of OpenAI Codex

Codex is built around flexibility, automation, and integration across environments, rather than a single UI experience.

Multi-environment access (CLI, IDE, Web, Mobile)

Codex is accessible across multiple surfaces including the web, CLI, IDE extensions, and even mobile, allowing developers to interact with it wherever they work.

This flexibility makes it adaptable to different workflows, from quick edits to deeper development sessions.

Cloud-based code execution and integrations

Codex supports cloud-based workflows such as automated code reviews and integrations with tools like Slack.

This enables asynchronous development workflows where tasks can be triggered and processed outside the local environment.

Advanced model variants for different tasks

Codex includes multiple model variants like GPT-5.4, GPT-5.4-Mini, GPT-5.3-Codex and GPT-5.3-Codex-Spark, each optimized for different use cases such as fast iteration or deeper reasoning.

This allows developers to balance speed, cost, and performance depending on the task.

Programmable via API and SDK

Codex can be used programmatically through APIs and SDKs, enabling automation in CI/CD pipelines, internal tools, and custom developer environments.

This makes it particularly powerful for teams building their own AI-driven development workflows.

Scalable code review and task automation

Codex can handle large-scale code review tasks, automate repetitive processes, and operate across cloud and local environments.

This shifts it from being just a coding assistant to a system that can support broader engineering operations.

Biggest advantages and limitations of using OpenAI Codex

Advantages | Limitations |

Highly flexible, works across CLI, API, IDE, and cloud | Not a plug-and-play tool, requires setup |

Strong for automation and programmable workflows | Lacks a unified native IDE experience |

Multiple model options for different use cases | Can be inconsistent depending on model choice |

Scales well for teams and infrastructure-level use | UI/UX tasks can be weaker compared to competitors |

Integrates into existing systems and pipelines | Requires technical setup for full value |

Reviews from trusted platforms

Codex adoption is closely tied to ChatGPT usage, with strong feedback from developers using it in real workflows.

Platform Name | Reviews in Stars |

4.6 / 5 | |

5 / 5 |

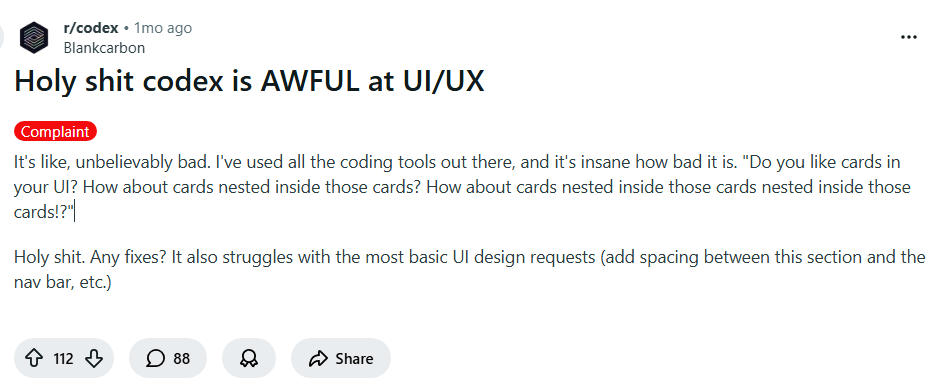

What are users saying about OpenAI Codex?

The sentiment around Codex is split, but in a way that clearly reflects where it excels and where it struggles.

It’s insanely powerful for coding workflows

Developers highlight that Codex can handle complex coding tasks, automate workflows, and significantly speed up development when used effectively.

It’s often described as a major step forward in terms of capability, especially for backend logic and structured tasks.

Source: Reddit

It struggles with UI/UX and front-end intuition

Users point out that Codex can perform poorly when dealing with UI/UX decisions or front-end design logic.

This highlights a clear gap: while Codex is strong in logic and structure, that strength doesn’t always carry over to visual or user-experience-heavy tasks.

Source: Reddit

OpenAI Codex pricing

Codex is bundled within ChatGPT plans and also available via API-based usage for programmatic workflows.

Plan Name | Pricing | Included | Best For |

Plus | $20/month | Codex access across web, CLI, IDE, iOS, cloud integrations (code review, Slack), GPT-5.4 & Codex models, flexible usage via credits | Individual developers coding weekly |

Pro | $200/month | Everything in Plus, priority processing, Codex-Spark model, 6x higher usage limits, 10x more cloud code reviews | Full-time developers relying on Codex daily |

Business | $30/user/month | Everything in Plus, larger compute environments, secure workspace, SSO, MFA, no training on business data | Startups and growing teams |

Enterprise & Edu | Custom | Everything in Business, advanced security (SCIM, RBAC, audit logs), compliance API, data controls | Large organizations and institutions |

API Key | Usage-based | Codex via CLI, SDK, IDE extension, pay-per-token pricing, automation in CI/CD | Developers building custom workflows |

GitLab Duo

GitLab Duo is an AI layer embedded directly into the GitLab ecosystem, designed to assist across the entire DevOps lifecycle, not just code generation. Unlike GitHub Copilot, which focuses primarily on writing code, GitLab Duo operates across planning, coding, reviewing, securing, and deploying software.

Its core advantage lies in being deeply integrated into GitLab’s platform, making it especially powerful for teams already managing CI/CD pipelines, repositories, and project workflows inside GitLab.

Features of GitLab Duo

GitLab Duo is built to support end-to-end software development workflows, extending AI beyond coding into DevOps and security.

AI across the entire DevOps lifecycle

GitLab Duo provides assistance across multiple stages including planning, coding, testing, security, and deployment.

This means developers can use AI not just for writing code, but also for managing issues, reviewing changes, and improving delivery workflows within a single platform.

AI-powered code suggestions and explanations

It offers code generation, explanations, and refactoring support directly within the GitLab environment.

This helps developers understand unfamiliar code and improve existing implementations without switching tools.

Automated code review and merge request insights

GitLab Duo can summarize merge requests, highlight key changes, and suggest improvements during reviews.

This reduces review time and helps teams maintain consistency across contributions.

Built-in security and vulnerability detection

It integrates with GitLab’s security features to detect vulnerabilities, analyze risks, and suggest fixes.

This makes it particularly useful for teams prioritizing secure development and compliance.

Integration with CI/CD and project workflows

Because it’s native to GitLab, Duo connects directly with CI/CD pipelines, issue tracking, and project management tools.

This allows AI assistance to extend into deployment and operational workflows, not just development.

Biggest advantages and limitations of using GitLab Duo

Advantages | Limitations |

Deep integration across DevOps lifecycle | Best experience only within GitLab ecosystem |

Strong CI/CD and workflow awareness | Less flexible outside GitLab workflows |

Built-in security and compliance features | Not as strong in pure code generation as top models |

Useful for teams managing full pipelines | Requires existing GitLab adoption |

Reduces tool switching across development stages | Can feel complex for smaller teams |

Reviews from trusted platforms

GitLab Duo benefits from GitLab’s existing user base, especially among DevOps-focused teams.

Platform Name | Reviews in Stars |

4.5 / 5 | |

4.4 / 5 | |

8.8 / 10 |

What are users saying about GitLab Duo?

The sentiment around GitLab Duo reflects the broader perception of GitLab itself: powerful but opinionated.

It’s great if you’re already fully inside GitLab

Users highlight that GitLab’s biggest strength is how everything is integrated into one platform, from code to CI/CD to deployment.

When combined with Duo, this creates a streamlined workflow where AI enhances existing processes rather than adding another tool.

Source: Reddit

It can feel overwhelming and frustrating at times

Some developers express frustration with usability and experience, especially when things don’t work as expected.

The complexity of the platform can sometimes make interactions with Duo feel heavier compared to simpler tools.

Source: Reddit

GitLab Duo pricing

GitLab Duo is bundled within GitLab plans, with usage tied to included credits for the Duo Agent Platform.

Plan Name | Pricing | Included | Best For |

Free | $0/user/month | Source code management, CI/CD, 5 users, 400 compute minutes, 10 GiB storage | Individuals and open-source contributors |

Premium | Custom (contact sales) | Everything in Free, unlimited users, 10,000 compute minutes, advanced CI/CD, project management, priority support, includes $12/user/month in GitLab Duo credits | Growing teams scaling development |

Ultimate | Custom (contact sales) | Everything in Premium, advanced security, compliance, vulnerability management, 50,000 compute minutes, includes $24/user/month in GitLab Duo credits | Enterprises with strict security needs |

JetBrains AI

JetBrains AI is an AI layer built directly into JetBrains IDEs, designed to enhance development without changing how developers already work. Unlike GitHub Copilot, which operates across editors, JetBrains AI is deeply integrated into tools like IntelliJ IDEA, PyCharm, and WebStorm.

Its core strength is not just code generation, but context-aware assistance within a mature IDE ecosystem, making it especially useful for developers already committed to JetBrains workflows.

Features of JetBrains AI

JetBrains AI is designed to augment the IDE experience, combining local intelligence with cloud-powered capabilities.

Deep IDE-native integration

JetBrains AI is embedded directly into JetBrains IDEs, meaning it understands your project structure, files, and workflows without additional setup.

This allows it to provide context-aware suggestions, navigation help, and assistance that feels native rather than layered on top.

AI chat for code understanding and generation

It includes an AI chat interface that can explain code, generate snippets, and assist with debugging directly inside the IDE.

Because it has access to project context, responses are more relevant compared to standalone chat tools.

Intelligent code completion and refactoring

JetBrains AI enhances code completion with AI-powered suggestions and can assist in refactoring existing code.

This helps improve both development speed and code quality, especially in complex projects.

Local and cloud AI hybrid model

JetBrains AI combines local processing with cloud-based models, allowing some operations to run locally while others use more powerful remote models.

This balance helps with performance, privacy, and flexibility depending on the task.

Junie AI agent (experimental)

JetBrains is introducing “Junie,” an AI coding agent designed to handle more complex, multi-step development tasks.

While still evolving, it signals a move toward more agentic workflows within the JetBrains ecosystem.

Biggest advantages and limitations of using JetBrains AI

Advantages | Limitations |

Seamless integration within JetBrains IDEs | Limited value outside JetBrains ecosystem |

Strong context awareness due to IDE-level access | Credit-based usage can feel restrictive |

Combines local and cloud AI capabilities | Less powerful than top standalone models |

Enhances existing workflows without disruption | Agent capabilities still evolving |

Good balance of productivity and control | Requires JetBrains IDE adoption |

Reviews from trusted platforms

JetBrains AI adoption is closely tied to the popularity of JetBrains IDEs among developers.

Platform Name | Reviews in Stars |

4.5 / 5 | |

2.2 / 5 | |

4.2 / 5 |

JetBrains AI pricing

JetBrains AI uses a credit-based pricing model, with tiers based on usage limits and access to advanced capabilities.

Plan Name | Pricing | Included | Best For |

AI Free | Free | Unlimited code completion, local AI support, 3 AI credits per 30 days, small cloud quota, 30-day AI Pro trial | Developers exploring AI features |

AI Pro | $10/month per user | 10 AI credits per 30 days, AI chat, coding assistance, access to Junie (trial), top-up options | Individual developers using AI regularly |

AI Ultimate | $30/month per user | 35 AI credits per 30 days, higher cloud usage, recommended for Junie workflows, top-ups available | Power users working with AI agents |

AI Enterprise | $60/month per user (billed annually) | Maximum credits, enterprise security, custom AI integrations | Organizations with advanced requirements |

Tabnine

Tabnine is one of the earliest AI coding assistants, but its positioning today is very different from tools like GitHub Copilot. Instead of competing purely on generation quality, Tabnine focuses on privacy, control, and enterprise-ready deployments.

It’s built for teams that want AI assistance without sending code externally, making it especially relevant for organizations with strict security, compliance, or on-prem requirements.

Features of Tabnine

Tabnine is designed around secure AI adoption, controlled environments, and enterprise workflows, rather than pushing cutting-edge generation.

Private and flexible deployment options

Tabnine supports SaaS, VPC, on-prem, and fully air-gapped deployments, giving teams full control over where their code is processed.

This makes it one of the few tools that can operate in highly regulated environments without compromising security policies.

AI code completions and IDE chat

It provides code completions for single lines and full functions, along with an AI chat interface integrated into IDEs.

These features support everyday development tasks, including writing, understanding, and refactoring code within familiar workflows.

Multi-LLM support across providers

Tabnine integrates with multiple leading models from providers like OpenAI, Anthropic, Google, and others.

This allows teams to choose models based on performance, cost, or compliance requirements instead of being locked into one ecosystem.

Enterprise-grade security and compliance

Tabnine ensures zero code retention, no training on user code, and full encryption across environments.

It also meets standards like GDPR, SOC 2, and ISO 27001, making it suitable for organizations with strict compliance needs.

Governance, control, and observability

Teams can define rules, control access to models, track usage, and audit AI-generated outputs.

This level of governance is critical for enterprises that need visibility and control over how AI is used across teams.

Biggest advantages and limitations of using Tabnine

Advantages | Limitations |

Strong focus on privacy and secure deployments | Weaker reasoning compared to newer AI tools |

Flexible deployment (on-prem, air-gapped, VPC) | Not as advanced in agentic workflows |

Multi-LLM support for flexibility and control | Limited innovation pace vs competitors |

Enterprise-grade governance and compliance | Less powerful for complex coding tasks |

Works across IDEs and environments | Feels outdated compared to newer AI-native tools |

Reviews from trusted platforms

Tabnine has a long-standing presence, especially among enterprise and security-focused teams.

Platform Name | Reviews in Stars |

4.1 / 5 | |

4.6 / 5 | |

2.2 / 5 |

Tabnine pricing

Tabnine offers two primary plans focused on secure deployment and enterprise workflows, with optional add-ons for advanced usage.

Plan Name | Pricing | Included | Best For |

Code Assistant Platform | $39/user/month | AI completions, IDE chat, multi-LLM support, works across IDEs, Jira integration, secure deployment (SaaS/VPC/on-prem), zero code retention, compliance standards | Teams needing secure AI coding assistance |

Agentic Platform | $59/user/month | Everything in Code Assistant, plus agentic workflows, context engine, MCP integrations, CLI agent, org-level awareness, advanced governance and analytics | Teams automating workflows with AI agents |

Sourcegraph Cody

Sourcegraph Cody is an AI coding assistant built specifically for understanding and navigating large codebases, rather than just generating code. Unlike GitHub Copilot, which focuses on inline suggestions, Cody is designed to help developers search, analyze, and reason across entire repositories.

It’s especially valuable for teams working with complex systems, legacy code, or multi-repo architectures where understanding context is the biggest bottleneck.

Features of Sourcegraph Cody

Cody is built around codebase intelligence, search, and deep context awareness, making it more of a navigation and reasoning tool than a pure generator.

Deep codebase search and context retrieval

Cody leverages Sourcegraph’s search capabilities to pull relevant code, symbols, and references across repositories.

This allows it to answer questions and generate outputs based on real project context, not just isolated snippets.

AI chat with repository awareness

Cody includes an AI chat interface that understands your codebase and can explain functions, debug issues, and suggest improvements.

Because it uses indexed code, responses are grounded in actual project structure rather than generic assumptions.

Cross-repository and multi-codebase understanding

Cody can connect to multiple repositories and code hosts, enabling it to reason across services and systems.

This is particularly useful in microservices architectures where logic is distributed across different codebases.

Code navigation and symbol-level insights

It supports features like symbol search, code navigation, and references, helping developers trace how different parts of the system interact.

This reduces the time spent manually exploring unfamiliar or complex code.

Integration with enterprise code search platform

Cody is tightly integrated with Sourcegraph’s enterprise search platform, which includes features like batch changes, code insights, and monitoring.

This allows teams to combine AI assistance with powerful search and analytics capabilities.

Biggest advantages and limitations of using Sourcegraph Cody

Advantages | Limitations |

Excellent for understanding large and complex codebases | Not focused on fast code generation |

Strong search and context retrieval capabilities | Requires setup with Sourcegraph ecosystem |

Works across multiple repositories and services | Less useful for small projects |

Useful for debugging and code exploration | UI can feel complex for new users |

Integrates with enterprise workflows and tooling | Not as agentic as newer AI tools |

Reviews from trusted platforms

Sourcegraph Cody is widely used among teams dealing with large-scale codebases and enterprise environments.

Platform Name | Reviews in Stars |

4.4 / 5 | |

4.7 / 5 | |

4.1 / 5 |

Sourcegraph Cody pricing

Sourcegraph Cody is part of the broader Sourcegraph platform, with pricing centered around enterprise-grade code search and scalability.

Plan Name | Pricing | Included | Best For |

Enterprise Search | $49/user/month | Deep search, code search, symbol search, batch changes, code insights, navigation, monitoring, single-tenant cloud deployment, multi-language support, enterprise security, remote codebase context, 24x5 support | Teams working with large, complex, multi-repo codebases |

What should you look for in GitHub Copilot alternatives?

Choosing a Copilot alternative isn’t about finding “another autocomplete tool.” That category has already peaked. The real decision today is about how much of the development process you want to delegate.

Here’s what you need to know when you evaluate these tools in 2026:

Depth of context, not just suggestions

Most tools can complete a function. Very few can understand why that function exists within your system.

Look for tools that:

Understand entire codebases, not just open files

Can trace dependencies, flows, and architecture

Give answers grounded in your actual project

This is the difference between writing faster and thinking better with AI.

Multi-file and system-level editing

Modern development rarely happens in one file. Real changes span APIs, services, schemas, and configs.

The best alternatives should:

Edit across multiple files reliably

Maintain consistency across changes

Handle refactors without breaking things

If a tool can’t do this, it’s still stuck in the Copilot era.

Agent capabilities vs passive assistance

Autocomplete is reactive. Agents are proactive.

Strong tools today:

Plan tasks

Execute changes

Iterate until something works

This is where tools like Cursor or Claude Code start to feel fundamentally different. You’re no longer prompting line by line; you’re assigning work.
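The plan, execute, and iterate cycle can be sketched in a few lines. This is a toy illustration, not how Cursor, Claude Code, or any other tool is actually implemented: `run_agent`, `plan`, `execute`, and `check` are all hypothetical names, and in a real agent `check` would typically mean running tests or a build.

```python
from typing import Callable, List


def run_agent(
    plan: Callable[[str], List[str]],
    execute: Callable[[str], None],
    check: Callable[[], bool],
    goal: str,
    max_rounds: int = 3,
) -> bool:
    """Plan steps for a goal, execute them, and repeat until the check passes."""
    for _ in range(max_rounds):
        for step in plan(goal):   # 1. plan the task as a list of steps
            execute(step)         # 2. execute each change
        if check():               # 3. stop as soon as the result works
            return True
    return False                  # give up after max_rounds attempts


# Toy stand-ins: "executing" just records the step, and the check
# passes once two edits have been applied.
applied: List[str] = []
succeeded = run_agent(
    plan=lambda goal: [f"edit for: {goal}"],
    execute=applied.append,
    check=lambda: len(applied) >= 2,
    goal="rename the user_id field",
)
print(succeeded, len(applied))
```

The bounded `max_rounds` is a deliberate choice in this sketch: an agent that iterates "until something works" still needs a stopping condition for the case where nothing does.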

Workflow integration (IDE, CLI, CI/CD)

The best tools don’t just sit in your editor; they plug into your workflow.

Look for:

IDE + terminal support

CI/CD or PR integration

Ability to run, test, and deploy

If you’re constantly switching tools, you’re losing the advantage.

Security, privacy, and deployment flexibility

For teams, this is non-negotiable.

Key considerations:

On-prem or VPC deployment

Zero-code-retention policies

Compliance (SOC 2, GDPR, etc.)

This is where tools like Tabnine and enterprise platforms differentiate heavily.

Pricing model vs actual usage

Most developers underestimate this.

Some tools charge:

Per seat

Per token

Per action (credits)

What matters is:

How often you’ll use it

Whether it scales with your workflow

A cheap tool can become expensive quickly if it’s usage-based.
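A quick back-of-the-envelope comparison makes the point concrete. The prices below are hypothetical and chosen only for illustration; they do not come from any vendor in this article. Amounts are kept in cents so the arithmetic stays exact.

```python
def seat_cost_cents(seats: int, cents_per_seat: int) -> int:
    """Flat per-seat billing: the cost does not depend on usage."""
    return seats * cents_per_seat


def usage_cost_cents(actions: int, cents_per_action: int) -> int:
    """Usage-based billing: the cost grows with every action (credit or request)."""
    return actions * cents_per_action


def break_even_actions(cents_per_seat: int, cents_per_action: int) -> float:
    """Monthly actions per developer at which usage billing matches the seat price."""
    return cents_per_seat / cents_per_action


# Hypothetical prices, for illustration only.
SEAT = 2000   # $20.00 per developer per month
ACTION = 5    # $0.05 per action

print(f"break-even: {break_even_actions(SEAT, ACTION):.0f} actions per month")
for actions in (100, 400, 1500):
    usage = usage_cost_cents(actions, ACTION)
    print(f"{actions} actions: seat ${SEAT / 100:.2f} vs usage ${usage / 100:.2f}")
```

Under these assumed prices, a light user at 100 actions pays $5.00 on the usage plan, while a heavy user at 1,500 actions pays $75.00, well above the $20.00 seat price. Estimating your own monthly action count before choosing a plan is the useful part of the exercise.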

What stage of development it actually solves

This is the most overlooked point.

Different tools dominate different stages:

Writing code: Copilot-style tools

Editing systems: Cursor, Windsurf

Reviewing code: Qodo

Understanding codebases: Cody

Automating workflows: Codex

If you pick the wrong tool for your stage, it will feel underwhelming no matter how good it is.
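If you want to keep this mapping handy, for example in an internal tooling guide, it reduces to a simple lookup. The sketch below copies the stage-to-tool pairs from the list above; the dictionary and function names are illustrative.

```python
from typing import Dict, List

# Stage-to-tool mapping, copied from the list above.
TOOLS_BY_STAGE: Dict[str, List[str]] = {
    "writing code": ["Copilot-style tools"],
    "editing systems": ["Cursor", "Windsurf"],
    "reviewing code": ["Qodo"],
    "understanding codebases": ["Cody"],
    "automating workflows": ["Codex"],
}


def tools_for(stage: str) -> List[str]:
    """Return the tools listed for a stage, or an empty list if it is unknown."""
    return TOOLS_BY_STAGE.get(stage.strip().lower(), [])


print(tools_for("Reviewing code"))
```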

The shift beyond coding assistants: Understanding vibe coding

What is vibe coding?

Vibe coding is the shift from writing code step by step to describing what you want and letting systems build it.

Instead of:

Writing functions

Debugging errors

Wiring APIs

You:

Describe intent

Refine outcomes

Guide the system

It’s not about replacing developers; it’s about changing the interface of development itself.

What is Emergent?

Emergent sits in a completely different category from every tool we’ve discussed so far.

While tools like Copilot, Cursor, or Claude Code help you write and manage code, Emergent helps you build complete applications from prompts.

The core idea is simple:

Build apps and websites with prompts, not code

You describe:

What the app should do

How users should interact

What workflows it should support

And Emergent generates:

Frontend

Backend

Data models

Auth

APIs

All in one flow.

Positioning against all tools (not just Copilot)

Every tool in this article improves how you code.

Emergent redefines the equation by making you question whether you need to code at all.

Copilot - faster typing

Cursor - smarter editing

Claude Code - better reasoning

Qodo - better validation

Cody - better understanding

Emergent - shipping actual products

It’s not competing at the same layer. It’s operating one level above.

How Emergent goes beyond AI coding assistants and makes developers’ lives easier

The shift with Emergent is structural, not incremental.

From autocomplete to full app generation

Traditional tools help you write code faster. Emergent removes the need to write most of it.

You move from completing thousands of lines of code to generating entire applications from a prompt.

This is not just about working faster; it fundamentally changes how the workflow operates.

From coding to product building

Most developers don’t just want code; they want working products.

Emergent focuses on:

User flows

Features

End-to-end functionality

Instead of assembling pieces manually, you start with the outcome and work backward.

From developer to creator

When the barrier to building drops, the role expands.

You’re no longer limited by:

Boilerplate

Setup time

Infrastructure complexity

You can move directly from idea -> implementation -> iteration.

Prompts to app

You describe what you want, and the system generates a working application with UI, backend logic, and data handling.

This removes the need to stitch together multiple tools just to get something running.

Built-in hosting

Unlike traditional workflows, where deployment is a separate step, Emergent includes hosting as part of the process.

You don’t need to configure servers, pipelines, or environments separately.

Automation workflows

Emergent allows you to define workflows and logic at a higher level, automating processes that would otherwise require custom code.

This is especially useful for internal tools, dashboards, and operational apps.

No DevOps needed

One of the biggest hidden costs in development is infrastructure.

Emergent abstracts away:

Deployment

Scaling

Environment setup

So you can focus on building, not maintaining systems.

Conclusion

GitHub Copilot opened the door to AI-assisted coding, but it’s no longer the ceiling.

Today’s landscape is broader and more specialized:

Some tools help you write code faster

Others help you understand and manage systems

And a new category is emerging that helps you build entire products

The right choice depends on what you’re actually trying to do.

If your workflow is still centered around writing code, tools like Cursor, Claude Code, or Codex can significantly improve productivity.

But if your goal is to ship products faster, reduce complexity, and move beyond code as the bottleneck, then platforms like Emergent represent a fundamentally different path.

FAQs

1. Are there open-source alternatives?

Yes. Self-hosted models offer open-source options, and tools like Codeium (Windsurf), though not open source themselves, offer a flexible alternative. Capabilities vary from tool to tool.